Remote IT Security Report: Web Resilience Analysis

The State of Remote IT Infrastructure: Web Security Resilience Across 500 Agencies

The Paradigm Shift in Remote Information Technology and Web Security

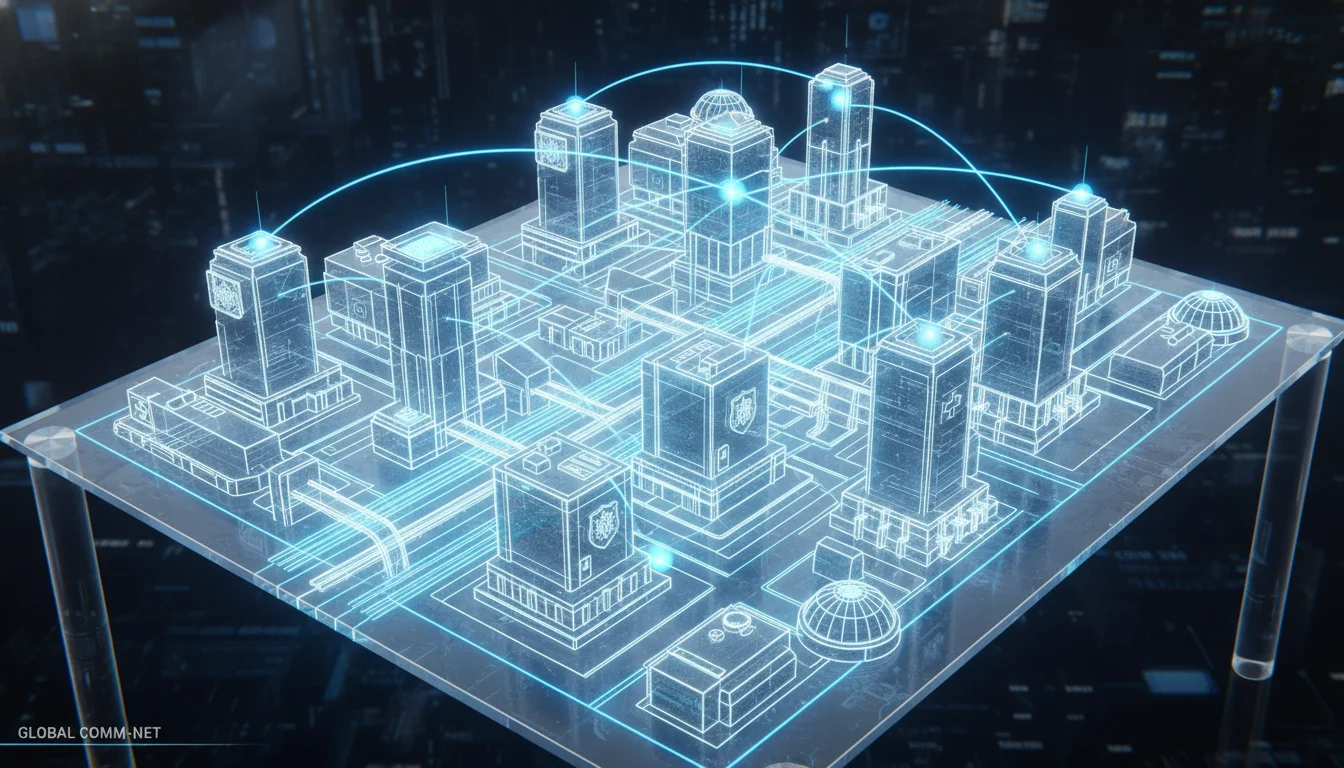

The fundamental architecture of information technology within government agencies, critical infrastructure sectors, and their private-sector partners has undergone a tectonic shift over the past decade, accelerating at an unprecedented rate due to the normalization of hybrid work models. The traditional, highly centralized network perimeter has been rendered largely obsolete. Today, the operational infrastructure of public and private sector organizations is characterized by decentralized operations, cloud-native application development, and an exponentially expanding attack surface that defies traditional defensive postures.

In the contemporary administrative environment, the hybrid work model has become the operational norm. It combines tightly regulated on-site presence with widespread remote access. Employees, contractors, and agency personnel increasingly connect to highly sensitive professional computing environments from a large and constantly changing pool of personal devices and mobile networks, many of them poorly secured. This transition has moved information workers outside the defensible boundaries of centralized office networks. The model eases commuting burdens and preserves some of the collaborative benefits of face-to-face interaction for complex problem solving. However, it fundamentally disrupts centralized technological oversight.

Consequently, organizations are experiencing profound friction in executing core functions. Companies and government entities report substantial difficulties in managing projects, maintaining secure communication channels, and tracking individual tasks across widely distributed, remote teams. Without continuous physical presence, organizations must acquire and deploy specialized, cloud-based project management software to assign tasks and track progress. However, every new software acquisition introduces third-party risk and extends the network boundary, which changes how remote IT workforces must be secured. Many organizations now rely on a managed IT security provider simply to secure company endpoints and maintain basic network hygiene.

The Fallacy of the Perimeter and the Chronic Nature of Cyber Threats

The dissolution of the traditional, well-defined corporate network has exposed a critical weakness in legacy defensive methodologies. Security models that concentrate on protecting localized network domains have repeatedly failed the organizations that depend on them. Cybersecurity experts and industry analysts have noted that relying exclusively on endpoint security tools is a flawed, unsustainable strategy. As the security firm Absolute articulated, “one hundred percent of endpoint security tools eventually fail reliably and predictably”. This operational reality requires a fundamental change in how digital infrastructure is governed. Rather than attempting to build impenetrable digital walls around a perimeter that no longer exists, defensive efforts must shift toward intelligent network monitoring, continuous behavioral anomaly detection, and automated, AI-driven remediation.
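The Python below is a minimal sketch of continuous behavioral anomaly detection. It flags a user whose daily login count rises far above that user's own historical baseline. It assumes a simple z-score rule with a hypothetical threshold of three standard deviations. This is a basic statistical stand-in, not the AI-driven tooling described above, and a real deployment would model many more signals than login counts.

```python
from statistics import mean, pstdev


def is_anomalous(history: list[int], today: int, threshold: float = 3.0) -> bool:
    """Return True if today's count is more than `threshold` standard
    deviations above the user's historical mean (upward spikes only)."""
    if len(history) < 2:
        return False  # too little data to establish a baseline
    mu = mean(history)
    sigma = pstdev(history)
    if sigma == 0:
        # A perfectly flat baseline: any increase is unusual.
        return today > mu
    return (today - mu) / sigma > threshold


baseline = [4, 5, 6, 5, 4, 5, 6, 5]  # hypothetical daily login counts
print(is_anomalous(baseline, 40))  # True
print(is_anomalous(baseline, 6))   # False
```

With this baseline, a user who normally logs in about five times a day is flagged after 40 logins but not after six.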

This transition in defensive philosophy is heavily complicated by a profound misunderstanding of the modern threat environment, often exacerbated by sensationalist popular media. The cybersecurity landscape is frequently, and erroneously, framed as awaiting a singular, catastrophic event—a “Cyber 9/11”—with commentators relying on the tired cliché that a devastating attack is “not a matter of if, but when”. This perspective is analytically flawed and operationally dangerous. The actual threat environment is not characterized solely by acute disasters, but rather by chronic, systemic vulnerabilities.

To conceptualize this, industry analysts frequently draw parallels to the aviation safety and food inspection industries, where operational complacency often precedes disaster. Many historic airline accidents were the culmination of a string of minor crew errors or localized mechanical flaws, rather than a single, catastrophic external attack. For example, Air France Flight 447 crashed into the Atlantic Ocean in 2009. Ice crystals had obstructed its pitot tubes, producing unreliable airspeed readings that disoriented the crew and led to an aerodynamic stall. Similarly, American Airlines Flight 191 lost its left engine on takeoff from Chicago O’Hare in 1979. The crash was traced to improper maintenance procedures that had damaged the engine pylon. Once the root causes of such tragedies are pinpointed, industry-wide improvements typically follow, and these have contributed to a drastic reduction in major jet crashes.

However, in the field of cybersecurity today, an upswing in successful malicious actions is ironically accompanied by a relative complacency regarding foundational hygiene. Addressing a chronic digital condition requires continuous, systemic health monitoring, rigorous patch management, and strict identity verification, rather than merely fortifying boundaries against a singular, devastating breach.

Sector-Specific Infrastructure Architectures and 500-Agency Clusters

To understand the practical implications of remote IT and web security, it is necessary to examine the operational realities of distinct cohorts of government and public-sector agencies. Each case examined below centers on an operational hub or network that serves roughly 500 distinct agencies. At that scale, highly complex, multi-tenant security architectures become a functional requirement.

Law Enforcement and First Responder Networks

At the municipal and state levels, emergency services represent the primary protectors of all other critical infrastructure sectors. The operations within this sector—comprising police, fire departments, emergency medical services (EMS), emergency management, and public works—are highly distributed by nature and heavily reliant on immediate, secure access to centralized data. These services provide immediate, life-saving responses during emergent situations and mitigate risks from both manmade and natural threats.

In jurisdictions such as the state of New Jersey, the scale of this distributed network is immense. The law enforcement sector alone comprises approximately 30,000 active, full-time, sworn officers distributed across more than 500 distinct agencies. Furthermore, the New Jersey State Firemen’s Association reports roughly 68,000 career and volunteer firefighters staffing over 530 independent departments. The New Jersey Department of Health, Office of Emergency Medical Services, certifies more than 26,000 Emergency Medical Technicians (EMTs) and 1,700 active Mobile Intensive Care Paramedics, who operate more than 4,500 highly connected EMS vehicles throughout the state.

The volume of remote endpoints in this environment—ranging from ruggedized mobile data terminals in police cruisers to connected biometric sensors on EMS personnel—creates an extraordinarily complex web security challenge. These personnel operate in harsh, dynamic environments where secure telework practices must be rigorously enforced despite physical stressors. State cybersecurity agencies have had to issue highly specific directives for teleworkers and administrative staff supporting these operations. These directives emphasize remote access security, the prevention of virtual meeting intrusions (e.g., “Zoom bombing”), and the secure utilization of video teleconferencing platforms. Furthermore, securing these distributed networks requires meticulous network segmentation, the deployment of robust Virtual Private Networks (VPNs), and strict configuration guidelines for the home Wi-Fi routers utilized by off-site support staff.
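Router guidance of this kind can be expressed as an automated check. The sketch below audits a teleworker's home router against a handful of common hardening rules. The `RouterConfig` fields, the accepted encryption modes, and the 180-day firmware threshold are hypothetical assumptions. None of them is taken from any state directive.

```python
from dataclasses import dataclass


@dataclass
class RouterConfig:
    """Hypothetical snapshot of a teleworker's home router settings."""
    wifi_security: str            # e.g. "WPA3", "WPA2", "WEP", "OPEN"
    admin_password_changed: bool  # has the factory default been replaced?
    wps_enabled: bool             # Wi-Fi Protected Setup
    remote_admin_enabled: bool    # management interface reachable from the internet
    firmware_age_days: int        # days since the last firmware update


ACCEPTED_WIFI_SECURITY = {"WPA2", "WPA3"}


def audit_router(cfg: RouterConfig, max_firmware_age_days: int = 180) -> list[str]:
    """Return a list of findings; an empty list means no rule was violated."""
    findings = []
    if cfg.wifi_security.upper() not in ACCEPTED_WIFI_SECURITY:
        findings.append(f"weak or no Wi-Fi encryption: {cfg.wifi_security}")
    if not cfg.admin_password_changed:
        findings.append("default administrator password still in use")
    if cfg.wps_enabled:
        findings.append("WPS is enabled")
    if cfg.remote_admin_enabled:
        findings.append("remote administration is exposed to the internet")
    if cfg.firmware_age_days > max_firmware_age_days:
        findings.append(f"firmware last updated {cfg.firmware_age_days} days ago")
    return findings


home = RouterConfig("WEP", False, True, False, 400)
for finding in audit_router(home):
    print("-", finding)
```

Returning a list of findings rather than a single pass/fail lets support staff tell the teleworker exactly what to change.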

Emergency Communications and Transportation Networks

The physical and digital infrastructures of modern agencies are inextricably linked, a reality clearly demonstrated by large-scale, statewide emergency communications networks. For example, the WyoLink system serves over 500 distinct agencies and entities, functioning as a critical emergency communications backbone. Its diverse user base includes, among others:

- 2 federal agencies

- 68 emergency medical services (including ambulances and medical centers)

- 20 state-level agencies

- 44 educational institutions

- 144 local government agencies

- 19 private entities (such as mine rescue operations and Life Flight services)

This infrastructure does not merely support basic voice communications; it provides the crucial microwave backhaul necessary for modern Intelligent Transportation Systems (ITS). The network integrates highly sensitive, interconnected roadside devices, including connected vehicles, variable speed limit signs, dynamic messaging systems, remote weather stations, and webcams, while simultaneously routing road reports from snowplow drivers and highway patrol officers.

Every single interconnected device and dispatch center represents a potential ingress point for malicious actors.

Securing a network of this scale requires substantial, continuous capital investment and rigorous maintenance to preserve its effectiveness. Wyoming has invested over $135 million in the capitalization, maintenance, and operational costs of this long-term project. Part of this investment funds cybersecurity testing for the entire system, including local dispatch centers. Because these local centers frequently rely on Windows-based platforms, routine and prompt application of security patches is mandatory. The system is covered by an annual upgrade agreement designed to keep it current. Past upgrade cycles included the migration to Windows 10 before support for earlier Windows versions expired, along with the replacement of hardware components that could no longer support modern security protocols.
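A patch-compliance report of the kind such an upgrade agreement depends on might look like the following sketch. The `Workstation` record, the hostnames, the dates, and the 30-day patch window are all hypothetical. An actual inventory would come from the agency's endpoint management tooling.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Workstation:
    """Hypothetical inventory record for a dispatch-center workstation."""
    hostname: str
    os_supported: bool  # does the OS vendor still ship security updates?
    last_patched: date


def patch_exceptions(fleet: list[Workstation], today: date, max_days: int = 30) -> list[str]:
    """List workstations that run an unsupported OS or have missed the patch window."""
    exceptions = []
    for ws in fleet:
        age = (today - ws.last_patched).days
        if not ws.os_supported:
            exceptions.append(f"{ws.hostname}: operating system past end of support")
        elif age > max_days:
            exceptions.append(f"{ws.hostname}: last patched {age} days ago")
    return exceptions


fleet = [
    Workstation("dispatch-01", True, date(2024, 5, 20)),
    Workstation("dispatch-02", True, date(2024, 2, 1)),
    Workstation("dispatch-03", False, date(2024, 5, 28)),
]
for line in patch_exceptions(fleet, today=date(2024, 6, 1)):
    print(line)
```

An unsupported operating system is reported even if it was patched recently. Once the vendor stops shipping fixes, a recent patch date no longer means the host is current.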

The intersection of physical safety and IT infrastructure is further emphasized by organizations like the National Policing Institute. Its Academic Training to Inform Police Responses initiative, sponsored by the Bureau of Justice Assistance, has reached 1,600 officers across more than 500 agencies. This program focuses heavily on roadway safety and operations. When officers operate on roadways, their safety is deeply tied to the reliability of digital systems, from dispatch communications to dynamic messaging signs that warn oncoming traffic. If the underlying IT infrastructure is compromised via web vulnerabilities, the physical safety of officers engaging in these roadway operations is directly threatened.

Centralized Data Processing and Civic Transactions

The administrative functions of government, such as vital records management and municipal citation processing, further illustrate the massive scale of modern web security challenges. Systems like VITALIQ, operated by LexisNexis VitalChek, serve more than 500 vital record agencies across 47 states, the District of Columbia, Puerto Rico, and American Samoa. Over nearly four decades, VitalChek has become the largest provider of vital record processing services. It maintains the electronic database of vital record events and has improved the speed, efficiency, and security of these requests.

These systems process the most sensitive Personally Identifiable Information (PII) available, including birth, death, and marriage records. Consequently, the digital architecture must align with rigorous National Center for Health Statistics (NCHS) 2003 edit specifications and National Model Law standards. The migration of vital records from localized, physically secured servers to vendor-hosted electronic registration systems highlights the absolute reliance on secure, remote cloud architectures. The core VITALIQ product is reported to meet 85% to 90% of any state’s vital events requirements, helping standardize security protocols across hundreds of fragmented jurisdictions.

Similarly, the collection of delinquent civic citations involves vast networks of data exchange. Service providers like Data Ticket/Phoenix Group interface with over 500 municipalities and agencies nationwide and process millions of highly sensitive financial transactions. The service began in 1989 by collecting delinquent citations and managing California DMV registration holds, and it has since evolved into a massive digital clearinghouse. Securing these web interfaces requires strict, verifiable adherence to industry-recognized standards. Payment processing portals implement third-party security certifications such as McAfee SECURE and undergo daily testing against the FBI/SANS Internet Security Tests. These infrastructures use SSL/TLS encryption backed by certificates from established authorities (e.g., VeriSign). This protects credit card data (Visa, MasterCard, American Express, Discover) from interception in transit, with the stated aim of continuously meeting the security scanning standards used by the United States government.
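The transport protections described above can be sketched with Python's standard `ssl` module. The code below builds a client context that verifies certificates and hostnames and refuses protocol versions older than TLS 1.2. The original SSL protocols are deprecated, although the name persists. The code also computes the number of days until a certificate expires, which is one check a daily scan might include. The hostname passed to `check_portal` is a placeholder assumption. This is a sketch, not a reproduction of any certification vendor's test.

```python
import socket
import ssl
import time


def payment_tls_context() -> ssl.SSLContext:
    """Client-side TLS settings for connecting to a payment portal."""
    ctx = ssl.create_default_context()  # certificate and hostname verification on by default
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2  # refuse SSLv3, TLS 1.0 and TLS 1.1
    return ctx


def days_until_expiry(not_after: str, now: float) -> int:
    """Whole days until a certificate's notAfter time (the format getpeercert() returns)."""
    return int((ssl.cert_time_to_seconds(not_after) - now) // 86400)


def check_portal(host: str, port: int = 443) -> tuple[str, int]:
    """Connect to `host` and return the negotiated TLS version and days to certificate expiry.
    Raises ssl.SSLError (or a subclass) if the certificate or protocol is unacceptable."""
    ctx = payment_tls_context()
    with socket.create_connection((host, port), timeout=10) as sock:
        with ctx.wrap_socket(sock, server_hostname=host) as tls:
            cert = tls.getpeercert()
            return tls.version(), days_until_expiry(cert["notAfter"], time.time())
```

A scheduled job could call `check_portal("payments.example.gov")` once a day, using that placeholder host. It would alert on a handshake failure or on an expiry date that falls within a chosen margin.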

Inter-Agency Data Sharing Architectures and Non-Profit Networks

State welfare and eligibility systems demonstrate the extreme complexity of inter-agency remote IT management. The State of Indiana’s Division of Family Resources (DFR) manages benefits through complex, multi-portal architectures that include the IMPACT and IEDSS system components. The infrastructure is massive. A Benefits Portal interacts with over 2 million clients and authorized representatives, and an Agency Portal serves approximately 500 approved non-DFR agencies. Specialized worker portals support 3,500 E&E workers.

The architecture relies heavily on batch interfaces, strict access controls, and complex mailings made available through a Document Center and the CDMS system. To manage the risk inherent in interfacing with 500 external agencies, strict architectural boundaries are enforced. Apart from specific, closely scrutinized interfaces, the underlying infrastructure for particular third-party solutions (such as PostMasters) is intentionally isolated and not supported on the main state network. This is network isolation rather than a true air gap, since the approved interfaces remain connected. It is designed to limit lateral movement across the network if one of the 500 connected agencies is compromised.
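The isolation principle above amounts to default-deny segmentation. Only explicitly listed flows are permitted, so a compromised segment can reach only what the allow-list exposes. The sketch below models this with hypothetical segment names, where `third_party_mailing` stands in for an isolated third-party system. It computes the "blast radius" reachable from a compromised segment. Flows are treated as one-directional, which is a simplifying assumption.

```python
from collections import deque

# Hypothetical allow-list of permitted flows: (source segment, destination segment, port).
# Anything not listed is denied by default.
ALLOWED_FLOWS = {
    ("agency_portal", "interface_gateway", 443),
    ("interface_gateway", "eligibility_core", 443),
    ("worker_portal", "eligibility_core", 443),
}
# "third_party_mailing" appears in no rule, so it is fully isolated.


def is_flow_allowed(src: str, dst: str, port: int) -> bool:
    """Default deny: permit only flows that match an explicit rule."""
    return (src, dst, port) in ALLOWED_FLOWS


def blast_radius(compromised: str) -> set[str]:
    """Segments an attacker could reach from `compromised` by chaining allowed flows."""
    graph: dict[str, set[str]] = {}
    for src, dst, _port in ALLOWED_FLOWS:
        graph.setdefault(src, set()).add(dst)
    seen = {compromised}
    queue = deque([compromised])
    while queue:
        for nxt in graph.get(queue.popleft(), set()):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    seen.discard(compromised)
    return seen


print(sorted(blast_radius("agency_portal")))  # ['eligibility_core', 'interface_gateway']
print(blast_radius("third_party_mailing"))    # set()
```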

Beyond strict government silos, non-profit ecosystems also operate 500-agency networks that handle highly sensitive data, particularly data involving minors. The Big Brothers Big Sisters (BBBS) program, which is the most extensive mentoring program in the United States, operates through more than 500 agencies nationwide. The program matches young people (typically ages 10 to 16) with young adult mentors. This requires collecting, storing, and transmitting highly sensitive background checks, personal behavioral data, and location information for meetings. Securing this data across a decentralized network of 500 independent non-profit agencies requires enterprise-grade security protocols. These mirror the complexity of government systems, but the agencies often operate under significantly tighter budgets. As a result, they must make explicit trade-offs between operational reach and stringent data security.

The government-linked clusters examined above can be summarized as follows:

| Cluster | Scale | Primary data | Security architecture |
|---|---|---|---|
| Emergency Services (NJ State) | >500 police agencies; ~30,000 police; ~68,000 firefighters | Criminal justice data, EMS biometrics | Network segmentation, VPNs, strict telework and Wi-Fi router policies |
| WyoLink Communications | >500 entities, including 144 local government agencies | Emergency dispatch, ITS, road reports | Continuous Windows patching; over $135M invested in capitalization, maintenance, and operations |
| Vital Records (VitalChek) | >500 agencies; 47 states plus DC, Puerto Rico, and American Samoa | PII (birth, death, marriage records) | Vendor-hosted cloud registration, NCHS standards compliance |
| Citation Processing (Data Ticket/Phoenix Group) | >500 municipalities and agencies | Payment card data; DMV registration holds | McAfee SECURE, daily FBI/SANS testing, VeriSign SSL encryption |
| Benefits/Welfare (Indiana DFR) | ~500 non-DFR agencies; 2M+ clients | Eligibility data, financial benefits | Segmented portals, isolated third-party infrastructure |

The Threat Vector Ecosystem and Attack Methodologies

The threat landscape facing these complex 500-agency ecosystems is intensely multifaceted, characterized by highly sophisticated threat actors who exploit both deeply technical vulnerabilities and fundamental human errors. An analysis of recent attack vectors reveals distinct, recurring patterns in how remote IT infrastructure is systematically compromised.

Supply Chain Cascades and Ransomware Escalation

The most devastating cyber incidents in recent years have actively leveraged the deep interconnectivity of the modern digital supply chain. Attackers have strategically recognized that penetrating a single Managed Service Provider (MSP) or ubiquitous software vendor can provide immediate, unrestricted, and highly privileged access to hundreds or thousands of downstream agencies simultaneously.

The SolarWinds breach serves as the foundational case study for this paradigm. Highly sophisticated attackers compromised the software build environment of the SolarWinds Orion IT monitoring platform. They inserted malware, known as SUNBURST, into legitimate, digitally signed software updates. Because the updates were genuine and signed, the threat actors penetrated numerous Fortune 500 companies and critical U.S. government agencies while entirely bypassing traditional perimeter defenses. A tool deployed to monitor and manage the network became the very vehicle for the attack.

Similarly, the 2021 Kaseya ransomware attack exploited Kaseya’s VSA remote-management software, which managed service providers use to administer their clients’ systems. The ransomware payload propagated rapidly through MSPs and crippled up to 1,500 downstream businesses and organizations. These incidents highlight the extreme fragility of trust-based IT architectures.

This vulnerability to foundational compromise was further demonstrated in the Colonial Pipeline incident. In this case, hackers simply utilized a single compromised password to gain access to a legacy VPN, allowing them to initiate a ransomware attack that resulted in the proactive shutdown of a 5,500-mile fuel pipeline vital to the United States East Coast. The fact that a single credential failure can result in the catastrophic disruption of physical infrastructure underscores how the chronic failure of basic credential hygiene—such as the lack of Multi-Factor Authentication (MFA)—can yield acute, devastating results.
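Multi-factor authentication adds a second proof of identity, so a stolen password alone is not enough to log in. One widely used second factor is a time-based one-time password (TOTP, RFC 6238), which the sketch below implements with the Python standard library. It assumes SHA-1, 30-second time steps, and a one-step window for clock drift. It illustrates the mechanism only and does not describe any particular VPN product's MFA.

```python
import hashlib
import hmac
import struct
import time
from typing import Optional


def totp(secret: bytes, for_time: float, step: int = 30, digits: int = 6) -> str:
    """Time-based one-time password per RFC 6238, using HMAC-SHA1."""
    counter = int(for_time // step)  # number of elapsed time steps
    mac = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = mac[-1] & 0x0F  # dynamic truncation from RFC 4226
    value = struct.unpack(">I", mac[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(value % 10 ** digits).zfill(digits)


def verify_totp(secret: bytes, submitted: str, now: Optional[float] = None,
                step: int = 30, window: int = 1) -> bool:
    """Accept a code from the current step or up to `window` steps either side."""
    now = time.time() if now is None else now
    return any(
        hmac.compare_digest(totp(secret, now + k * step, step), submitted)
        for k in range(-window, window + 1)
    )


# RFC 6238 Appendix B test vector (SHA-1 seed, T = 59 s, 8 digits).
print(totp(b"12345678901234567890", 59, digits=8))  # 94287082
```

`hmac.compare_digest` performs a constant-time comparison, so response timing does not reveal how many leading digits of a guess were correct.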

The Proliferation of API Vulnerabilities

As organizations transition aggressively to cloud-native architectures, monolithic legacy applications have been deconstructed into agile microservices that communicate continuously via Application Programming Interfaces (APIs).

APIs are now ubiquitous across modern infrastructure; they allow disparate software systems to communicate and share data seamlessly, both within internal enterprise networks and across external organizational boundaries. Currently, industry data reveals that more than 83% of all internet traffic involves API calls.

The API ecosystem drives massive operational efficiency and enables the 500-agency portals used by states like Indiana, but it also fundamentally expands the digital attack surface. In the first half of 2022, attacks against API services surged by 168%. The openness of an API-driven ecosystem introduces multifaceted risk whenever an organization transmits data to a third party. Agencies must manage technical controls such as payload encryption, rigorous rate limiting, and token-based authentication. They must also manage complex contractual risks regarding data handling, storage duration, and privacy compliance. Poorly configured APIs, particularly those with broken object-level authorization (BOLA), can expose entire backend databases to unauthorized extraction. Such requests often pass straight through traditional web application firewalls that are not tuned to inspect API logic.
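Broken object-level authorization occurs when an API checks that a caller is authenticated but not that the caller is entitled to the specific record requested. The sketch below adds the missing check to a hypothetical multi-agency case API. The `Case` record, the in-memory `CASES` store, and the agency identifiers are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class Case:
    case_id: str
    agency_id: str  # the agency that owns this record


class NotFound(Exception):
    pass


class Forbidden(Exception):
    pass


# Hypothetical in-memory store standing in for a backend database.
CASES = {
    "c-100": Case("c-100", "agency-17"),
    "c-200": Case("c-200", "agency-42"),
}


def get_case(case_id: str, caller_agency: str) -> Case:
    """Handler body for a request such as GET /cases/{case_id}; `caller_agency`
    is assumed to come from an access token that has already been validated."""
    case = CASES.get(case_id)
    if case is None:
        raise NotFound(case_id)
    # Object-level check: a valid token is not enough; the caller must own the record.
    # Omitting this check is exactly the BOLA flaw.
    if case.agency_id != caller_agency:
        raise Forbidden(case_id)
    return case
```

Some designs return the same not-found error in both failure cases, so callers cannot probe which record IDs exist.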

Legacy Protocols, Skimming, and Persistent Malware

Despite the intense focus on advanced, cloud-native threats, legacy vulnerabilities remain highly exploitable and frequently targeted. Historically, web traffic relied almost exclusively on port 80 for HTTP communication. A study of the state of web security by Internet Security Systems (ISSX) found that 70% of all network intrusion attempts targeted port 80. Blocking port 80 at the firewall would have disrupted essential web traffic, so it was left widely open. Modern systems now enforce HTTPS over port 443, but the same dynamic persists. This historical context shows the inherent difficulty of securing necessary operational pathways. Attackers will consistently target the exact communication channels that organizations are operationally required to keep open.
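One common way to keep port 80 open under an HTTPS-only policy is to let port 80 serve nothing except permanent redirects to HTTPS. The sketch below uses Python's `http.server`. The handler name is hypothetical, and the host-parsing shortcut, which does not handle IPv6 literals, is a simplifying assumption.

```python
from http.server import BaseHTTPRequestHandler, HTTPServer
from urllib.parse import urlsplit, urlunsplit


def https_redirect_target(host: str, request_target: str) -> str:
    """Build the HTTPS URL for a plain-HTTP request, keeping its path and query."""
    parts = urlsplit(request_target)
    return urlunsplit(("https", host, parts.path or "/", parts.query, ""))


class RedirectToHTTPS(BaseHTTPRequestHandler):
    """Port 80 handler that serves nothing except permanent redirects to HTTPS."""

    def do_GET(self) -> None:
        # Drop any ":80" suffix; this shortcut does not handle IPv6 literals.
        host = (self.headers.get("Host") or "localhost").split(":")[0]
        self.send_response(301)
        self.send_header("Location", https_redirect_target(host, self.path))
        self.send_header("Content-Length", "0")
        self.end_headers()

    do_HEAD = do_GET


def serve(port: int = 80) -> None:
    """Run the redirector (binding port 80 usually requires elevated privileges)."""
    HTTPServer(("", port), RedirectToHTTPS).serve_forever()
```

A production deployment would typically redirect to a fixed canonical hostname rather than trust the client's `Host` header. It would also send `Strict-Transport-Security` on its HTTPS responses, because browsers ignore that header when it arrives over plain HTTP.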

In the contemporary landscape, this dynamic is clearly evident in the prevalence of advanced web skimming and malware campaigns. Web security is frequently compromised because many agencies and development shops fail to strictly validate external content before it loads into user browsers. This chronic vulnerability allows attackers to inject malicious JavaScript into seemingly secure, trusted websites. Historically, skimmers were placed directly on checkout forms to harvest payment data. Threat actors have since evolved their tactics: skimmers now impersonate legitimate payment processors, using sophisticated social engineering and phishing-like tricks to deceive users within dynamic web environments. This places heavy pressure on municipal payment portals that process citations and utility bills.
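Two browser mechanisms address the failure to validate external content. Subresource Integrity (SRI) makes the browser refuse a third-party script whose hash does not match an expected value. A Content Security Policy (CSP) limits where scripts may load from. The sketch below generates both; the CDN origin and script contents are placeholders. These controls narrow the skimming risk but do not eliminate it, for example when malicious code is injected into files the site itself serves.

```python
import base64
import hashlib


def sri_hash(content: bytes) -> str:
    """Subresource Integrity value: algorithm prefix plus base64 SHA-384 digest."""
    return "sha384-" + base64.b64encode(hashlib.sha384(content).digest()).decode("ascii")


def script_tag(src: str, content: bytes) -> str:
    """Script tag the browser will refuse to run if the fetched file differs from `content`."""
    return (f'<script src="{src}" integrity="{sri_hash(content)}" '
            'crossorigin="anonymous"></script>')


def csp_header(script_origins: list[str]) -> str:
    """Content-Security-Policy value allowing scripts only from this site and listed origins."""
    sources = " ".join(["'self'", *script_origins])
    return f"script-src {sources}; object-src 'none'; base-uri 'self'"


vetted = b"console.log('payment widget');"  # stand-in for a reviewed third-party script
print(script_tag("https://cdn.example.com/widget.js", vetted))
print(csp_header(["https://cdn.example.com"]))
```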

Concurrently, persistent and adaptive malware strains continue to plague government agencies and critical infrastructure across the globe. Cybersecurity alerts from international bodies, such as the Australian Cyber Security Centre (ACSC), have highlighted ongoing campaigns by the Emotet botnet specifically targeting critical infrastructure and government agencies across Australia and New Zealand. While overall detection rates for certain malware types fluctuate—for instance, Singapore experienced a 4% decrease in overall detections, replaced by a surge in consumer adware and cryptominers—the business and administrative sectors remain heavily targeted by sophisticated Trojans like FakeAlert. This constant rotation of payloads indicates that threat actors are continuously refining their methodologies to evade traditional endpoint detection systems.

The Corporate Tech Response and Cloud-Native Defense Strategies

In response to the rapidly escalating and diversifying threat landscape, agencies and their corporate technology partners are fundamentally restructuring their defensive architectures. The sheer volume and complexity of remote web traffic necessitate scalable, automated security solutions that traditional, on-premises hardware appliances simply cannot provide.

Consequently, there has been a massive acceleration in the adoption of cloud-based security technologies. Recent surveys of the state of web security found that companies of various sizes increased their use of advanced web security tools by between 133% and 214% in 2022, compared with their previous rates. The most significant architectural shift is the migration toward native cloud defenses. Currently, 64% of surveyed organizations use a native Web Application Firewall (WAF) provided directly by their cloud service provider. This native integration reduces network latency, simplifies global deployment, and allows security policies to scale dynamically alongside fluctuating cloud workloads.

Furthermore, there is a pronounced, industry-wide trend toward technological consolidation. The deployment of Unified Security Solutions—which seamlessly integrate WAF capabilities, API protection, bot mitigation, and Distributed Denial of Service (DDoS) defense into a single, comprehensive platform—is experiencing the second-highest growth rate in the sector, with companies expecting usage rates to increase by up to 150% over baseline levels. Providers like Reblaze have built extensive, global client bases, including Fortune 500 companies and innovative organizations, by offering these comprehensive, cloud-based defensive perimeters.

Similarly, premium managed hosting platforms, such as those provided by WP Engine, have become critical for agencies that lack the internal engineering personnel to manage complex web environments. WP Engine hosts digital experiences built on WordPress. It offers the performance, reliability, and enterprise-grade security demanded by the biggest brands while remaining affordable for smaller entities. These platforms effectively outsource the daily demands of web security patching and threat mitigation. This infrastructure also underpins digital marketing and content operations. For instance, link-building and content marketing campaigns run for more than 500 agencies across 12 countries rely on such secure, scalable hosting. This prevents ostensibly harmless marketing channels from becoming a route for supply chain compromise.

The broader responsibility of defining and enforcing the state of web security is often led by the technology titans. Fortune 500 technology companies—specifically computer and internet vendors like Apple, Hewlett Packard (HP), Google, International Business Machines (IBM), Microsoft, and Amazon—play an outsized role in shaping global cybersecurity discourse and baseline standards. A meticulous analysis of their corporate security blogs and policy pages reveals a highly concentrated rhetorical focus on themes such as “make users safer,” building a “more secure browser,” and actively “improving the state of web security.”

A critical area of debate and policy divergence among these entities is vulnerability disclosure. “Reasonable disclosure deadlines” are widely debated as a way to protect end users while giving developers time to remediate flaws. However, the major vendors differ sharply in rhetoric and policy. The public dispute between Google and Microsoft over strict patching deadlines, for example, highlights the friction between the rapid pace of threat actors and the operational realities of software development. When a company argues that vendors should be held to strict patching deadlines, it directly affects the vulnerability window for the thousands of agencies relying on their enterprise software.

Human Capital, Insider Threats, and AI Integration

While advanced technological architectures are foundational to web security, the human element remains the most unpredictable, heavily exploited, and arguably critical vulnerability. The rapid transition to remote IT infrastructure has severely exacerbated the risks associated with human error, poor credential hygiene, and both negligent and malicious insider threats.

Staffing Shortages and Baseline Rigor

A severe, systemic issue currently impacting the security posture of law enforcement and government agencies is chronic staffing shortages. As agencies struggle continuously to meet their recruiting needs, many have been operationally forced to lower their baseline hiring requirements. While modified requirements may temporarily attract a larger pool of candidates, extensive archival analyses and broad survey data indicate that this practice yields severe, long-term operational and security problems.

In a massive survey encompassing 4,006 agencies—sampling municipal and sheriff departments with over 100 sworn officers, alongside 500 agencies from strata with fewer than 100 sworn officers—researchers analyzed hiring procedures and selection processes. Agencies were randomly assigned to receive materials with either NCITE branding or School of Criminology & Criminal Justice branding to ensure unbiased responses. The results generated a “transparency and rigor score” ranging from 0 to 6, with an average score of 3.49.

The data indicated that inadequate hiring policies and diminished selection procedures correlate with an increased risk of insider threats. When transparency and rigor in hiring are compromised, the baseline technical literacy, situational awareness, and adherence to security protocols among incoming personnel tend to decline.

In a deeply interconnected environment where a single clicked phishing link can compromise a 500-agency communications network, the intellectual caliber and integrity of the human endpoint is just as critical as the configuration of the digital endpoint.

Revolutionizing Compliance Training

To counteract the risks introduced by distributed workforces and fluctuating personnel standards, organizations must completely overhaul their approach to compliance and security awareness. Traditional cybersecurity compliance training has historically proven highly ineffective. It is frequently perceived by employees as a tedious, “check-the-box” exercise completely disconnected from their daily, real-world job responsibilities.

The severe limitations of traditional training methods were acutely experienced by a global advertising and media company managing operations across 84 countries, comprising more than 500 disparate agencies and teams. Ensuring that this massively distributed, culturally diverse workforce adhered to strict cybersecurity protocols and global regulatory requirements for handling sensitive information was an immense challenge. A monolithic, one-size-fits-all approach failed to resonate, resulting in extremely poor engagement and low knowledge retention.

To solve this systemic vulnerability, the organization partnered with instructional design experts (TrainingPros) to deploy a scalable, dynamic compliance program integrated seamlessly into their enterprise Learning Management System (LMS). The solution expertly leveraged native automation tools to handle massive enrollment, tracking, and reporting tasks across regions. More importantly, the curriculum was completely redesigned. It featured compelling storytelling, highly interactive compliance modules, and, crucially, role-specific, job-based scenarios. By making the training directly applicable to daily tasks, the program ensured widespread workforce adoption and successfully reinforced the critical cybersecurity behaviors necessary to protect sensitive data across the sprawling 500-agency network.

The Artificial Intelligence Frontier

The human element is currently colliding violently with the rapid emergence of Artificial Intelligence (AI). Generative AI and Large Language Models (LLMs) offer unprecedented capabilities for automating workflows, synthesizing complex intelligence, and drastically improving public services. A comprehensive Government Accountability Office (GAO) study found that government agencies are actively and aggressively integrating AI across numerous critical sectors, including agriculture, financial services, healthcare, internal management, national security, law enforcement, science, telecommunications, and transportation.

The scale of this technological adoption is substantial. Across the agencies studied, more than 500 AI use cases were in the planning phase and over 200 were already running in live production environments. However, the integration of LLMs introduces profound new web security and privacy risks. Prompt injection attacks, data poisoning, and the inadvertent exposure of classified information or PII through internal LLM inputs represent critical, emerging vulnerabilities.

Broad Executive Orders provide high-level frameworks, but they do not resolve the granular technical challenges facing IT administrators. As Generative AI development continues over the coming years, internal agency policies and localized guidelines for handling Gen AI tools will likely move faster than formal government regulation. Agencies must establish clear, enforceable rules on which internal data may be processed by external, cloud-hosted AI models in order to prevent large-scale data exfiltration.
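One way to make such rules enforceable is a deny-by-default gate that checks every outbound prompt before it leaves the agency boundary. The sketch below is illustrative only: the sensitivity labels, the destination names (`external_llm`, `agency_hosted_llm`), and the single SSN-shaped regular expression are hypothetical assumptions rather than any agency standard, and real PII detection requires far more than one pattern.

```python
import re
from dataclasses import dataclass
from enum import IntEnum


class Sensitivity(IntEnum):
    # Hypothetical ordered labels; real agencies use their own marking schemes.
    PUBLIC = 0
    INTERNAL = 1
    CUI = 2          # Controlled Unclassified Information
    CLASSIFIED = 3


# Hypothetical policy: the highest label each destination may receive.
MAX_LABEL_BY_DESTINATION = {
    "external_llm": Sensitivity.PUBLIC,
    "agency_hosted_llm": Sensitivity.INTERNAL,
}

# Illustrative pattern only (US SSN shape); not a complete PII detector.
SSN_PATTERN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


@dataclass
class GateDecision:
    allowed: bool
    reason: str


def check_outbound(text: str, label: Sensitivity, destination: str) -> GateDecision:
    """Deny by default: unknown destinations and over-labelled data are blocked."""
    ceiling = MAX_LABEL_BY_DESTINATION.get(destination)
    if ceiling is None:
        return GateDecision(False, f"unknown destination {destination!r}")
    if label > ceiling:
        return GateDecision(False, f"{label.name} exceeds {ceiling.name} for {destination}")
    if SSN_PATTERN.search(text):
        return GateDecision(False, "text contains an SSN-like pattern")
    return GateDecision(True, "ok")
```

For example, `check_outbound("Case notes", Sensitivity.CUI, "external_llm")` is refused because CUI sits above the PUBLIC ceiling assigned to the external model. Keeping the labels ordered (`IntEnum`) lets the whole policy be expressed as one ceiling per destination.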

Regulatory Governance, Information Sharing, and Framework Compliance

The immense technological and human complexities of managing remote IT infrastructure necessitate rigid adherence to federal guidelines, robust oversight committees, and highly structured risk management frameworks. Cybersecurity cannot be ad hoc or reactive; it must be deeply and legally embedded into the organizational architecture.

Federal Oversight and Information Dissemination

At the federal level, securing the infrastructure of hundreds of interdependent agencies requires extensive coordination and continuous intelligence sharing. Oversight groups, such as the State, Local, Tribal, and Private Sector Policy Advisory Committee (SLTPS-PAC), work to integrate intelligence and standardize security practices. Efforts continue to bring senior members of the National Security Council (NSC) and the Cybersecurity and Infrastructure Security Agency (CISA) into these advisory roles, although acute geopolitical crises frequently consume federal bandwidth and delay structural progress.

Programs like Sentry are vital mechanisms for this coordination. The Sentry program currently reaches between 400 and 500 agencies, involving over 800 individual participants. The operational philosophy of these programs relies heavily on a cascading, multiplier information-sharing model. Once actionable cyber intelligence or updated security protocols are distributed to a primary agency or hub (such as the NYPD or a regional DHS Fusion Center), it is implicitly understood and expected that the information will be rapidly disseminated internally and cascaded down to local partners and the broader law enforcement community.
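The cascading model behaves like a breadth-first traversal of a "who forwards to whom" graph. The sketch below assumes a hypothetical forwarding map (every organization name is a placeholder, not a real Sentry participant) and reports how many hops a bulletin needs to reach each partner. An organization that does not forward simply has no outgoing edges, which is where the cascade stops.

```python
from collections import deque


def cascade_reach(graph: dict[str, list[str]], origin: str) -> dict[str, int]:
    """Map each reachable organization to its hop count from the origin.

    The graph is a hypothetical "who forwards to whom" map. An organization
    listed under several hubs is counted once, at its shortest hop distance.
    """
    hops = {origin: 0}
    queue = deque([origin])
    while queue:
        org = queue.popleft()
        for partner in graph.get(org, []):
            if partner not in hops:
                hops[partner] = hops[org] + 1
                queue.append(partner)
    return hops


# Hypothetical forwarding relationships for illustration only.
sharing_graph = {
    "sentry_program": ["hub_a", "hub_b"],
    "hub_a": ["county_sheriff", "city_pd"],
    "hub_b": ["city_pd", "transit_police"],
    "city_pd": ["campus_police"],
}
reach = cascade_reach(sharing_graph, "sentry_program")
```

In this example the two hubs pass the bulletin to four further organizations, and `city_pd` is counted once even though both hubs forward to it. Removing a hub's outgoing edges shows how a single non-forwarding node cuts off everything downstream of it.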

Risk Management and Enterprise Architecture

The foundational document governing federal information security policy is the Office of Management and Budget (OMB) Circular A-130. This directive requires agencies to manage risk comprehensively by considering information security, privacy, records management, public transparency, and supply chain security issues throughout the entire system development life cycle.

A critical component of OMB A-130 is the requirement that agencies develop a comprehensive Enterprise Architecture (EA). The EA must describe the baseline architecture, outline the target architecture, and present a fully funded transition plan. This process forces agencies to map their current state accurately, align business and technology resources to achieve strategic outcomes, eliminate wasteful duplication, and explicitly prioritize the upgrade, replacement, or retirement of legacy information systems that can no longer be appropriately secured. Agencies are also directed to consult and implement the technical guidelines established by the National Institute of Standards and Technology (NIST), specifically the Federal Information Processing Standards (FIPS) and the NIST Special Publication (SP) 500, 800, and 1800 series.
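The baseline-to-target exercise can be made concrete as a triage over a system inventory. The following sketch is a hypothetical encoding: the inventory fields (`mission_required`, `vendor_supported`, `meets_security_baseline`), the system names, and the decision order are assumptions chosen for illustration, since A-130 requires agencies to prioritize upgrade, replacement, or retirement but does not prescribe this particular rule.

```python
from dataclasses import dataclass
from enum import Enum


class Disposition(Enum):
    KEEP = "keep"
    UPGRADE = "upgrade"
    REPLACE = "replace"
    RETIRE = "retire"


@dataclass
class SystemRecord:
    # Hypothetical inventory fields for one baseline-architecture entry.
    name: str
    mission_required: bool
    vendor_supported: bool
    meets_security_baseline: bool


def disposition(system: SystemRecord) -> Disposition:
    """Illustrative triage rule; one possible ordering, not the A-130 text."""
    if not system.mission_required:
        return Disposition.RETIRE    # no mission need: drop from the target architecture
    if not system.vendor_supported:
        return Disposition.REPLACE   # vendor no longer supports it, so it cannot be kept patched
    if not system.meets_security_baseline:
        return Disposition.UPGRADE   # supported, but below the required controls
    return Disposition.KEEP


def transition_plan(baseline: list[SystemRecord]) -> dict[Disposition, list[str]]:
    """Group baseline systems by disposition to seed the transition plan."""
    plan: dict[Disposition, list[str]] = {d: [] for d in Disposition}
    for system in baseline:
        plan[disposition(system)].append(system.name)
    return plan


# Hypothetical baseline inventory.
baseline = [
    SystemRecord("records_portal", True, True, True),
    SystemRecord("legacy_payroll", True, False, False),
    SystemRecord("fax_gateway", False, True, False),
    SystemRecord("case_mgmt", True, True, False),
]
plan = transition_plan(baseline)
```

Here `legacy_payroll` lands in the replacement bucket and `fax_gateway` in the retirement bucket. Checking mission need first means an unneeded system is retired rather than spending money to replace or upgrade it.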

Privacy Assessments, Background Checks, and EEO Compliance

Security policy extends into privacy management and human resources protocols. Under the Criminal Justice Information Services (CJIS) Security Policy, agencies must perform documented privacy and security risk assessments that address records retention. Decisions about how long sensitive records are kept must rest on a formal, risk-based decision process rather than administrative convenience. Stringent requirements also apply to Cloud Service Providers (CSPs) handling this data, requiring verifiers to conduct state and national fingerprint-based record checks consistent with 5 CFR 731.106 and Office of Personnel Management (OPM) guidelines.

Organizational health is closely tied to security culture and is monitored through regular reporting mechanisms, such as the MD-715 “State of the Agency” reports (prepared under EEOC Management Directive 715) used by the Department of the Navy (SECNAV) and the General Services Administration (GSA). These reports require senior management officials to brief the status of barrier analysis processes. They also demand strict accountability, including 100% timely completion of mandatory training on anti-harassment, reasonable accommodations, alternative dispute resolution, and Equal Employment Opportunity (EEO) procedures.

The GSA, for example, uses segmented analytics from the Federal Employee Viewpoint Survey (FEVS) and generates quarterly Heads of Services and Staff Offices (HSSO) snapshots to monitor complaint activity. Through customer engagement meetings and targeted supplemental training, the GSA reduced reported compliance deficiencies from 33 in FY 2022 to 10 in FY 2024, a reduction of roughly 70%. By maintaining a healthy, legally compliant, and engaged organizational culture, agencies indirectly but substantially strengthen their cybersecurity posture. Disengaged, poorly trained, or highly stressed employees are widely regarded as significant insider-threat risks and remain among the most susceptible targets for sophisticated social engineering campaigns.

Synthesized Insights: The Interconnected Vulnerability Matrix

Analyzing the operational modalities, technical architectures, and human capital structures of over 500 agencies reveals a deep structural interconnectivity that defines the modern state of remote IT infrastructure. Vulnerabilities are rarely isolated; they cascade through shared services, ubiquitous APIs, and multi-tenant cloud environments.

First, physical infrastructure and digital risk are now tightly coupled.

Large systems like the WyoLink communication network or New Jersey’s extensive emergency services network show that securing physical assets, such as police cruisers, EMS biometric sensors, and variable speed traffic signs, now requires enterprise-grade IT security. When physical infrastructure becomes “smart” and interconnected, it also becomes a potential vector for serious digital intrusion. Patching a Windows server in a remote dispatch center is no longer just an IT task; it is a life-safety requirement.

Second, the digital supply chain is the Achilles’ heel of the 500-agency ecosystem. Heavy reliance on centralized vendor-hosted databases such as VitalChek for birth records, or on citation processors such as Phoenix Group for financial transactions, means that a single municipal agency’s security posture depends heavily on the rigor of its third-party providers. The SolarWinds and Kaseya breaches demonstrated the resulting paradigm: advanced threat actors no longer need to breach 500 agencies individually when they can breach the single trusted software provider that serves all of them. Consequently, third-party risk management and the enforcement of strong contractual security requirements are now as critical as firewall configuration.
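A first step in third-party risk management is measuring concentration: inverting each agency's vendor list shows how many agencies a single vendor compromise would reach. The sketch below uses hypothetical agency and vendor names and is not drawn from any real dependency data; the "blast radius" here is simply the count of dependent agencies, ignoring differences in data sensitivity.

```python
from collections import defaultdict


def vendor_exposure(dependencies: dict[str, set[str]]) -> list[tuple[str, int]]:
    """Invert agency -> vendors and rank vendors by how many agencies depend on them.

    Ties are broken alphabetically so the ranking is deterministic.
    """
    agencies_by_vendor: dict[str, set[str]] = defaultdict(set)
    for agency, vendors in dependencies.items():
        for vendor in vendors:
            agencies_by_vendor[vendor].add(agency)
    return sorted(
        ((vendor, len(agencies)) for vendor, agencies in agencies_by_vendor.items()),
        key=lambda item: (-item[1], item[0]),
    )


# Hypothetical dependency map for illustration only.
dependencies = {
    "county_clerk": {"records_vendor", "rmm_tool"},
    "city_court": {"citation_processor", "rmm_tool"},
    "health_dept": {"records_vendor", "rmm_tool"},
    "parks_dept": {"payments_vendor"},
}
ranking = vendor_exposure(dependencies)
```

In this toy map the shared remote-management tool (`rmm_tool`) ranks first with three dependent agencies, the SolarWinds/Kaseya pattern in miniature, which makes it the natural first target for contractual security requirements and audits.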

Third, the human element remains caught between two opposing forces: the drive for rapid technological adoption (evidenced by the 500 agencies currently planning AI integration) and diminishing baseline skills caused by chronic staffing shortages and lowered hiring rigor. This tension is best addressed through advanced, role-specific, and automated training programs that raise the workforce's baseline technical competency to match the complexity of the tools it must now operate remotely.

Conclusion

The state of remote IT infrastructure across government, critical infrastructure, and enterprise agencies is defined by the permanent dissolution of the traditional network perimeter. The normalization of hybrid work has scattered millions of endpoints, requiring a strategic pivot away from static perimeter defense toward intelligent, cloud-native network monitoring, strict identity verification, and automated behavioral anomaly detection.

The analysis of 500-agency cohorts, ranging from vital records management and state emergency communication networks to global media compliance ecosystems and non-profit youth organizations, shows that operational success now depends heavily on secure, well-segmented cloud architectures and native Web Application Firewalls. However, the transition to API-driven, cloud-native environments has substantially expanded the digital attack surface. It has created an interconnected landscape primed for supply chain compromises, ransomware attacks, and advanced persistent threats targeting the backbone of civic infrastructure.

To achieve operational resilience, agencies must stop viewing cybersecurity as preparation for a single acute, catastrophic event. Instead, they must manage digital security as a chronic, systemic condition that requires continuous hygiene. This means verifiable adherence to federal frameworks like OMB A-130, the retirement of legacy systems that can no longer be secured, continuous vetting of all third-party vendors, and engaging, role-specific compliance training to mitigate insider threats. Ultimately, securing the remote IT infrastructure of the modern 500-agency ecosystem is no longer solely a technical engineering endeavor; it is a continuous exercise in enterprise architecture, human capital management, and organizational evolution.