Self-Host Your Marketing Stack: Open Source MarTech for Agencies

Self-Hosting the Modern Marketing Stack: A Comprehensive Linux Environment Setup and Architecture Guide for Digital Agencies

The digital marketing landscape is currently undergoing a profound architectural and philosophical shift. For digital agencies tasked with managing complex, multi-layered client portfolios, the traditional reliance on proprietary Software-as-a-Service (SaaS) platforms presents escalating challenges regarding data sovereignty, vendor lock-in, and spiraling subscription costs. A sovereignty-first marketing stack represents a paradigm shift from closed ecosystems to modular, open-source architectures deployed on self-hosted infrastructure. This exhaustive technical report delineates the architecture, deployment optimization, security hardening, and ongoing maintenance of a self-hosted, multi-tenant marketing technology (MarTech) stack on a Linux environment, tailored specifically for the intensive operational demands of digital agencies.

Data sovereignty is a strategic priority under regulatory frameworks such as the General Data Protection Regulation (GDPR) and the California Consumer Privacy Act (CCPA). A sovereign marketing stack is fundamentally about operational control, privacy compliance, and architectural agility. Digital agencies routinely process large volumes of personally identifiable information (PII) across multiple jurisdictions. Self-hosting marketing infrastructure keeps this sensitive data on servers the agency chooses, whether private on-premise hardware or region-restricted cloud providers. This reduces the legal complexity of cross-border data transfers inherent in multi-tenant SaaS models and lets the agency show clients exactly where their data is stored and who can access it.

Furthermore, proprietary marketing suites operate as monolithic structures designed to keep the user within a single billing ecosystem. In contrast, an open-source, containerized stack allows for a modular, composable architecture where discrete components are connected via open Application Programming Interfaces (APIs) and standard protocols. This modularity permits a digital agency to orchestrate specialized, best-in-class applications rather than compromising with a generalized suite that may excel in email marketing but fail in complex data visualization. By migrating from platforms like HubSpot or Salesforce Marketing Cloud to equivalent open-source solutions, agencies can scale with their own infrastructure rather than absorbing the per-contact or per-execution pricing typical of commercial marketing automation platforms.

The Sovereign MarTech Ecosystem Versus Proprietary Monoliths

To construct a viable alternative to enterprise SaaS offerings, the chosen open-source components must not only reach feature parity with their commercial counterparts but also offer superior integration capabilities. In 2025, the marketing technology landscape is dominated by a few key players, including HubSpot for all-in-one automation, ActiveCampaign for customer journey logic, and Salesforce Marketing Cloud for enterprise orchestration. However, these platforms tend to keep data inside a “black box,” limiting portability and visibility.

The transition to a self-hosted architecture necessitates substituting these proprietary giants with robust open-source alternatives. The self-hosted stack embraces a philosophy where data interoperability is the norm, enabling agencies to swap out tools dynamically as market conditions or client requirements evolve.

| Functional Area | Dominant Proprietary Solutions | Recommended Open-Source Component | Core Capabilities and Architectural Advantages |
|---|---|---|---|
| Marketing Automation & Email | HubSpot, Marketo, ActiveCampaign, Mailchimp | Mautic | Facilitates multi-channel campaigns, predictive lead scoring, automated email sequencing, and deep segment building. Crucially, it provides native tools for GDPR compliance and complete data ownership without tier-based contact limits. |
| Workflow Orchestration & Integration | Zapier, Make.com, Workato | n8n | Offers node-based visual workflow automation, complex API integrations, and intelligent webhook handling. Its architecture supports “Queue Mode” utilizing Redis and PostgreSQL for horizontal scaling across massive execution volumes. |
| Web Analytics & Telemetry | Google Analytics 4, Adobe Analytics | Matomo | Delivers real-time traffic analysis, session tracking, heatmaps, and funnel optimization. It guarantees total data ownership, bypasses ad-blocker limitations when served from a first-party domain, and allows raw data SQL access for bespoke BI reporting. |
| Customer Relationship Management (CRM) | Salesforce, Zoho CRM, Pipedrive | EspoCRM | Manages sales pipelines, customer entity tracking, and lead assignments. It features a lightweight API-first architecture and utilizes real-time WebSocket notifications for immediate sales agent alerts. |

The synthesis of these tools creates a comprehensive MarTech ecosystem capable of supporting agile marketing and sales operations. By leveraging a unified customer data approach across these interconnected, self-hosted applications, agencies can execute highly personalized, cross-channel lifecycle marketing campaigns without the latency or sync errors common when bridging disparate commercial SaaS platforms.

Hardware Architecture and Capacity Planning for Multi-Tenant Environments

Deploying a multi-tenant environment for a digital agency requires strict resource allocation and capacity planning to ensure that intense background operations do not degrade the performance of concurrent client services. For example, executing a 250,000-recipient email broadcast in Mautic or parsing massive, multi-gigabyte analytics payloads in Matomo generates significant CPU and memory pressure.

Virtual Private Servers (VPS) or dedicated bare-metal instances offer predictable CPU quotas and dedicated Disk I/O, which are absolutely critical for database-heavy applications. Unlike shared hosting environments that suffer from “noisy-neighbor” syndromes, a properly provisioned VPS isolates resource usage per instance. While homelab enthusiasts might run a basic Linux stack on an older 6-core Intel i5 processor with 8GB of RAM for personal projects, a production-grade agency environment mandates significantly higher specifications.

A containerized MarTech stack relies heavily on relational databases (MySQL, MariaDB, PostgreSQL), in-memory data structures (Redis), and background task runners (PHP-FPM workers, Node.js execution threads). For a digital agency hosting multiple client instances, the underlying hardware must be robust, scalable, and highly available.

| Resource Type | Minimum Specification (Single Small Client / Staging) | Production Recommendation (Multi-Tenant Agency Infrastructure) | Architectural Justification |
|---|---|---|---|
| Compute (vCPU) | 4 Cores | 8 - 16 Cores | Essential for managing parallel workflow executions in n8n and parallel email spooling processes in Mautic. Insufficient CPU threads will cause workflow queues to backlog severely. |
| Memory (RAM) | 16 GB | 32 - 64 GB | Relational databases require aggressive caching (e.g., InnoDB buffer pools) to prevent disk reads. Redis session management and concurrent PHP-FPM worker processes demand extensive, fast RAM to avoid swapping. |
| Storage | 100 GB NVMe SSD | 500 GB - 2 TB NVMe SSD | High Input/Output Operations Per Second (IOPS) are critical for database write speeds during tracking events. Ample storage is required for persistent Docker volumes, application logs, and database files. |
| Network | 1 Gbps | 1 Gbps - 10 Gbps (Low Latency) | Crucial for handling massive influxes of external API calls, webhook ingestions, and database clustering synchronization (if utilizing multiple database nodes). |

It is a common misconception that all applications can be scaled horizontally with ease. As infrastructure demands grow, the strategy diverges based on the application’s native architecture. For instance, attempting to horizontally scale Mautic across multiple application servers is notoriously complex due to its file-caching mechanisms and background job processing; scaling Mautic is generally achieved more reliably through vertical scaling (adding more CPU and RAM to a single large instance). Conversely, n8n is explicitly designed for horizontal scaling via its Queue Mode, allowing an agency to spin up dozens of identical worker containers across a Docker Swarm or Kubernetes cluster to handle workflow execution spikes.

Operating System Hardening: Securing Ubuntu 24.04 LTS

The foundation of the entire architecture is the underlying host operating system. Ubuntu 24.04 Long Term Support (LTS) is the current industry standard for server deployments due to its rock-solid stability, extensive package repositories, and predictable five-year security support lifecycle. However, a default installation of any Linux distribution presents an unacceptable attack surface for an internet-facing production server. System hardening must be executed meticulously prior to installing any application stack.

Foundational Security Configurations and Access Control

The initial phase of operating system hardening involves minimizing access vectors, enforcing strict authentication protocols, and securing the network layer against automated scanning and exploitation frameworks.

The most basic, yet frequently overlooked, hardening measure is strict patch management. The system must be fully updated through the package manager, and automatic security updates (the unattended-upgrades package on Ubuntu) should be configured to patch known vulnerabilities without manual intervention. Following package updates, Secure Shell (SSH) access must be secured. SSH is one of the most common attack vectors on internet-facing servers, constantly probed by automated botnets attempting brute-force and credential-stuffing logins. Moving SSH from the default port 22 to a non-standard high port reduces noise from rudimentary automated scanners, although it is not a security control in itself. Password-based authentication must be disabled within the /etc/ssh/sshd_config file (PasswordAuthentication no), mandating the use of cryptographic key pairs, such as Ed25519 or RSA, for all remote access. Direct root login over SSH must also be explicitly denied (PermitRootLogin no), requiring administrators to log in as an unprivileged user and escalate privileges via sudo.
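The SSH settings above can be checked mechanically. The following is a minimal sketch, not a full sshd_config parser: it ignores Match blocks and Include directives, and the function names are illustrative. It relies on the documented sshd behaviour that keywords are case-insensitive and that the first value obtained for a keyword wins.

```python
from typing import Dict, List

# Directives the text calls for; the "Port" rule is handled separately.
REQUIRED = {"passwordauthentication": "no", "permitrootlogin": "no"}


def parse_sshd_config(text: str) -> Dict[str, str]:
    """Return the first value seen for each directive (sshd keeps the first)."""
    settings: Dict[str, str] = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue  # skip blanks and comments
        parts = line.split(None, 1)
        if len(parts) == 2:
            settings.setdefault(parts[0].lower(), parts[1].strip())
    return settings


def audit_sshd_config(text: str) -> List[str]:
    """List human-readable findings; an empty list means all checks passed."""
    settings = parse_sshd_config(text)
    findings = []
    for key, wanted in REQUIRED.items():
        actual = settings.get(key)
        if actual is None or actual.lower() != wanted:
            findings.append(f"{key} should be '{wanted}' (found: {actual})")
    if settings.get("port", "22") == "22":
        findings.append("port is still the default 22")
    return findings
```

In practice the input would come from reading /etc/ssh/sshd_config on the host; treating an unset directive as a finding is a deliberately strict choice, since distribution defaults vary.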

Network traffic must be controlled through a strict firewall policy. The Uncomplicated Firewall (UFW) should be enabled with a default deny-all incoming policy. Administrators should exclusively permit traffic on port 80 (HTTP), port 443 (HTTPS), and the custom SSH port. Kernel parameters must also be tuned via sysctl to protect the host against TCP SYN floods, IP spoofing, and routing redirects. Finally, Ubuntu 24.04 ships with AppArmor, a Mandatory Access Control (MAC) system, enabled by default. It is imperative to verify that AppArmor profiles for critical system services are active and set to enforce mode, thereby restricting the capabilities of compromised processes.
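As a rough illustration of the firewall and kernel policy described above, the sketch below generates the UFW commands and a sysctl drop-in file rather than applying them. The helper names and the choice of sysctl keys are assumptions made for this example; the keys themselves are standard Linux networking parameters, but the exact set should be validated against the workload before use.

```python
from typing import Dict, List


def ufw_commands(ssh_port: int) -> List[str]:
    """Build the UFW command sequence: deny inbound, allow web and SSH only."""
    if not 1 <= ssh_port <= 65535:
        raise ValueError("ssh_port must be a valid TCP port")
    return [
        "ufw default deny incoming",
        "ufw default allow outgoing",
        "ufw allow 80/tcp",
        "ufw allow 443/tcp",
        f"ufw allow {ssh_port}/tcp",
        "ufw --force enable",  # --force skips the interactive confirmation
    ]


# Illustrative selection covering SYN floods, spoofing, and redirects.
SYSCTL_HARDENING: Dict[str, int] = {
    "net.ipv4.tcp_syncookies": 1,             # SYN flood protection
    "net.ipv4.conf.all.rp_filter": 1,         # reverse-path filter (anti-spoofing)
    "net.ipv4.conf.default.rp_filter": 1,
    "net.ipv4.conf.all.accept_redirects": 0,  # ignore ICMP redirects
    "net.ipv4.conf.all.send_redirects": 0,
    "net.ipv4.conf.all.accept_source_route": 0,
}


def render_sysctl_conf(settings: Dict[str, int]) -> str:
    """Render settings in the 'key = value' form used under /etc/sysctl.d/."""
    return "".join(f"{key} = {value}\n" for key, value in sorted(settings.items()))
```

One caveat worth keeping in mind: ports published by Docker are inserted into the firewall by the Docker daemon itself and are not filtered by these UFW rules, which is another reason to avoid publishing database ports.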

Active Threat Mitigation: The Superiority of CrowdSec over Fail2Ban

For decades, Fail2Ban has been the standard utility for parsing system logs and banning malicious IP addresses via iptables. However, modern containerized architectures require a significantly more sophisticated approach. Fail2Ban was designed primarily to protect against simple brute-force attacks in isolated environments. In contrast, CrowdSec has emerged as the superior Intrusion Prevention System (IPS) for Docker-based agency environments.

CrowdSec is a collaborative, behavior-based security engine. It fundamentally decouples the detection engine from the remediation component (known as the “bouncer”). This decoupled architecture allows the CrowdSec agent to parse logs from an array of sources—including Nginx access logs, SSH authentication logs, and specific application logs—and subsequently share threat intelligence across a global network of users. This crowd-sourced threat intelligence enables CrowdSec to proactively block IP addresses known to harbor malicious intent before they even attempt to interact with the agency’s server infrastructure.

In an Ubuntu 24.04 environment running a Dockerized stack, the optimal implementation utilizes the crowdsec-firewall-bouncer-nftables package. Because the Docker daemon dynamically manipulates legacy iptables rules to manage complex container networking, older firewall bouncers or traditional Fail2Ban setups often conflict, leading to networking failures or bypassed security rules. Implementing the modern nftables bouncer ensures that IP bans occur directly at the Linux kernel firewall level without disrupting Docker’s internal routing tables.

Deployment involves installing the Security Engine from the official CrowdSec repository, installing the nftables bouncer, and then adding detection content from the CrowdSec Hub via the cscli command-line tool. For a marketing stack, collections such as crowdsecurity/linux and crowdsecurity/nginx, together with scenarios such as crowdsecurity/http-probing, tell the engine which log patterns indicate a coordinated attack. Furthermore, because CrowdSec can be configured to protect outgoing traffic as well, it serves as a critical fail-safe; if a vulnerability allows a botnet payload to be installed within a Docker container, CrowdSec can prevent that container from establishing outgoing command-and-control (C2) communications.

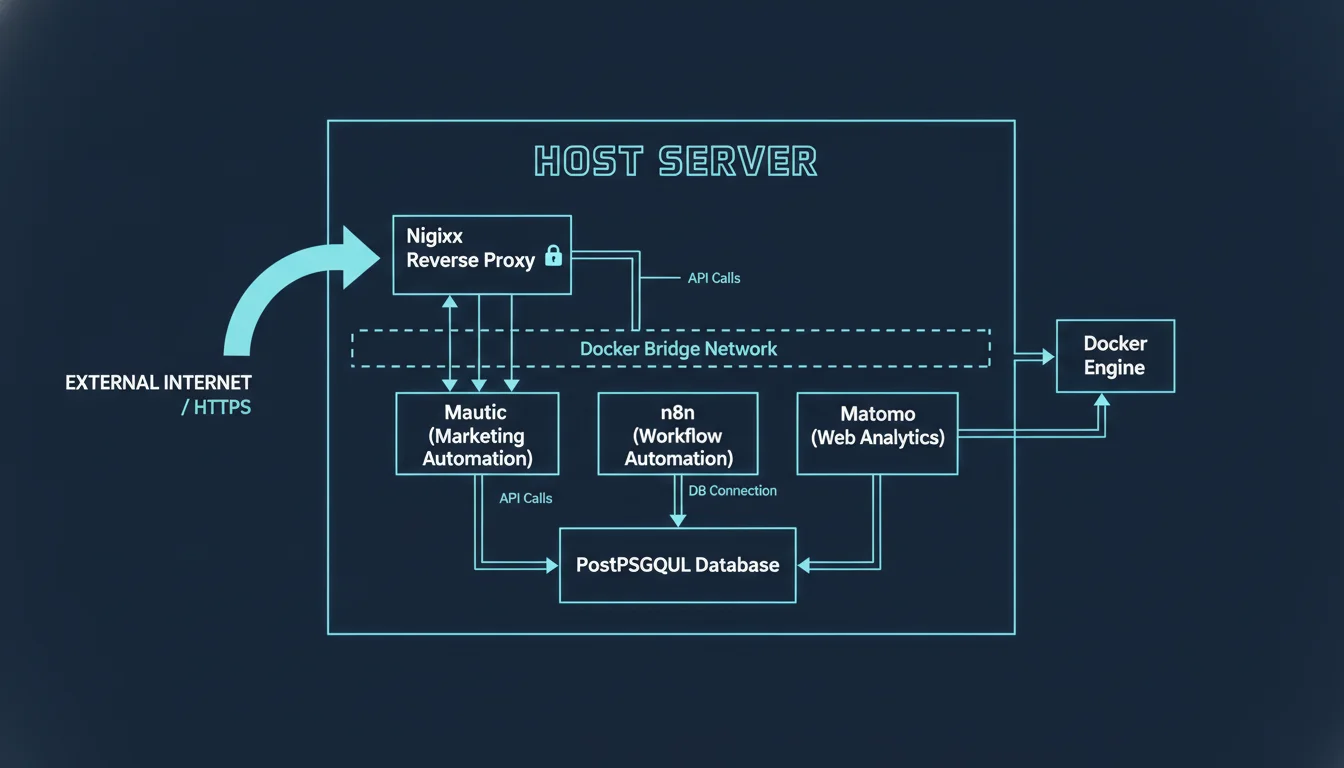

Containerized Deployment Architecture and Network Isolation

Deploying multiple complex applications—such as Mautic, n8n, and Matomo—directly onto the host operating system creates an unmanageable web of dependency conflicts, fragile upgrade paths, and significant security risks. The modern agency stack is entirely containerized using Docker Engine and Docker Compose.

There is a growing trend among novice system administrators to utilize bloated, high-privilege hosting panels like CyberPanel or CloudPanel to manage multiple WordPress or application sites. However, for a high-security agency environment, the “panel-less” route provides maximum isolation and minimizes the attack surface.

The Principle of Strict Container Isolation

Docker encapsulates each application, along with its specific runtime environment, required libraries, and system tools, into isolated units. In a multi-tenant agency scenario, strict Docker isolation prevents a compromised client instance from pivoting laterally to affect other clients.

For optimal security posture, database containers (such as MariaDB for Mautic or PostgreSQL for n8n) must never have their ports bound to the host’s external network interfaces (e.g., exposing port 3306 to the public internet). Instead, they should exist strictly on internal Docker bridge networks, accessible exclusively by the specific application containers that require them. Additionally, Docker Compose allows administrators to enforce hard limits on CPU usage and memory consumption per service. If a poorly configured regular expression in an n8n workflow causes an infinite loop, or a massive segment generation build in Mautic causes the PHP container to spike, these hard resource limits ensure the process is throttled or killed before it can monopolize the host VPS and cause a systemic outage.
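The isolation rules in this section lend themselves to an automated check before a stack is deployed. The sketch below assumes the Compose file has already been loaded into a Python dictionary (for example with a YAML parser) and that limits are declared under deploy.resources.limits; the service names, network names, and the image-name heuristic are all hypothetical.

```python
from typing import Any, Dict, List

# Crude heuristic: treat services whose image name contains one of these
# substrings as datastores that must stay on internal networks.
DATASTORE_HINTS = ("mariadb", "mysql", "postgres", "redis")


def lint_compose(compose: Dict[str, Any]) -> List[str]:
    """Flag datastores that publish host ports and services without limits."""
    problems = []
    for name, svc in compose.get("services", {}).items():
        image = str(svc.get("image", ""))
        if any(hint in image for hint in DATASTORE_HINTS) and svc.get("ports"):
            problems.append(f"{name}: datastore publishes host ports {svc['ports']}")
        limits = svc.get("deploy", {}).get("resources", {}).get("limits", {})
        if "cpus" not in limits or "memory" not in limits:
            problems.append(f"{name}: missing CPU/memory limits")
    return problems


LIMITS = {"resources": {"limits": {"cpus": "2.0", "memory": "2g"}}}

# Hypothetical single-client stack: only the app joins the proxy-facing network.
EXAMPLE = {
    "services": {
        "mautic": {
            "image": "mautic/mautic:latest",
            "networks": ["edge", "mautic_backend"],
            "deploy": LIMITS,
        },
        "mautic_db": {
            "image": "mariadb:11",
            "networks": ["mautic_backend"],  # no "ports" key: never published
            "deploy": LIMITS,
        },
    },
    "networks": {"edge": {}, "mautic_backend": {"internal": True}},
}
```

Calling lint_compose(EXAMPLE) returns an empty list; adding "ports": ["3306:3306"] to mautic_db would produce a finding. Marking the backend network as internal additionally prevents the database container from reaching the internet at all.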

Reverse Proxy Engineering and SSL Termination

To efficiently route external web traffic to the appropriate internal Docker containers, a reverse proxy layer is mandatory. Nginx Proxy Manager (NPM) provides a highly streamlined, interface-driven approach to routing traffic, managing Let’s Encrypt SSL certificate issuance and renewal, and applying Access Control Lists (ACLs) to restricted endpoints. NPM acts as the sole entry point for HTTP and HTTPS traffic, terminating the SSL connection at the edge and proxying the unencrypted request via internal Docker networks to the target application container.

While Nginx Proxy Manager is excellent for visual management, more advanced infrastructures—particularly those embracing declarative Infrastructure as Code (IaC)—often migrate to Traefik or Caddy. These modern edge routers offer dynamic container discovery, automatically detecting new Docker containers and provisioning SSL certificates based on labels placed directly within the docker-compose.yml file, eliminating the need to manually configure proxy hosts.

Deploying the Core Stack Applications

The deployment and orchestration of each application within the MarTech stack require meticulous attention to persistent volume mapping, database tuning, and environment variable configuration to ensure stability under agency-level workloads.

Mautic 5: Enterprise Marketing Automation and Deliverability Engineering

Mautic serves as the core intelligence engine of the open-source marketing stack. It relies on a classic LAMP or LEMP stack architecture, requiring PHP, a robust web server (Apache or Nginx), and a high-performance relational database (Percona Server for MySQL or MariaDB). Utilizing the official Mautic Docker image (mautic/mautic:latest) streamlines the deployment lifecycle by packaging the exact required PHP extensions and ensuring correct file permissions out of the box. In production, pinning the image to a specific Mautic 5 version tag rather than latest keeps upgrades deliberate and reproducible.

Relational Database Optimization for High-Volume Marketing

Mautic is an exceptionally database-intensive application. Every tracking pixel hit on a client’s website, every form submission, and every email open or click creates a distinct database transaction. For large datasets, the default configurations of MariaDB or MySQL are insufficient and will degrade performance severely; the default InnoDB buffer pool, for instance, is only 128 MB. The database engine’s innodb_buffer_pool_size must be tuned to the host server’s available RAM. In dedicated database node setups, allocating 50% to 70% of total memory to the buffer pool is standard practice; on a host shared with application containers, the share must be correspondingly smaller. This tuning keeps the working set of data and indexes in RAM, so that complex segment rebuilds and campaign executions are served from memory rather than from much slower disk reads.
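The sizing rule can be worked through as simple arithmetic. This sketch assumes a dedicated database node and rounds down to a multiple of 128 MiB, the default InnoDB buffer pool chunk size in MySQL; the function names are illustrative.

```python
CHUNK_BYTES = 128 * 1024**2  # default innodb_buffer_pool_chunk_size (128 MiB)


def innodb_buffer_pool_bytes(total_ram_gib: float, fraction: float = 0.6) -> int:
    """Return a buffer pool size in bytes, rounded down to a whole chunk."""
    if not 0 < fraction < 1:
        raise ValueError("fraction must be between 0 and 1")
    raw = int(total_ram_gib * 1024**3 * fraction)
    return raw - raw % CHUNK_BYTES


def as_config_line(size_bytes: int) -> str:
    """Format the value for a my.cnf [mysqld] section, in mebibytes."""
    return f"innodb_buffer_pool_size = {size_bytes // 1024**2}M"
```

For a dedicated 32 GiB database node at 60%, as_config_line(innodb_buffer_pool_bytes(32)) yields "innodb_buffer_pool_size = 19584M", which is 153 chunks of 128 MiB.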

Resolving the SMTP Deliverability Challenge via AWS SES

The single most critical failure point for self-hosted Mautic installations is email deliverability. A poorly configured SMTP relay guarantees that marketing campaigns will be routed directly to recipient spam folders.

While fully self-hosted SMTP solutions like Postal or Mailtrain exist and offer great control for developers, achieving reliable inbox placement for millions of emails without an established IP reputation usually necessitates leveraging a commercial SMTP relay infrastructure.

Amazon Simple Email Service (SES) is universally recognized as the industry standard for high-volume, low-cost transactional and marketing emails, easily capable of handling loads exceeding 250,000 emails monthly. With the release of Mautic 5, the core email transport mechanism transitioned from the deprecated SwiftMailer to the modern Symfony Mailer package, fundamentally altering how Amazon SES is configured.

To configure Mautic 5 with Amazon SES, administrators must deploy the symfony/amazon-mailer package alongside a specialized plugin (such as the highly recommended etailors_amazon_ses plugin, which utilizes the AmazonSesBundle namespace) to handle asynchronous webhooks and automated bounce management. Instead of relying on standard SMTP ports (25, 465, or 587)—which are frequently blocked by VPS hosting providers at the network level to prevent spam abuse—communication occurs directly via the authenticated AWS API. This requires configuring the Data Source Name (DSN) string within Mautic specifically as mautic+ses+api.
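A DSN of this kind is easiest to get right when it is assembled programmatically, because AWS secret keys often contain characters such as "/" and "+" that must be percent-encoded. The sketch below is an assumption-laden illustration: only the mautic+ses+api scheme comes from the text, while the "default" host and the region query option mirror the DSN layout Symfony Mailer documents for its own ses+api transport. The plugin's documentation should be checked for the exact form it accepts.

```python
from urllib.parse import quote


def mautic_ses_dsn(access_key: str, secret_key: str, region: str) -> str:
    """Assemble a DSN string with URL-safe credentials.

    Assumed layout (not confirmed by the source):
    mautic+ses+api://ACCESS_KEY:SECRET_KEY@default?region=REGION
    """
    return (
        "mautic+ses+api://"
        f"{quote(access_key, safe='')}:{quote(secret_key, safe='')}"
        f"@default?region={quote(region, safe='')}"
    )
```

With placeholder credentials, mautic_ses_dsn("AKIAEXAMPLE", "a/b+c", "eu-west-1") produces mautic+ses+api://AKIAEXAMPLE:a%2Fb%2Bc@default?region=eu-west-1. Real keys belong in environment variables or a secret store, not in source control.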

Bounce processing is an absolute necessity; failing to immediately suppress hard bounces will rapidly destroy the agency’s domain sending reputation and result in AWS algorithmically suspending the SES account. The Mautic SES plugin establishes a highly secure callback URL that Amazon Simple Notification Service (SNS) pings instantaneously whenever a bounce or spam complaint occurs. This webhook allows Mautic to instantly update the affected contact’s Do Not Contact (DNC) status, ensuring automated list hygiene without manual intervention. When implementing this, administrators must ensure cache clearance via the Mautic CLI (php bin/console cache:clear) to properly register the new plugins and routing rules.
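To make the callback flow concrete, the sketch below shows how the payload that SNS delivers for an SES bounce or complaint can be reduced to a list of addresses to suppress. It illustrates the notification format only and is not the plugin's code: the function name is hypothetical, and a real endpoint must also verify the SNS message signature and handle the subscription confirmation request.

```python
import json
from typing import List, Tuple


def addresses_to_suppress(sns_body: str) -> List[Tuple[str, str]]:
    """Return (email, reason) pairs for hard bounces and complaints."""
    envelope = json.loads(sns_body)
    if envelope.get("Type") != "Notification":
        return []  # e.g. SubscriptionConfirmation, handled elsewhere
    # SNS wraps the SES event as a JSON string inside "Message".
    message = json.loads(envelope["Message"])
    # Classic notifications use "notificationType"; event publishing
    # through configuration sets uses "eventType".
    kind = message.get("notificationType") or message.get("eventType")
    if kind == "Bounce":
        bounce = message["bounce"]
        if bounce.get("bounceType") != "Permanent":
            return []  # transient (soft) bounces are not suppressed here
        return [(r["emailAddress"], "bounce") for r in bounce["bouncedRecipients"]]
    if kind == "Complaint":
        recipients = message["complaint"]["complainedRecipients"]
        return [(r["emailAddress"], "complaint") for r in recipients]
    return []
```

Each returned address corresponds to a contact whose Do Not Contact status should be set. Ignoring soft bounces is a design choice for this sketch; a production policy might count repeated soft bounces as well.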

n8n: Multi-Tenant Workflow Orchestration

n8n serves as the central nervous system of the agency stack, orchestrating complex data flows between CRM platforms, external lead generation forms, and proprietary APIs. Deploying n8n for an agency presents unique challenges regarding multi-tenancy, data security, and execution scaling.

Scaling with Distributed Queue Mode Architecture

For basic homelab deployments or single-client use cases, n8n runs entirely within a single monolithic Docker container. However, production agency environments handling heavy concurrent Webhook traffic, or complex data transformations involving massive JSON payloads, must operate n8n in its distributed Queue Mode.

Queue mode requires a fundamental architectural shift. It relies on a shared PostgreSQL database to persist workflow definitions and execution histories, and mandates a Redis instance to act as a high-speed message broker. In this distributed topology, a primary n8n instance serves the visual UI and accepts incoming webhook triggers, instantly offloading the actual computational heavy lifting of workflow processing to an array of independent n8n “worker” containers listening to the Redis queue. This horizontal scaling model allows the infrastructure to absorb massive traffic spikes smoothly. Additionally, configuring n8n to utilize external blob storage (like S3) for binary data execution ensures that heavy files (e.g., video processing or large CSV imports) moving through workflows do not bloat the primary PostgreSQL database, preserving optimal query speeds and system responsiveness.
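The topology described above can be sketched as a generated Compose definition. The environment variable names (EXECUTIONS_MODE, QUEUE_BULL_REDIS_HOST, DB_TYPE, and so on) and the worker command are n8n's own; the service names, network name, and image tags are assumptions for illustration, and the fragment omits volumes, the reverse proxy, and S3 binary-data settings.

```python
import json
from typing import Any, Dict

N8N_IMAGE = "n8nio/n8n"  # pin a specific version tag in production


def n8n_queue_stack(worker_count: int) -> Dict[str, Any]:
    """Build a Compose-style dict: one main instance plus N queue workers."""
    if worker_count < 1:
        raise ValueError("queue mode needs at least one worker")
    env = {
        "EXECUTIONS_MODE": "queue",
        "QUEUE_BULL_REDIS_HOST": "redis",
        "QUEUE_BULL_REDIS_PORT": "6379",
        "DB_TYPE": "postgresdb",
        "DB_POSTGRESDB_HOST": "postgres",
        "DB_POSTGRESDB_DATABASE": "n8n",
        "DB_POSTGRESDB_PASSWORD": "${POSTGRES_PASSWORD}",
        # Main and workers must share one key to decrypt stored credentials.
        "N8N_ENCRYPTION_KEY": "${N8N_ENCRYPTION_KEY}",
    }
    backend = ["n8n_backend"]
    services: Dict[str, Any] = {
        "postgres": {
            "image": "postgres:16",
            "environment": {"POSTGRES_DB": "n8n", "POSTGRES_PASSWORD": "${POSTGRES_PASSWORD}"},
            "networks": backend,
        },
        "redis": {"image": "redis:7", "networks": backend},
        "n8n": {  # serves the UI and receives webhooks
            "image": N8N_IMAGE,
            "environment": env,
            "depends_on": ["postgres", "redis"],
            "networks": backend,
        },
    }
    for index in range(1, worker_count + 1):
        services[f"n8n-worker-{index}"] = {
            "image": N8N_IMAGE,
            "command": "worker",  # pulls executions from the Redis queue
            "environment": env,
            "depends_on": ["postgres", "redis"],
            "networks": backend,
        }
    return {"services": services, "networks": {"n8n_backend": {}}}


def render(stack: Dict[str, Any]) -> str:
    """Serialize as JSON, which Compose accepts because JSON is valid YAML."""
    return json.dumps(stack, indent=2)
```

Scaling out then amounts to raising worker_count (or, equivalently, using a single worker service with replicas) while the main instance stays untouched.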

Multi-Tenancy Strategies and Client Billing

Agencies must cryptographically and logically isolate client data. When deploying n8n for a roster of multiple clients, architects must choose between two primary deployment patterns:

- Logical Isolation (The Hybrid Model): A single, massive n8n cluster handles workflows for all clients. Workflows must be meticulously designed to pass a tenant_id context variable through every execution node. While this significantly reduces infrastructure overhead and server costs, it carries a severe, often unacceptable risk of cross-tenant data leakage. A single misconfigured workflow by a junior engineer could inadvertently route Client A’s lead data to Client B’s CRM.

- Physical Isolation (Container-as-a-Service Model): This is the strictly recommended approach for enterprise security and accurate billing transparency. Each client receives a dedicated, walled-off n8n container stack, an independent PostgreSQL schema, and a completely isolated credential store. Agencies utilize container orchestration tools (like Docker Compose templates or Kubernetes Helm charts) to stamp out these identical stacks dynamically upon client onboarding. This inherently isolates the “blast radius” of any security vulnerability, third-party API rate limit ban, or CPU spike to a single client instance.

Operating in a physical isolation model also enables precise client billing based on usage monitoring. By tracking metrics such as execution counts, tasks per workflow, and third-party API call volumes per isolated container, agencies can transition from flat-rate retainers to highly profitable, execution-based or tiered subscription pricing models.
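Stamping out one stack per client, as in the physical isolation model above, mostly comes down to deriving consistent, collision-free names. The sketch below is one hypothetical convention: the tenants/ directory, the n8n-template.yml file name, and the naming scheme are assumptions, while -p, --env-file, and -f are standard docker compose options.

```python
import re
from typing import Any, Dict


def slugify(client_name: str) -> str:
    """Reduce a client name to lowercase letters, digits, and hyphens."""
    slug = re.sub(r"[^a-z0-9]+", "-", client_name.lower()).strip("-")
    if not slug:
        raise ValueError("client name has no usable characters")
    return slug


def tenant_stack(client_name: str) -> Dict[str, Any]:
    """Derive per-tenant names and the command that brings the stack up."""
    slug = slugify(client_name)
    project = f"n8n-{slug}"
    env_file = f"tenants/{slug}.env"  # hypothetical per-client secrets file
    return {
        "project": project,
        "env_file": env_file,
        "database": f"n8n_{slug.replace('-', '_')}",
        "up_command": [
            "docker", "compose",
            "-p", project,            # separate project = separate containers/networks
            "--env-file", env_file,
            "-f", "n8n-template.yml",  # hypothetical shared template
            "up", "-d",
        ],
    }
```

For example, tenant_stack("Acme & Co.") yields the project name n8n-acme-co and the database name n8n_acme_co. Because each Compose project gets its own networks and containers, usage metrics collected per project map directly onto one client for billing.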

Matomo: Privacy-First Analytics at Scale

Matomo provides a privacy-focused alternative to corporate telemetry platforms like Google Analytics 4 and can be configured for GDPR compliance. Because Matomo stores raw visit data on the host server to allow for historical analysis (indefinitely, unless a raw-data retention policy is configured), its database architecture requires careful planning.

For high-traffic agency environments tracking millions of page views across client portfolios, standard dynamic archiving—where Matomo attempts to compute complex reports on-the-fly exactly when a user opens the dashboard—will inevitably crash the PHP-FPM processes and exhaust server RAM. The Docker architecture must be adapted to include a “sidecar” container specifically dedicated to background archiving tasks.

In this multi-container configuration, the primary Matomo web container solely ingests asynchronous tracking requests and serves the static UI. Simultaneously, the headless sidecar container executes continuous cron jobs (core:archive) in the background, pre-computing the complex analytical reports and storing the flattened results in the database. This guarantees a highly responsive dashboard experience for clients, even when querying massive datasets. Furthermore, client-side performance can be highly optimized by caching the matomo.js tracker file using the host-level Nginx proxy and enabling aggressive GZIP compression, which reduces the payload size from approximately 60KB down to a mere 20KB, significantly accelerating page load times across client web properties.

EspoCRM: Modern Customer Entity Management

EspoCRM handles the sales pipelines, opportunity tracking, and customer data entities, serving as the system of record for the agency’s internal operations and client sales teams. Installed via Docker Compose, it requires a web container, a cron daemon container, and a MariaDB or PostgreSQL database container.

A unique architectural requirement for ensuring modern CRM responsiveness is the integration of real-time WebSockets. A conventional request/response PHP application only shows new data when the user reloads the page or the browser polls the server. To overcome this, a dedicated WebSocket container (espocrm-websocket) running a specialized daemon must be deployed alongside the main application, mapping to a distinct internal port (e.g., 8081). The reverse proxy (Nginx Proxy Manager or Traefik) must be explicitly configured to upgrade standard HTTP connections to persistent WebSockets for this specific path. This architecture ensures that sales agents receive push-based notifications of lead status updates, incoming emails, and system events without generating excessive database polling traffic. Furthermore, background tasks such as IMAP email fetching and automated workflow triggers depend on a dedicated cron container running the docker-daemon.sh entrypoint, replacing the need for complex host-level crontab configurations.

Data Resilience: Disaster Recovery Strategies with Restic

Because agency and client data is centralized within this self-hosted stack, a catastrophic server failure, a ransomware encryption event, or a database corruption incident would be an existential threat to the business. Standard whole-server snapshot backups provided by cloud hosts (like DigitalOcean or Hetzner) are insufficient on their own for production databases because they lack application awareness; a snapshot taken while a database is actively writing transactions is at best crash-consistent and can result in a backup that is difficult or impossible to restore cleanly.

A robust, enterprise-grade backup strategy must target the persistent Docker volumes directly and ship encrypted copies offsite to S3-compatible object storage (e.g., AWS S3, Backblaze B2, Google Cloud Storage).

Restic is an exceptionally fast, cryptographically secure, and highly efficient backup program written in Go that utilizes advanced block-level deduplication to minimize storage footprints and drastically reduce upload times. Because managing native Restic commands, repository initializations, and snapshot pruning across dozens of distinct Docker containers is highly prone to human error, Autorestic serves as a vital declarative wrapper.

Implementing Autorestic for Docker Environments

Autorestic utilizes a highly readable .autorestic.yaml configuration file to define data locations, snapshot policies, and external S3 backend destinations.

| Configuration Concept | Function | Benefit |
|---|---|---|
| Locations | Maps host paths (e.g., /var/lib/docker/volumes/) to specific backends. | Allows selective backup of critical data (databases, user uploads) while ignoring ephemeral container data. |
| Backends | Defines the destination, such as an S3 bucket URL; Restic supports S3 natively and can reach other providers through rclone. | Ensures data is stored off-site. Cryptographic keys are enforced here, so the storage provider only ever sees encrypted data. |
| Cron Scheduling | Uses standard cron syntax (e.g., */15 * * * *) within the YAML. | Keeps the backup cadence in the configuration file; a single scheduled invocation of autorestic cron on the host evaluates which locations are due. |

A critical, non-negotiable insight regarding database backups: executing a direct file-level backup of a live, actively writing MySQL or PostgreSQL database directory via Restic risks severe data corruption, as files may change mid-read. While shutting down the database container prior to backup ensures complete consistency, it introduces unacceptable downtime in a 24/7 agency environment.

The superior architectural approach is a meticulously automated two-step process:

- Use a pre-backup bash script triggered by a cron job to execute a logical dump (e.g., mysqldump or pg_dump) of the database into a mapped, persistent volume within the container.

- Configure Autorestic to back up only the resulting, static .sql or .dump file to the remote S3 bucket, completely avoiding the live database files.
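The first step of the two-step process can be sketched as follows. This assumes a database container whose image provides mysqldump and exposes the root password as MYSQL_ROOT_PASSWORD, as the official MySQL image does; MariaDB images may use mariadb-dump and MARIADB_ROOT_PASSWORD instead. The container name and target path are hypothetical, and the dump is written to a host directory that the backup tool is configured to pick up.

```python
import os
import subprocess
from pathlib import Path
from typing import Callable, List


def mysqldump_command(container: str) -> List[str]:
    """Logical dump via docker exec; the password is read inside the container."""
    return [
        "docker", "exec", container, "sh", "-c",
        # --single-transaction gives a consistent InnoDB snapshot without locking
        'exec mysqldump --single-transaction --all-databases '
        '-uroot -p"$MYSQL_ROOT_PASSWORD"',
    ]


def dump_database(container: str, target: Path,
                  run: Callable[..., object] = subprocess.run) -> Path:
    """Write the dump to a temp file, then rename so readers never see a partial file."""
    tmp = target.with_suffix(target.suffix + ".tmp")
    with open(tmp, "wb") as handle:
        run(mysqldump_command(container), stdout=handle, check=True)
    os.replace(tmp, target)  # atomic on the same filesystem
    return target
```

A call such as dump_database("mautic_db", Path("/srv/backups/mautic.sql")) would run before the backup job; if mysqldump fails, check=True raises and the previous good dump is left in place.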

Autorestic also seamlessly manages retention policies via the restic forget command, automatically pruning older snapshots (e.g., configured to keep exactly 7 daily, 4 weekly, and 6 monthly backups) to control accumulating S3 storage costs without compromising historical disaster recovery capabilities.
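A configuration expressing this retention policy might be generated as shown below. This is a sketch based on the Autorestic configuration layout as commonly documented (version, locations with from/to/cron/forget/options, and backends with type/path); the location name, backend name, paths, and bucket are hypothetical, and the key names should be verified against the installed Autorestic version.

```python
import json
from typing import Any, Dict


def autorestic_config(dump_dir: str, bucket: str) -> Dict[str, Any]:
    """Back up only the dump directory, nightly, with 7/4/6 retention."""
    return {
        "version": 2,
        "locations": {
            "db_dumps": {
                "from": dump_dir,          # static .sql files, never live DB files
                "to": "offsite",
                "cron": "0 3 * * *",       # evaluated by `autorestic cron`
                "forget": "prune",         # forget and prune after each backup
                "options": {
                    "forget": {
                        "keep-daily": 7,
                        "keep-weekly": 4,
                        "keep-monthly": 6,
                    }
                },
            }
        },
        "backends": {
            "offsite": {
                "type": "s3",
                "path": f"s3.amazonaws.com/{bucket}",
                # credentials and the repository key are expected from the
                # environment or an env file, not hard-coded here
            }
        },
    }


def render(config: Dict[str, Any]) -> str:
    """Emit JSON; convert to YAML if the .autorestic.yaml form is preferred."""
    return json.dumps(config, indent=2)
```

The keep-* options correspond to the flags of the same name on restic forget, so the equivalent manual command would be restic forget --keep-daily 7 --keep-weekly 4 --keep-monthly 6 --prune.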

Comprehensive Observability and Monitoring Stack

Deploying a complex, containerized production environment requires granular visibility into both underlying host metrics and application-layer health. A dual-stack monitoring approach, with Netdata for deep system telemetry and Uptime Kuma for synthetic availability monitoring, covers the stack from the inside and from the outside.

Deep System Telemetry with Netdata

Netdata is a highly optimized, distributed monitoring agent that collects thousands of system metrics at an astonishing per-second granularity, operating with minimal CPU overhead. Crucially, when deployed as a privileged Docker container with the host’s /var/run/docker.sock mounted and SYS_PTRACE capabilities enabled, Netdata functions as a brilliant zero-configuration auto-discovery engine.

It natively reads the Linux cgroup (control group) filesystems, bypassing Docker’s abstractions to pull precise, real-time metrics regarding CPU scheduling, memory consumption (differentiating between RSS, cache limits, and mapped memory), and Block I/O read/write operations for every individual container running on the host. This level of introspection allows systems architects to pinpoint exact bottlenecks instantaneously. For example, rather than simply knowing the server is slow, an engineer can identify that specifically the Mautic MySQL container is exhausting its RAM allocation and falling back to swap memory during a massive email campaign launch.

Netdata ships out-of-the-box with hundreds of pre-configured health alarms. Through the configuration of the health.d/custom.conf file, administrators can define highly specific alert thresholds. An agency could set an alarm to trigger immediately if a single n8n worker container exceeds 1GB of memory usage for more than 60 consecutive seconds. These alerts are subsequently routed through webhook integrations to communication platforms like Slack, Discord, or PagerDuty, ensuring the DevOps team is notified of an anomaly before it cascades into a total system failure.

Synthetic Availability Monitoring with Uptime Kuma

While Netdata excels at monitoring internal resource consumption and kernel-level metrics, Uptime Kuma is explicitly tasked with synthetic external monitoring—verifying that the services are actually reachable and functioning from the perspective of an end-user on the internet. Uptime Kuma continuously polls the external HTTP/HTTPS endpoints of the marketing stack, verifying critical parameters such as SSL certificate validity, the presence of specific HTML keywords, and overall network response latency. It can also be configured to monitor specific TCP ports or even directly interface with the Docker daemon to verify container states.

For a digital agency managing hundreds of client URLs, API endpoints, and individual n8n webhook addresses, manually configuring these monitors via a graphical user interface is hopelessly inefficient. The modern architecture leverages the uptime-kuma-api Python library to programmatically interact with the monitoring system. By treating monitor configurations as declarative code, Continuous Integration/Continuous Deployment (CI/CD) pipelines can automatically trigger a Python script to add new HTTP monitors, TCP port checks, or Docker daemon watchers to Uptime Kuma the exact moment a new client environment is provisioned.
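A provisioning script along these lines might look like the sketch below. It uses the UptimeKumaApi class, its login, add_monitor, and disconnect methods, and the MonitorType enum from the third-party uptime-kuma-api package; the naming convention, the example URLs in the usage note, and the split into helper functions are assumptions. The library import is deferred so that the monitor definitions can be built and tested without a running Uptime Kuma instance.

```python
from typing import Any, Callable, Dict, List


def monitors_for_client(client: str, urls: List[str],
                        interval: int = 60) -> List[Dict[str, Any]]:
    """Describe one HTTP monitor per URL as plain, reviewable data."""
    return [
        {"type": "http", "name": f"{client} | {url}", "url": url, "interval": interval}
        for url in urls
    ]


def apply_monitors(api: Any, specs: List[Dict[str, Any]],
                   to_type: Callable[[str], Any] = lambda value: value) -> int:
    """Create each monitor through the given API object; returns the count."""
    for spec in specs:
        kwargs = dict(spec)
        kwargs["type"] = to_type(kwargs["type"])  # e.g. "http" -> MonitorType.HTTP
        api.add_monitor(**kwargs)
    return len(specs)


def provision(kuma_url: str, username: str, password: str,
              specs: List[Dict[str, Any]]) -> int:
    """Log in to Uptime Kuma and register the monitors."""
    # Third-party dependency: pip install uptime-kuma-api
    from uptime_kuma_api import MonitorType, UptimeKumaApi

    api = UptimeKumaApi(kuma_url)
    try:
        api.login(username, password)
        return apply_monitors(api, specs, to_type=MonitorType)
    finally:
        api.disconnect()
```

A CI/CD job could call provision with the output of monitors_for_client("acme", ["https://mautic.acme.example", "https://n8n.acme.example"]) right after the client stack comes up. This sketch does not check for existing monitors, so a real pipeline would first list current monitors to stay idempotent.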

Furthermore, Uptime Kuma provides highly customizable, public-facing Status Pages. This allows agencies to offer strict Service Level Agreement (SLA) transparency to their client base directly via WebSocket-driven real-time updates, displaying current status, historical uptime percentages, and incident history without requiring clients to submit support tickets.

Conclusion

The strategic transition to a self-hosted, Linux-based marketing stack represents a significant maturity milestone for modern digital agencies. It decisively reclaims data sovereignty in an era of strict privacy regulations, completely eliminates the punitive scaling costs associated with proprietary SaaS models, and establishes a highly adaptable engineering foundation.

By expertly orchestrating modular open-source applications (Mautic, n8n, Matomo, EspoCRM) through strict Docker Compose network isolation, hardening the underlying infrastructure with Ubuntu 24.04 and the behavior-based CrowdSec IPS, and ensuring absolute data resilience via S3-backed Restic backups and Netdata observability, an agency architects a technology ecosystem fully capable of supporting enterprise-grade, multi-tenant workflows. This sovereign stack not only aggressively protects the agency’s profit margins but fundamentally empowers it to offer unparalleled, legally compliant, and deeply integrated marketing technologies to its diverse clientele.