SageMaker vs. Vertex AI: Enterprise ML Platform Comparison

Enterprise Machine Learning Platforms: A Comprehensive Strategic and Technical Analysis of AWS SageMaker and Google Vertex AI

The enterprise cloud computing ecosystem is currently undergoing a structural transformation driven by the rapid maturation of artificial intelligence. Transitioning from traditional, predictive machine learning paradigms toward generative artificial intelligence (GenAI) and autonomous, multi-agent systems has fundamentally altered how organizations evaluate cloud infrastructure. Data Science and Machine Learning (DSML) platforms are no longer considered peripheral experimentation environments utilized solely by specialized researchers; they have become the foundational operating systems upon which modern enterprises construct their core business logic and competitive advantages. The 2026 Gartner Magic Quadrant for DSML Platforms explicitly highlights this shift, noting that the ability of a vendor to leverage GenAI and agentic workflows is now a primary differentiator, pushing vendors to prove the quality of AI-assisted experiences rather than merely the presence of underlying computing features.

Within this highly competitive landscape, two platforms command the vast majority of enterprise attention and strategic capital: Amazon Web Services (AWS) SageMaker and Google Cloud Vertex AI. Both platforms are universally recognized as industry leaders, consistently achieving the highest execution and vision scores in tier-one evaluations such as the Gartner Magic Quadrant and the Forrester Wave for AI Infrastructure Solutions. However, beneath their shared high-level capabilities—model training, hosting, monitoring, and orchestration—lies a profound philosophical divergence in how they approach software engineering, infrastructure abstraction, and user experience.

Choosing between AWS SageMaker and Google Vertex AI is rarely a simple feature-by-feature comparison; rather, it is a complex architectural commitment that dictates an organization’s engineering velocity, long-term operational expenditure, and talent acquisition strategy. The decision profoundly influences whether an enterprise will require a large team of dedicated Machine Learning Operations (MLOps) engineers to maintain bespoke infrastructure or whether data scientists can operate autonomously within a highly managed environment. This report provides an exhaustive, granular analysis of both platforms across the 2025 and 2026 technology cycles, examining their architectural paradigms, data engineering ecosystems, model development features, generative AI capabilities, inference economics, governance frameworks, and overall return on investment (ROI).

Architectural Philosophy and Ecosystem Integration Paradigms

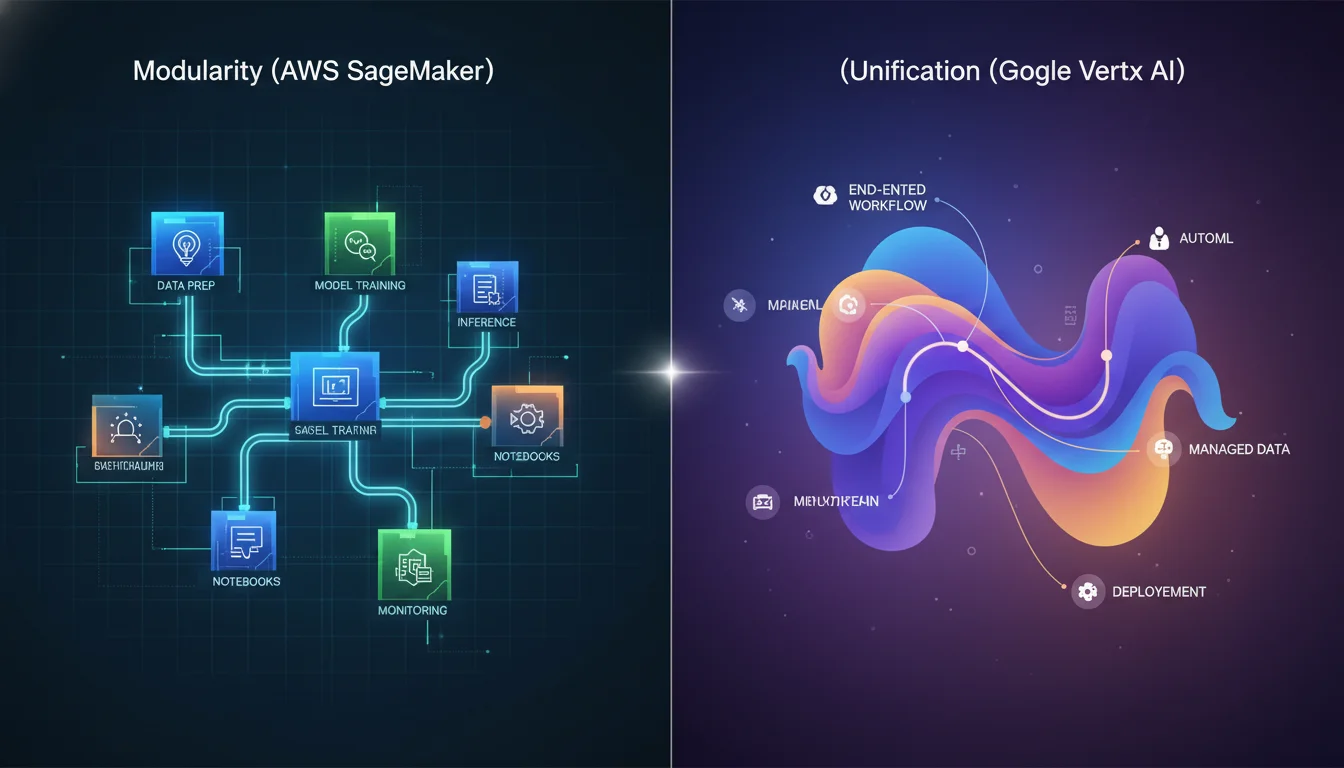

The architectural design of a machine learning platform inherently dictates how engineering teams interact with the underlying compute, storage, and networking layers. The core tension in modern ML platform design is the balance between granular, low-level infrastructure control and automated, frictionless simplicity. AWS SageMaker and Google Vertex AI represent the extreme ends of this spectrum, catering to vastly different organizational structures and engineering cultures.

AWS SageMaker: Granular Control, Modularity, and Ecosystem Depth

Launched in 2017, Amazon SageMaker has evolved into a vast, highly modular suite of independent ML tools deeply embedded within the AWS ecosystem. Its architecture is best understood as a sophisticated “constructor set” rather than a single monolithic application. Components such as SageMaker Training, SageMaker Endpoints, SageMaker Pipelines, and SageMaker Feature Store can be utilized entirely independently or woven together via custom orchestration logic.

This modularity provides profound, granular control over the infrastructure. Machine learning engineers and DevOps professionals can specify precise Elastic Compute Cloud (EC2) instance types down to the hardware accelerator level, configure complex Virtual Private Cloud (VPC) network isolations with custom routing tables, and attach specialized shared file systems like Amazon Elastic File System (EFS) or Amazon FSx for high-throughput data loading during training. However, this deep integration means that maximizing SageMaker’s potential requires substantial organizational familiarity with the broader AWS ecosystem, particularly Identity and Access Management (IAM), Amazon Simple Storage Service (S3), and Amazon CloudWatch.

The second-order implication of this architectural choice is the learning curve. SageMaker is undeniably an engineer-first environment, often described by practitioners and analysts as having a significantly steeper learning curve compared to its competitors. It is a system designed for teams that require the absolute maximum extraction of performance and efficiency from their infrastructure, and who possess the technical acumen to manipulate the requisite “knobs” for fine-tuning network and compute behavior.

To address the fragmentation inherent in a highly modular system, AWS introduced Amazon SageMaker Unified Studio in late 2025. This environment attempts to bridge the gap between disparate data analytics and AI tools by providing a unified lakehouse architecture. Unified Studio integrates with AWS IAM Identity Center and allows users to seamlessly browse data, search assets via a business glossary, and execute Visual Extract, Transform, and Load (ETL) jobs or write Apache Spark code natively within notebooks. Despite these advancements toward unification, the underlying reality of SageMaker remains rooted in providing maximum flexibility at the cost of operational complexity.

Google Vertex AI: Unified Workflows, Automation, and Opinionated Design

Vertex AI, introduced subsequently as a consolidation of Google Cloud’s earlier, fragmented AI Platform and AutoML services, emphasizes an “AI-native,” unified, and highly opinionated workflow. Vertex AI deliberately abstracts much of the underlying infrastructure, offering a significantly more automated and streamlined deployment experience. It is heavily optimized around Google Cloud’s data stack, making it an exceptionally attractive proposition for data engineering teams already leveraging Google BigQuery or Cloud Storage for their enterprise data warehousing.

The design philosophy underpinning Vertex AI aims to minimize the operational friction inherent in moving models from local experimentation to global production. By centralizing the entire machine learning lifecycle into a single, intuitive interface, Vertex AI accelerates deployment velocity, particularly for data science teams that may lack deep cloud infrastructure or Kubernetes expertise. The platform provides an integrated control center where data labeling, model training, hyperparameter tuning, model evaluation, and deployment occur seamlessly without requiring manual stitching of disparate services.

Furthermore, Vertex AI’s architecture is deeply integrated with Dataplex Universal Catalog, which automates the discovery, governance, and lineage tracking of ML artifacts across disparate regions and Google Cloud projects. This reduces the cognitive load on administrators and ensures that governance is treated as a default state rather than an engineered afterthought. However, this simplicity comes with trade-offs; Vertex AI offers moderately less flexibility and fine-tuning capability for niche infrastructural configurations when compared to the vast configurability of AWS SageMaker.

Cross-Cloud Orchestration and the Reality of Ecosystem Lock-in

While cloud vendors frequently tout the portability of containerized machine learning workloads, the reality of enterprise AI deployments is that both platforms inevitably create profound ecosystem lock-in. Models themselves may be portable Docker containers, but the surrounding data ingestion pipelines, identity policies, continuous integration frameworks, and orchestration logic are deeply tethered to the host cloud. Migrating petabytes of training data between clouds introduces prohibitive latency delays and massive network egress costs, meaning that an organization’s existing cloud data gravity is often the primary, overriding determinant of which ML platform will yield the highest engineering and financial efficiency.

In scenarios where enterprises adopt a multi-cloud strategy, integrating AWS SageMaker for granular model training with Google Vertex AI for pipeline management requires complex hybrid architectures. This necessitates advanced identity federation, mapping AWS IAM roles to Google Cloud Platform (GCP) service accounts via OpenID Connect (OIDC), and allowing policy engines to handle secure token exchange. While technically feasible, maintaining strict least-privilege security boundaries and mirrored policy rules across two distinct cloud ecosystems introduces substantial administrative overhead, highlighting why most organizations ultimately standardise on a single primary platform.

Data Engineering, Preparation, and Feature Store Architecture

Data readiness is the fundamental, non-negotiable prerequisite for AI readiness. The quality, cleanliness, and accessibility of training data directly dictate the upper bound of a model’s performance. The mechanisms by which raw data is ingested, cleaned, labeled, transformed, and served to models during training and real-time inference differ significantly between the AWS and Google Cloud ecosystems.

SageMaker Data Preparation and Processing Tools

SageMaker provides a robust, albeit historically fragmented, suite of tools specifically engineered for large-scale data preparation.

This toolkit caters to various personas, from software engineers writing custom distributed processing code to business analysts utilizing visual interfaces.

For users who prefer visual, low-code environments, AWS offers SageMaker Data Wrangler, which is integrated directly into the SageMaker Canvas interface. Data Wrangler is heavily optimized for tabular data tasks, allowing users to combine, clean, and transform datasets using intuitive point-and-click interactions and over 300 built-in transformations. Furthermore, recent updates allow users to employ generative AI-powered natural language instructions to execute complex data transformations without writing underlying Python or Pandas code.

For massive, distributed data processing tasks that exceed the capacity of single instances, SageMaker integrates deeply with Amazon EMR Serverless, enabling data engineers to execute highly scalable Apache Spark and Apache Hive workloads directly within SageMaker Studio without the operational burden of managing Hadoop or Spark clusters. Additionally, AWS provides the SageMaker Processing API, which allows advanced teams to productionize their custom Python or Spark transformation code into managed, containerized jobs that automatically spin up, execute the transformation, output the processed data to S3, and tear themselves down.

When supervised machine learning requires human intervention, SageMaker Ground Truth serves as the primary mechanism for managing complex data labeling workflows. It provides a comprehensive “human-in-the-loop” framework, allowing enterprises to route raw image, text, or video data to internal teams, third-party vendors, or the Amazon Mechanical Turk workforce to generate high-quality, annotated training sets.

Vertex AI Data Management and BigQuery Synergy

Google Cloud’s approach to data preparation within Vertex AI is defined by its incredibly tight coupling with its broader data analytics portfolio, most notably BigQuery. This integration is widely considered Vertex AI’s most potent strategic advantage for data-centric organizations.

Rather than requiring data to be extracted from a warehouse, transformed in an external processing engine, and loaded into a specific machine learning environment, Vertex AI allows data scientists to operate directly where the data resides. Utilizing BigQuery ML, practitioners can perform complex SQL-based data transformations, feature engineering, and even execute distributed model training directly within the data warehouse infrastructure. Models trained in BigQuery ML are automatically registered into the Vertex AI Model Registry without requiring manual export or import procedures, fundamentally streamlining the transition from analytics to predictive modeling.

For data labeling requirements, Vertex AI Data Labeling provides a service highly comparable to SageMaker Ground Truth, facilitating the generation of high-quality labeled datasets through managed human reviewers. Furthermore, Vertex AI features a concept known as “managed datasets,” which automatically interface with Vertex ML Metadata. This ensures that the exact state, schema, and statistical distribution of the dataset used for any specific training run are permanently recorded and queryable in the model’s lineage graph.

Feature Store Implementations: Managed vs. Manual Engineering

A critical component of modern machine learning architecture is the Feature Store—a centralized repository designed to organize, store, share, and serve curated features for both offline training and low-latency online inference.

Google’s Vertex AI Feature Store provides a highly managed experience. It natively handles the automated synchronization between offline stores (used for batch training) and online stores (used for real-time inference), ensuring that models in production always access the most up-to-date feature values. Vertex AI’s solution provides built-in mechanisms for batch features and vector databases with minimal engineering overhead.

Conversely, the Amazon SageMaker Feature Store, while deeply integrated with AWS IAM and CloudTrail for auditing, requires substantially more manual engineering setup. Crucially, out-of-the-box, the SageMaker Feature Store lacks native, managed support for complex streaming aggregations and automated online/offline synchronization. To achieve feature parity with highly managed systems, AWS architects frequently must integrate supplementary services, such as deploying Amazon OpenSearch (Elasticsearch), Amazon DynamoDB, or third-party vector databases like Pinecone and Chroma, to handle real-time streaming feature aggregation. This requirement highlights the constructor-set nature of SageMaker, demanding a higher baseline of data engineering capability.

| Data Engineering Feature | AWS SageMaker Ecosystem | Google Vertex AI Ecosystem |

|---|---|---|

| Visual Data Preparation | SageMaker Data Wrangler / Canvas | Integrated Data Prep / Dataprep |

| Big Data Processing | EMR Serverless, SageMaker Processing API | BigQuery ML, Cloud Dataflow |

| Data Labeling | SageMaker Ground Truth | Vertex AI Data Labeling |

| Feature Store Management | Requires manual sync & external integration | Highly managed, automated online/offline sync |

| Streaming Feature Aggregation | Requires external tools (e.g., Pinecone, OpenSearch) | Supported within managed framework |

Model Development, Hardware Acceleration, and Automated ML

Hardware Acceleration and Silicon Co-Design

As the industry shifts toward training massive Foundation Models and large-scale deep learning networks, the computational efficiency of the underlying hardware defines both the speed of innovation and the ultimate cost of deployment.

Google Cloud pursues an aggressive strategy of silicon-infrastructure co-design. Vertex AI provides native, seamless access to Google’s proprietary Tensor Processing Units (TPUs), including advanced iterations like the TPU v5p. TPUs offer extraordinary performance-per-watt efficiency and massive speed-ups for specific deep learning workloads, particularly large language models based on transformer architectures and models authored in TensorFlow. In addition to TPUs, Google provides vast arrays of NVIDIA GPUs, including the highly sought-after A100 and H100 configurations. However, strategic deployment on GCP must account for hardware scarcity; TPUs are available in fewer geographical regions than standard GPUs and are subject to highly restrictive resource quotas, often requiring enterprises to secure committed capacity well in advance of production workloads.

Amazon Web Services counters this with an exceptionally broad and deep selection of computational infrastructure. SageMaker allows engineers to provision massive clusters of NVIDIA GPUs, automatically scaling compute nodes based on the dynamic demands of the workload. More strategically, AWS has aggressively developed its own proprietary silicon alternatives, specifically the AWS Trainium and AWS Inferentia (e.g., Inferentia3) chip families. These chips are engineered from the ground up to provide highly cost-effective alternatives to NVIDIA hardware for deep learning training and inference, significantly lowering the barrier to scaling massive models for enterprises willing to compile their models for AWS’s Neuron SDK architecture.

Automated Machine Learning (AutoML) and Democratization

For organizations seeking to bypass the highly specialized, manual processes of hyperparameter tuning, neural architecture search, and algorithm selection, both platforms offer sophisticated automated machine learning suites.

Google’s Vertex AI AutoML is widely considered highly mature and industry-leading in its ease of use. It enables users to quickly generate state-of-the-art predictive models for computer vision, natural language processing, and complex tabular data with absolutely minimal code. This capability significantly lowers the barrier to entry, empowering business analysts and citizen data scientists to deploy performant models that historically required PhD-level expertise.

AWS provides comparable capabilities through SageMaker Autopilot, which automates the end-to-end process of building, training, and tuning ML models, providing full visibility into the generated code so data scientists can further refine the automated baseline. For entirely code-free development, AWS offers SageMaker Canvas, a visual interface designed explicitly for business analysts. Canvas allows users to drag and drop datasets, automatically train predictive models, and even fine-tune pre-trained foundation models with just a few clicks, fully democratizing access to predictive analytics across the enterprise.

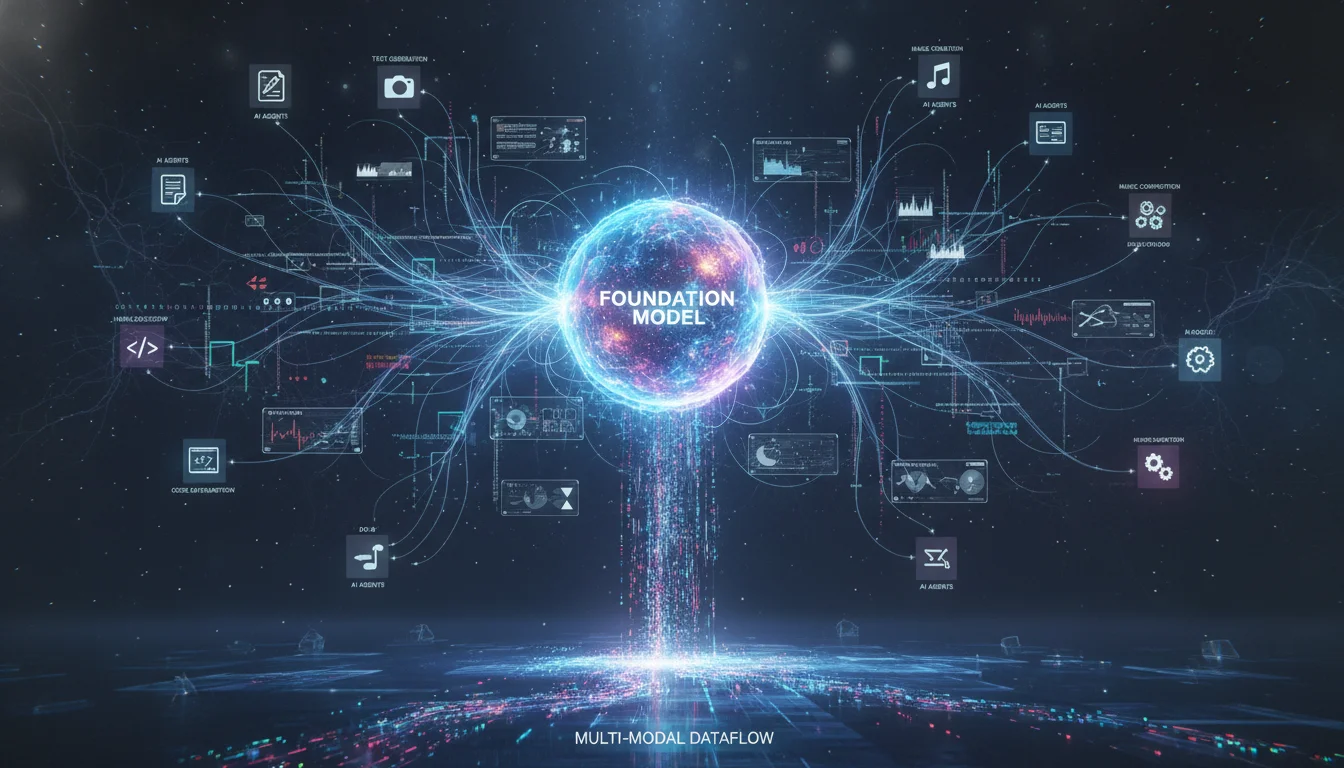

The Generative AI Era: Foundation Models and Agentic Workflows

Throughout 2024, 2025, and into 2026, the focal point of the machine learning platform war has shifted almost entirely to Generative AI, Foundation Models (FMs), and autonomous agentic workflows. Both AWS and Google have aggressively transformed their respective platforms into centralized hubs for accessing, fine-tuning, evaluating, and deploying Large Language Models (LLMs).

The strategic approach of each vendor, however, reflects divergent priorities regarding proprietary model development versus open ecosystem curation.

Vertex AI Model Garden and the Gemini Ecosystem

Google positions the Vertex AI Model Garden as a massive, “neutral supermarket” for foundation models. The Model Garden houses an extensive, curated selection of over 200 enterprise-ready models. This repository includes Google’s powerful first-party models (the Gemini family, Imagen, Chirp, and Veo), deep partnerships with third-party providers (such as Anthropic’s Claude 3.5 Sonnet and Opus), and highly optimized open-source weights (including Meta’s Llama 3 and Google’s own Gemma series).

The crown jewel of Vertex AI is its native, deep integration with the Gemini model family. Vertex AI provides immediate API access to Google’s most capable multimodal models, including Gemini 3.0 Pro, Gemini 2.5 Pro, and specialized reasoning variants like Gemini 2.5 Flash Reasoning. Developers can utilize Vertex AI Studio to prompt, test, and system-tune Gemini using complex mixtures of text, high-definition images, video, and code natively within the browser.

Furthermore, acknowledging the industry’s rapid evolution from basic chatbots to complex, multi-agent systems that autonomously execute tasks, Google introduced Vertex AI Agent Engine and Agent Builder. This includes an Agent Development Kit (ADK) available for Python and Java, alongside a fully managed runtime environment. Agent Engine directly solves the operational hurdles that historically cause AI agent pilots to fail in production by providing built-in memory banks, secure code execution sandboxes, strict release management, and global scalability.

SageMaker JumpStart and the Amazon Nova Series

AWS relies on SageMaker JumpStart as its primary intra-platform repository for discovering and deploying pre-trained open-source and proprietary models, operating in tandem with Amazon Bedrock for fully managed, serverless API access to foundation models.

While AWS heavily promotes third-party models like Anthropic’s Claude, late 2024 and 2025 saw Amazon aggressively enter the proprietary model race with the launch of the Amazon Nova family. The Nova series—comprising Nova Micro, Nova Lite, Nova Pro, and Nova Premier—is engineered specifically to provide low-cost, tightly integrated, multimodal infrastructure for the enterprise. Amazon’s strategic bet with Nova is not necessarily to win every benchmark on raw, absolute intelligence, but to dominate unit economics and latency. For example, Amazon Nova Micro achieves an extraordinary, industry-leading speed of 210 output tokens per second, making it the ideal foundation for high-throughput, latency-critical applications where heavy reasoning models would be cost and time-prohibitive.

To support the customization of these massive models, AWS introduced serverless model customization capabilities within SageMaker in 2025. This innovation automatically selects and provisions the precise compute infrastructure required based on the model’s architecture and the dataset size. It supports a highly advanced spectrum of fine-tuning methodologies, including Parameter-Efficient Fine-Tuning (PEFT), Low-Rank Adaptation (LoRA), and Quantized LoRA (QLoRA). Furthermore, SageMaker provides native support for critical alignment techniques required to ensure AI systems adhere to corporate guidelines, including Reinforcement Learning from Human Feedback (RLHF), Direct Preference Optimization (DPO), and Reinforcement Learning from AI Feedback (RLAIF).

Comparative Performance Benchmarks

A quantitative comparison of model intelligence reveals the divergent strategic priorities of Google and AWS. Google’s Gemini 3.0 and 2.5 Pro models generally dominate raw intelligence, complex mathematics, and deep reasoning benchmarks, positioning them as the superior choice for highly complex, unstructured problem-solving. Conversely, Amazon Nova models compete aggressively on speed, cost-efficiency, and highly specific sub-tasks.

| Benchmark Category | Amazon Best Performer | Google Best Performer |

|---|---|---|

| General Intelligence | 19.0 (Nova Premier 1.0) | 48.4 (Gemini 3 Pro Preview) |

| Advanced Mathematics | 33.7 (Nova 2 Lite) | 95.7 (Gemini 3 Pro Preview) |

| MMLU Pro (Knowledge) | 74.3 (Nova 2 Lite) | 89.8 (Gemini 3 Pro Preview) |

| LiveCodeBench (Coding) | 34.6 (Nova 2 Lite) | 91.7 (Gemini 3 Pro Preview) |

| AIME (Reasoning) | 17.0 (Nova Premier 1.0) | 88.7 (Gemini 2.5 Pro) |

Data sourced from aggregated competitive AI benchmark analysis across 2025/2026 evaluations.

The data emphatically suggests that for use cases requiring immense mathematical reasoning or advanced autonomous code generation, Vertex AI utilizing Gemini 3.0 Pro holds a distinct, undeniable advantage. However, AWS’s ecosystem approach—allowing enterprises to utilize Nova for high-speed, low-cost routing tasks while seamlessly calling Anthropic’s Claude 3.5 for complex reasoning via Amazon Bedrock—ensures that AWS customers are not fundamentally disadvantaged in production capabilities.

The Architectural Trade-off: RAG versus Fine-Tuning

Integrating proprietary enterprise knowledge into Foundation Models requires architects to choose between Retrieval-Augmented Generation (RAG) and model fine-tuning.

This decision transcends basic capabilities; it dictates the security boundaries, scaling behavior, and long-term costs of the AI system.

Retrieval externalizes knowledge. By using vector databases and embeddings (heavily supported by both Vertex AI RAG Engine and Amazon Bedrock Knowledge Bases), the system retrieves relevant documents and inserts them into the model’s prompt at inference time. This approach is highly auditable and flexible, as access control is enforced at query time based on user identity and entitlements. However, every retrieval query expands the attack surface, creating opportunities for malicious prompt injection designed to manipulate the model’s output.

Fine-tuning, conversely, internalizes knowledge directly into the neural network’s weights. This substantially reduces the system’s exposure to retrieval manipulation and prompt injection. However, it introduces a profound security risk: sensitive enterprise data embedded permanently within billions of mathematical weights is exceedingly difficult to audit, scope, or cleanly remove if access policies change. SageMaker’s deep, native integration with AWS Key Management Service (KMS) and highly granular IAM roles provides a slight architectural edge in securely managing, isolating, and encrypting the customized weight artifacts generated during advanced fine-tuning jobs.

MLOps, Pipeline Orchestration, and Metadata Lineage

Machine Learning Operations (MLOps) encompasses the rigorous engineering practices required to deploy, scale, and maintain models reliably in production environments. Despite vendor marketing claims that managed platforms eliminate the need for specialized personnel, MLOps engineers remain vital; while generic tutorial examples are straightforward, actual enterprise data pipelines and business requirements are inherently complex, requiring continuous automation, monitoring, and intervention.

Continuous Integration and Pipeline Orchestration

The mechanisms by which workflows are automated differ drastically between the platforms, reflecting their underlying philosophies.

- Vertex AI Pipelines: Google utilizes Kubeflow—a highly popular, open-source Kubernetes-native platform—as the foundational orchestration engine for Vertex AI Pipelines. This architectural choice provides exceptional portability; pipelines authored by data scientists locally, or on on-premises Kubernetes clusters, can be executed on Vertex AI with minimal modification. It tightly integrates with Google Cloud services like BigQuery and Cloud Storage, offering an intuitive UI that is heavily favored by teams already embedded in the GCP ecosystem.

- SageMaker Pipelines: AWS, conversely, utilizes a proprietary CI/CD framework natively integrated with the broader AWS ecosystem, specifically leveraging AWS Step Functions, AWS Lambda, and Amazon EventBridge. While this proprietary approach lacks the cross-cloud portability of Kubeflow, it provides unparalleled reliability, complex event-driven triggering capabilities, and deep security integrations, making it the preferred choice for large-scale enterprise workloads that demand rigorous compliance and auditing.

Metadata Management and AI Data Lineage

Tracing the exact lifecycle of an AI artifact—from the raw data query to the final deployed prediction endpoint—is critical for ensuring experimental reproducibility, diagnosing model degradation, and adhering to strict regulatory compliance audits.

Vertex ML Metadata provides a highly sophisticated tracking system that builds upon concepts from the open-source ML Metadata library developed by the TensorFlow Extended team. Vertex AI automatically captures comprehensive execution graphs. An artifact’s recorded lineage includes the specific training, test, and evaluation datasets, the precise hyperparameter configurations, the exact codebase executed, and all descendant artifacts, such as the results of batch prediction jobs. Because Vertex AI interfaces seamlessly with Google Data Catalog and Dataplex Universal Catalog (utilizing Fully Qualified Names or FQNs), lineage can be traced immutably across the entire organization, providing a complete picture of the AI data journey.

SageMaker similarly generates queryable lineage graphs automatically as users progress through ML workflows. Engineers can query this backend data to answer complex questions regarding artifact origin and downstream usage. Furthermore, SageMaker Model Registry allows for the detailed tracking and versioning of models, enabling data scientists to programmatically compare different model versions based on specific evaluation metrics, such as Mean Squared Error (MSE), directly within the SageMaker Studio interface before approving a version for deployment.

| MLOps & Orchestration Feature | AWS SageMaker Pipelines | Google Vertex AI Pipelines |

|---|---|---|

| Core Orchestration Engine | Proprietary CI/CD (AWS Step Functions) | Kubeflow (Open Source backing) |

| Metadata Tracking | Queryable lineage entities | Vertex MLMD (Execution graphs) |

| Model Registry | Versioning, MSE comparison | BigQuery ML integration, Dataplex mapping |

| Explainability Tooling | Feature importance, SHAP values | Built-in fairness metrics, feature attribution |

| UI/UX Experience | Comprehensive but complex navigation | Intuitive, visual execution graphs |

Inference Infrastructure, Autoscaling, and Deployment Modalities

Model inference—the operational phase where a trained model processes new data and serves predictions—accounts for the vast majority of machine learning compute costs and engineering complexity at enterprise scale. The platforms vary drastically in how they manage endpoints, request routing, and autoscaling behavior.

Deployment Modalities and Payload Constraints

Both platforms provide robust solutions for serving models, but their specific deployment patterns cater to different use cases.

- SageMaker Deployment Options: SageMaker offers exceptional versatility through three primary deployment modes. It provides real-time endpoints for mission-critical, sub-second latency applications; batch transform jobs for processing massive offline datasets asynchronously; and asynchronous inference endpoints backed by Amazon Simple Queue Service (SQS) with results deposited into Amazon S3. This asynchronous approach is highly resilient, capable of handling variable processing times and sudden traffic spikes without dropping requests, provided the application architecture supports AWS messaging protocols. Furthermore, SageMaker supports massive payload sizes, permitting up to 6 MB for synchronous requests and an enormous 1 GB for asynchronous requests, with timeouts extending up to 15 minutes.

- Vertex AI Deployment Options: Vertex AI supports online prediction endpoints for real-time results and batch prediction heavily integrated with Cloud Storage and BigQuery. However, Vertex AI notably lacks a fully managed, decoupled asynchronous inference option comparable to SageMaker’s SQS-backed architecture. Consequently, handling variable, long-running requests in Vertex AI often requires custom engineering to route high-latency traffic through BigQuery batch jobs rather than real-time queues. Additionally, Vertex AI imposes stricter limits, with public endpoints capped at a 1.5 MB payload size and a strict 60-second timeout limit before prediction requests are dropped.

Multi-Model Endpoints and Scale-to-Zero Capabilities

For organizations hosting hundreds or thousands of specialized models (such as highly personalized recommendation engines), dedicating a unique, always-on compute instance to every individual model is financially unsustainable.

AWS SageMaker excels profoundly in this domain through its native support for Multi-Model Endpoints (MMEs). MMEs allow engineers to host multiple distinct models on a single, shared endpoint, drastically reducing infrastructural overhead and idle costs. The SageMaker infrastructure dynamically loads models from Amazon S3 into instance memory based on real-time traffic demands, keeping frequently accessed models hot in memory while evicting stale models. Crucially, SageMaker allows these components to scale independently and supports scaling idle inference components entirely down to zero, preserving immense capital during periods of low demand. Furthermore, SageMaker allows autoscaling triggers to be configured based on Queries Per Second (QPS) rather than just standard CPU/memory utilization, providing highly precise control over expensive GPU accelerator utilization.

Conversely, Google Vertex AI provides managed auto-scaling for its endpoints but currently lacks native, built-in MME support equivalent to SageMaker’s dynamic S3 loading. To achieve multi-model routing on a single Vertex endpoint, ML engineering teams must develop custom containerization strategies with manually authored model routing, caching, and eviction logic, introducing significant maintenance overhead. Additionally, synchronous Vertex AI endpoints do not support scaling down to zero instances, meaning a baseline cost is always incurred to keep the endpoint responsive.

2025 Inference Innovations: Bidirectional Streaming and Rolling Updates

In 2025, AWS introduced several critical enhancements to SageMaker AI model hosting.

Foremost among these is the implementation of bidirectional streaming for real-time inference. Utilizing HTTP/2 and WebSocket protocols, this architecture maintains persistent connections where data flows simultaneously in both directions between the client and the model. This eliminates the latency overhead of repeated TCP handshakes and transforms inference from isolated, transactional HTTP requests into continuous data streams. This capability is absolutely critical for the new generation of multi-modal applications, enabling real-time voice agents, continuous audio transcription, and live translation services (partnering directly with providers like Deepgram for their Nova-3 speech-to-text models).

Furthermore, SageMaker introduced rolling updates for inference components. Unlike traditional blue/green deployments that require provisioning duplicate, expensive infrastructure to test a new model version, rolling updates deploy the new model in configurable, fractional batches. The system deeply integrates with Amazon CloudWatch alarms; if anomalies, latency spikes, or error codes are detected during the rollout, SageMaker automatically halts the deployment and triggers an immediate rollback to the stable version, ensuring zero-downtime updates with high infrastructural efficiency. Observability was also massively enhanced, allowing engineers to track precise, unaggregated metrics down to the specific InstanceId and ContainerId levels, with publishing frequencies configurable down to 10 seconds for near real-time monitoring of critical workloads.

Governance, Security, and Compliance in Regulated Industries

As machine learning systems transition from experimental prototypes to mission-critical applications within highly regulated sectors—such as healthcare, financial services, and government—governance, robust security, and comprehensive auditing transition from secondary features to absolute, non-negotiable vendor prerequisites.

Regulatory Compliance and Network Isolation

Both AWS and Google operate under the most rigorous global compliance frameworks, providing platforms certified for HIPAA, SOC 2, PCI DSS, GDPR, and FedRAMP workloads.

For organizations operating under strict data sovereignty and military-grade security architectures, AWS SageMaker provides unparalleled granular isolation. It offers extensive auditing capabilities through AWS Artifact and CloudTrail. In 2025, SageMaker expanded its network security profile by introducing comprehensive support for IPv6 addresses across both public and private VPC endpoints, allowing modern enterprises to future-proof their dual-stack network architectures. Crucially, SageMaker features deep AWS PrivateLink integration across all regions, ensuring that enterprises can access SageMaker inference endpoints privately from within their isolated VPCs without sensitive payload data ever traversing the public internet—a fundamental requirement for HIPAA and defense compliance. Administrative governance is enforced via SageMaker Role Manager, which allows security architects to define precise, least-privilege IAM permissions mapped dynamically to individual ML personas.

Google Vertex AI counters with a governance framework that emphasizes seamless integration with Google’s broader security ecosystem. It provides Assured Workloads to mathematically enforce compliance and security controls based on specific regulatory regimes. To satisfy the strict data sovereignty laws of the European Union and other jurisdictions, Google offers Data Residency Zones (DRZ) and the capability to deploy Vertex AI on Google Distributed Cloud (GDC) for on-premises and edge environments, ensuring that data processing never crosses defined geographic boundaries. Furthermore, Google provides Model Armor, a governance-first tool specifically engineered for GenAI. Model Armor provides integrated logging, pre-configured policy templates, and rigorous input/output response filtering to detect and neutralize risks such as PII leakage or prompt injection attacks in regulated sectors.

Continuous Model Monitoring and Drift Detection

Machine learning models are not static software; they inherently degrade over time as the statistical distributions of real-world, live data drift away from the distributions of the historical data used during training. Proactive detection of this degradation is critical.

AWS provides Amazon SageMaker Model Monitor, a fully managed service that continuously evaluates the quality of models hosted in production. It automatically detects data drift, concept drift, and sudden drops in prediction accuracy, alerting model owners to initiate retraining cycles. A specialized subset of this tool, SageMaker Clarify, targets bias detection and explainability. Clarify continuously monitors predictions and automatically generates exportable reports and CloudWatch alerts if bias metrics exceed predefined thresholds. For example, if a model predicting housing prices begins to exhibit bias because the live, real-world mortgage rates have shifted significantly from the rates present in the training dataset, Clarify detects the variance in the Disparate Impact (DPPL) metric and alerts engineering teams to the algorithmic inequity.

Google’s Vertex AI Model Monitoring service provides highly equivalent capabilities, seamlessly integrating with Cloud Logging and Cloud Monitoring to track model health. It excels particularly at Data Skew Detection—identifying statistical differences between the distribution of the original training data and the distribution of the incoming prediction data—and Feature Attribution Drift Detection, which alerts data scientists when the importance of specific input features shifts unexpectedly over time.

Economics, Cost Management, and Enterprise Return on Investment (ROI)

The training of Foundation Models and the deployment of massive-scale MLOps architectures introduce unprecedented computational costs to the enterprise balance sheet. Navigating the pricing structures of AWS and Google Cloud requires meticulous architectural planning, as sub-optimal configurations can lead to catastrophic cost overruns.

Pricing Models and Discount Mechanisms

Both platforms operate on a pay-as-you-go, usage-based billing model, but their primary mechanisms for securing enterprise-scale cost optimization differ significantly in structure and flexibility.

AWS offers Machine Learning Savings Plans for SageMaker, providing extraordinary potential savings of up to 64% to 72% off standard on-demand pricing in exchange for a committed usage term of 1 or 3 years. The critical trade-off is flexibility; this financial commitment is strictly tied to specific instance families. If an enterprise’s ML workload characteristics shift drastically—for example, pivoting from traditional CPU-based random forest inference to heavy, GPU-accelerated large language model rendering—reallocating the financial commitment to a new hardware tier can be administratively complex and economically penalizing for small to mid-sized projects. Furthermore, accessing SageMaker’s premier collaborative tools, such as SageMaker Studio, incurs baseline monthly costs (approximately $24/month per user), and the AWS free tier is highly restrictive, offering only 250 hours per month on a small ml.t3.medium instance (roughly 10 days of continuous uptime), with no access to GPUs.

Google Cloud offers a slightly different financial structure. It automatically applies Sustained Use Discounts for VMs running continuously, and provides Committed Use Discounts (CUDs) for planned, steady resource usage over 1 or 3-year terms, yielding up to 55% to 57% savings on specific amounts of vCPU and RAM usage. Google favors a more integrated, opinionated billing posture, charging strictly per vCPU-hour or via simplified request-based pricing for its managed APIs. Vertex AI also provides a more generous initial experimentation buffer, offering $300 in flexible cloud credits valid for 90 days, allowing startups to test GPU-accelerated workloads without immediate capital outlay.

For highly fault-tolerant workloads, such as hyperparameter optimization sweeps or distributed batch training, both cloud providers offer deeply discounted ephemeral compute resources.

AWS Spot Instances provide up to 90% savings compared to on-demand pricing, while Google Cloud Spot VMs offer competitive savings ranging from 60% to 91%. Utilizing these resources requires sophisticated pipeline engineering to handle sudden instance terminations gracefully.

- Financial Metric/Cost Optimization Feature

- AWS SageMaker Ecosystem

- Google Vertex AI Ecosystem

- Primary Billing Posture

- Instance-based (per hour)

- Usage-based (vCPU/hour, request-based)

- Commitment Discount Mechanism

- ML Savings Plans (Up to 72% savings)

- Committed Use Discounts (Up to 57% savings)

- Ephemeral Compute Savings

- Spot Instances (Up to 90% savings)

- Spot VMs (Up to 91% savings)

- New User Free Tier / Credits

- 250 hours/month (t3.medium) for 2 months

- ~$300 in flexible credits for 90 days

Data compiled from vendor pricing documentation and third-party financial analysis.

Value Realization and Proven Enterprise ROI

Despite the massive capital expenditure required to establish an AI infrastructure footprint, enterprise ROI metrics demonstrate profound, measurable business value.

A comprehensive 2025 study by IDC evaluating global enterprise adoption of Google Cloud AI reported an astonishing average return on investment of 727% over a three-year period for customers utilizing Google’s AI stack. The speed of value realization is equally notable; 74% of enterprise executives utilizing Google’s generative AI tools reported achieving positive ROI within the first 12 months, with 51% successfully taking an AI application from the ideation phase to a live production use case in just 3 to 6 months. Within Google’s own internal operations, the utilization of these AI tools has led to a 10% measurable increase in software coding velocity, with AI agents now autonomously suggesting 30% of all newly written code.

AWS boasts similarly massive enterprise adoption, with extensive case studies highlighting rapid ROI across heavily regulated industries. For instance, Clearwater Analytics utilizes SageMaker JumpStart and Amazon Bedrock to securely optimize generative AI model costs and performance within the highly scrutinized financial services sector. Similarly, consumer-facing organizations like GuardianGamer leverage the hyper-efficient Amazon Nova models for highly scalable, cost-effective narrative generation, proving the economic viability of AWS’s low-latency proprietary models.

Ultimately, SageMaker’s cost optimization ceiling is structurally higher due to its granular infrastructure controls. Features like Multi-Model Endpoints and the ability to scale asynchronous inference components entirely to zero eliminate idle compute costs—the silent killer of ML budgets. However, Vertex AI’s highly managed inference components, native serverless integrations, and lack of complex VPC routing overhead make it inherently easier for smaller, less specialized teams to achieve a highly efficient baseline operational cost without requiring a dedicated squad of FinOps engineers.

Market Mindshare, Analyst Evaluation, and Strategic Conclusion

Analyst Perspectives: Gartner and Forrester (2025–2026)

Independent analyst assessments repeatedly validate the absolute dominance of both AWS and Google Cloud in the DSML landscape, though their market mindshare and strategic focuses differ significantly based on the target persona.

Both AWS and Google are positioned as definitive Leaders in the 2025 and 2026 Gartner Magic Quadrant for Data Science and Machine Learning Platforms.

Gartner analysts highlight Google’s extraordinary engineering depth, vertical integration from custom silicon (TPUs) to proprietary software (Gemini), and production operational features like Agent Engine. These tools directly resolve the complex reproducibility, testing, and secure code execution issues that historically cause enterprise AI pilots to stall before reaching production. However, user reviews within the Gartner Peer Insights network frequently note that Vertex AI’s documentation can be frustratingly sparse for highly advanced use cases, and that handling vast quantities of complex unstructured data through Vertex AI Search can become unexpectedly cost-prohibitive as query volumes scale.

AWS SageMaker is universally praised by analysts for its unmatched completeness of vision, unyielding execution capability, and “customer obsession”. It remains the de facto choice for massive enterprises that demand absolute, uncompromising control over every layer of their machine learning infrastructure. The platform’s immense breadth allows it to serve multiple distinct personas effectively, from deep-learning researchers requiring root SSH access to distributed GPU clusters, to business analysts leveraging the visual interfaces of SageMaker Canvas.

In the Q4 2025 Forrester Wave for AI Infrastructure Solutions, Google Cloud received the highest possible score in 16 out of 19 evaluation criteria—including Vision, Inferencing, Efficiency, and Architecture—validating its aggressive strategy of silicon-infrastructure co-design to improve inference economics. Meanwhile, AWS was credited for rapidly integrating Retrieval-Augmented Generation (RAG) services into its core platform capabilities, setting the global tone for how enterprises construct secure, proprietary knowledge bases.

Regarding peer engagement and market mindshare, metrics tracked in early 2026 indicate a significant shift: Google Vertex AI commands roughly 8.2% of the AI Development Platform mindshare, compared to Amazon SageMaker’s 3.3%. This data strongly reflects Google’s highly successful, aggressive push into developer mindshare through the ubiquitous integration of the Gemini ecosystem, its focus on streamlined usability, and its appeal to organizations seeking rapid GenAI deployment without heavy infrastructure management.

Strategic Recommendations

The decision between AWS SageMaker and Google Vertex AI is rarely determined by the presence or absence of a single technical feature. Rather, it is a foundational architectural commitment that must perfectly align with an organization’s existing cloud footprint, engineering culture, and long-term data strategy.

The overwhelming weight of evidence suggests that AWS SageMaker remains the premier, unmatched choice for organizations deeply entrenched in the AWS ecosystem. It provides extraordinary infrastructural granularity, highly robust military-grade network isolation, and supreme cost-optimization potential through advanced features like Multi-Model Endpoints, spot instance utilization, and asynchronous scaling to zero. It is the definitive platform of choice for highly technical, mature MLOps teams that require an expansive, customizable “constructor set” to squeeze every ounce of computational performance and financial efficiency out of their infrastructure.

Conversely, Google Vertex AI is the optimal platform for organizations prioritizing rapid engineering velocity, unified data-to-AI workflows, and seamless synergy with data warehousing. Vertex AI elegantly abstracts operational complexities, allowing data scientists to focus their intellectual capital on model performance rather than the minutiae of Kubernetes cluster management and VPC routing tables. Furthermore, for enterprises betting their future heavily on multimodality, advanced reasoning, and autonomous multi-agent systems, Vertex AI’s native, frictionless integration with the Gemini 3.0 model family and its specialized, highly efficient TPU infrastructure provides an undeniable, market-leading competitive edge.

Ultimately, enterprise technology leaders must honestly evaluate their internal machine learning maturity and operational capabilities. If the objective is to construct a bespoke, highly controlled, and heavily optimized machine learning factory, AWS SageMaker provides the most robust raw materials in the industry. If the goal is to rapidly deploy state-of-the-art Generative AI models and unify data warehousing with predictive analytics under a single, highly intuitive paradigm, Google Vertex AI stands as the superior strategic investment.