Nepali Technical SEO Checklist: A Phased Guide for Websites

Technical SEO Architecture and Implementation Guide for Nepali Websites: A Step-by-Step Phased Methodology

The digital topography of Nepal presents a specific and complex set of environmental conditions for search engine optimization. Unlike homogeneous digital markets where standardized best practices can be universally applied, the Nepalese internet ecosystem is defined by a trilingual search behavior encompassing English, Devanagari script, and Romanized phonetic Nepali. This linguistic diversity is compounded by infrastructural variables, including mobile-dominated internet access, fluctuating network latencies, lower-tier device hardware capabilities, and a unique national domain registry system. Consequently, a generalized technical SEO checklist is insufficient for this market. Establishing and sustaining organic search visibility in this environment requires a nuanced, architecturally sound approach that bridges global algorithmic requirements with local infrastructural realities.

This comprehensive report functions as an exhaustive, step-by-step technical SEO framework tailored specifically for websites operating within or targeting the Nepalese market. The methodology is structured into sequential phases of implementation, dissecting baseline telemetry, server-level configurations, crawlability controls, multilingual architectures, Core Web Vitals engineering for low-end devices, and highly localized structured data implementations.

Phase 1: Pre-Audit Preparations and Telemetry Diagnostics

Before implementing structural changes to a website’s architecture, a foundational layer of telemetry must be established. The primary objective of the pre-audit phase is to ensure that accurate, granular data is actively being recorded regarding how search engine crawlers and human users interact with the domain. Attempting technical optimization without baseline metrics renders the entire process subjective and prone to critical errors.

The immediate requirement is the integration and configuration of Google Search Console (GSC) and a comprehensive analytics platform such as Google Analytics 4 (GA4). GSC serves as the primary diagnostic conduit between the webmaster and the search engine, providing direct feedback on indexation status, manual actions, and Core Web Vitals field data. Concurrently, third-party crawling software must be deployed to simulate how an autonomous bot traverses the website architecture. This simulation reveals hidden structural defects, including infinite redirect loops, isolated orphan pages, and dynamic parameter traps that standard analytics platforms cannot detect.

Furthermore, enterprise-level technical SEO in Nepal necessitates the implementation of server log file analysis. While crawling software provides a theoretical map of the website, log file analysis provides empirical evidence of Googlebot’s exact behavior. By parsing the server logs, administrators can identify exactly which URLs are consuming the site’s “crawl budget,” pinpointing whether the search engine is wasting computational resources on low-value parameterized URLs while ignoring critical service pages or recently published news articles. This diagnostic phase establishes the quantitative baseline against which all subsequent technical interventions will be measured.
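The log analysis described above can be sketched in a few lines of Python. This is a minimal illustration, assuming the server writes the common "combined" access log format; the sample log lines, IP addresses, and paths are fabricated for the example. Matching on the user-agent string alone is only a first pass, since that string can be spoofed and a production audit should also verify the requesting IP via reverse DNS.

```python
import re
from collections import Counter
from urllib.parse import urlsplit

# Request, status, and user-agent fields of a combined-format access log line.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) HTTP/[\d.]+" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_crawl_summary(lines):
    """Count Googlebot hits per path and the share spent on parameterized URLs."""
    hits = Counter()
    parameterized = 0
    for line in lines:
        match = LOG_LINE.search(line)
        # User-agent match only; the string can be spoofed (see lead-in).
        if not match or "Googlebot" not in match.group("agent"):
            continue
        path = match.group("path")
        hits[path] += 1
        if urlsplit(path).query:  # any "?key=value" part counts as parameterized
            parameterized += 1
    total = sum(hits.values())
    share = parameterized / total if total else 0.0
    return hits, share


GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
BROWSER_UA = "Mozilla/5.0 (Linux; Android 12) Chrome/120.0 Mobile"

# Fabricated sample lines using documentation-range IP addresses.
SAMPLE_LOG = [
    f'192.0.2.1 - - [10/Jan/2025:06:12:01 +0545] "GET /shoes?sort=price HTTP/1.1" 200 5120 "-" "{GOOGLEBOT_UA}"',
    f'192.0.2.1 - - [10/Jan/2025:06:12:04 +0545] "GET /shoes?sort=price&color=red HTTP/1.1" 200 5098 "-" "{GOOGLEBOT_UA}"',
    f'192.0.2.1 - - [10/Jan/2025:06:13:10 +0545] "GET /services/ HTTP/1.1" 200 8021 "-" "{GOOGLEBOT_UA}"',
    f'192.0.2.77 - - [10/Jan/2025:06:13:15 +0545] "GET /services/ HTTP/1.1" 200 8021 "-" "{BROWSER_UA}"',
]

hits, parameterized_share = googlebot_crawl_summary(SAMPLE_LOG)
print(hits.most_common(3), round(parameterized_share, 2))
```

A high parameterized share in a report like this is the signal to look for: it suggests crawl budget is going to filter and sort URLs rather than to service pages or fresh articles.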

Phase 2: Domain Hierarchy, TLD Selection, and DNS Administration

The foundational layer of technical SEO dictates how efficiently search engine crawlers can perform initial DNS lookups and establish secure connections to the host server. In Nepal, the choice of Top-Level Domain (TLD) and the administration of Domain Name System (DNS) protocols carry significant structural implications.

Analyzing the TLD Strategy

A prevalent misconception within the industry is that the age of a domain or the length of its registration period serves as a direct algorithmic ranking factor. Search engines have explicitly confirmed that purchasing an aged domain or registering a domain for a decade provides no inherent SEO advantage. Furthermore, utilizing an exact-match keyword domain (e.g., best-trekking-agency-nepal.com.np) yields negligible algorithmic benefit under modern semantic search algorithms.

However, the selection between a generic global TLD (such as .com) and a country-code TLD (such as .com.np) is functionally critical. A .com.np domain provides a massive, explicit geo-targeting signal to search engine algorithms, inherently associating the domain’s content with queries originating from IP addresses within Nepal. For businesses strictly serving local clientele, the .com.np extension is superior.

Conversely, for Nepalese enterprises targeting a global audience (such as international tourism agencies, export businesses, or multinational SaaS platforms), a dual-domain strategy is structurally optimal. Securing both the .com and .com.np variations prevents brand dilution and allows for discrete routing architectures. In this configuration, the .com domain can be engineered for global traffic routing, while the .com.np variation targets local organic search. To execute this properly, strict canonicalization or 301 redirects must be enforced wherever the two domains would otherwise serve identical content. Search engines do not apply a formal penalty for duplication, but they filter the duplicates down to a single indexed version and split ranking signals between the two domains, which dilutes overall domain authority.

DNS Modification and FQDN Constraints

The administration of .np domains by Mercantile Communications involves highly specific technical constraints that severely impact site migrations, server upgrades, and CDN deployments. Unlike global registrars (e.g., Namecheap, GoDaddy) that offer instantaneous, automated DNS propagation, the .np registry system requires manual administrative approval for any Domain Name Server (DNS) modifications. Requests submitted via the portal are generally processed only twice a day.

From a technical SEO perspective, this manual bottleneck dictates that any server migration or DNS shift must be meticulously planned to account for a 24-to-48-hour propagation window. If a webmaster switches off the origin server before the .com.np DNS update is manually approved and globally propagated, the website will experience prolonged downtime. Search engine bots attempting to crawl the site during this window will encounter 5xx (Server Error) or DNS lookup failures. If these failures persist, the search engine will rapidly throttle its crawl rate to avoid stressing what it perceives to be a failing server, potentially leading to the temporary de-indexation of high-value pages.

Furthermore, the registry strictly requires Fully Qualified Domain Names (FQDN) for nameserver configuration (e.g., ns1.hostingprovider.com) and explicitly rejects the use of bare IP addresses. Technical teams must ensure that their hosting provider or CDN supplies FQDN nameservers prior to initiating the modification request; otherwise, the request will be rejected, further extending the period of instability.
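A pre-flight check for the FQDN requirement can be sketched as follows. This is only a syntactic sanity check under general DNS hostname rules; the function name is hypothetical and the registry's own validation logic is not documented here, so passing this check does not guarantee the request will be approved.

```python
import ipaddress
import re

# One DNS label: 1-63 letters, digits, or hyphens, with no leading/trailing hyphen.
LABEL = re.compile(r"^(?!-)[A-Za-z0-9-]{1,63}(?<!-)$")


def is_fqdn_nameserver(value):
    """Return True if value looks like a hostname such as ns1.hostingprovider.com."""
    candidate = value.strip().rstrip(".")  # tolerate a trailing root dot
    try:
        ipaddress.ip_address(candidate)
        return False  # bare IPv4 or IPv6 address, which the registry rejects
    except ValueError:
        pass
    labels = candidate.split(".")
    if len(labels) < 2 or len(candidate) > 253:
        return False  # single-label names are not fully qualified
    if labels[-1].isdigit():
        return False  # an all-numeric final label is not a valid TLD
    return all(LABEL.match(label) for label in labels)


for entry in ["ns1.hostingprovider.com", "192.0.2.10", "2001:db8::1", "localhost"]:
    print(entry, is_fqdn_nameserver(entry))
```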

Phase 3: Server Proximity, Edge Distribution, and TTFB Optimization

Physical Server Location and Latency

Once the DNS lookup succeeds, the browser (or search engine crawler) waits for the server to return the initial HTML document. The time elapsed during this process is measured as Time to First Byte (TTFB). TTFB is a critical baseline metric that influences all subsequent rendering metrics and user experience thresholds.

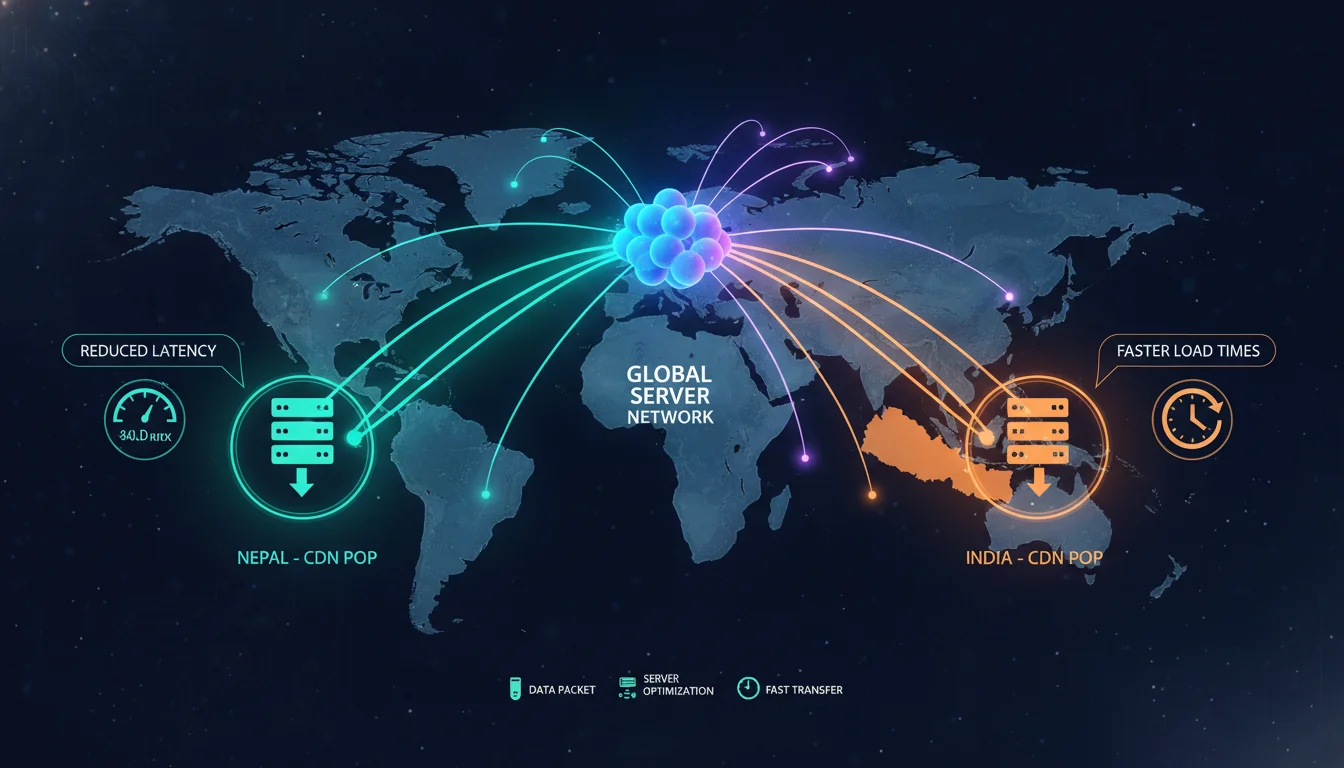

TTFB is heavily dependent on the physical proximity of the hosting server to the end-user and the search engine crawler. Data packets traveling through fiber optic cables are bound by the laws of physics; distance inherently creates latency. For Nepalese websites where the overwhelming majority of the target audience resides within the country, utilizing hosting servers located in the United States or Western Europe introduces unacceptable latency, increasing baseline load times by hundreds of milliseconds before the browser even begins to download assets.

Data indicates that hosting on servers located geographically close to the user base—specifically in India or directly within Nepal—provides the most optimal latency parameters for local organic traffic. A reduction in latency directly translates to faster HTML document retrieval by mobile browsers, which effectively reduces bounce rates. When local hosting is implemented, the server stack itself must be heavily optimized.

Providers must guarantee modern web technologies, including NVMe Solid State Drives (SSDs) for rapid database queries, PHP OPCache to eliminate redundant script compilation, and HTTP/2 or HTTP/3 protocols to allow multiplexed asset downloading.

Table 1: Server location routing strategies based on target audience demographics.

| Target Audience Geography | Recommended Server Location | SEO Impact & Reasoning |
|---|---|---|
| 90% Nepal Traffic | Nepal or India (Regional) | Drastically reduces latency for the primary user base, ensuring fast TTFB and improved local Core Web Vitals scores. |
| Global / Mixed Traffic | USA / Europe with Global CDN | Ensures proximity to Googlebot’s primary crawl locations while serving edge-cached content to international users. |

Content Delivery Network (CDN) Edge Architecture

A critical paradox exists in international SEO: while prioritizing local TTFB for Nepalese users is essential for conversion rates, it must be reconciled with the reality that Googlebot primarily crawls and renders websites from data centers located in the United States. If a site is hosted in Kathmandu without a globally distributed caching layer, Googlebot will experience the same high-latency intercontinental routing delays that local users face when accessing US servers.

A Content Delivery Network (CDN) is the mandatory architectural solution to this paradox. A CDN mitigates the geographical divide by caching static assets (HTML, images, JavaScript, CSS) across a network of global edge nodes. Recent infrastructure deployments have established active, localized CDN Points of Presence (PoPs) directly within the Kathmandu valley.

Table 2: Verified Content Delivery Network Edge Nodes currently operating within Nepal.

| CDN Provider | Verified PoP Locations in Nepal | Cities Served |
|---|---|---|
| Cloudflare | 1 | Kathmandu |
| CDNetworks | 1 | Lalitpur |
| EdgeNext | 1 | Kathmandu |
| Bunny CDN | 1 | Kathmandu |
| Gcore | 1 | Kathmandu |

By routing domain traffic through these specific local nodes, Nepalese users can retrieve heavy multimedia and script assets from servers located mere kilometers away, entirely bypassing congested international internet gateways. Conversely, the CDN’s US-based edge nodes serve the cached HTML payload directly to Googlebot with near-zero latency. This bidirectional optimization satisfies both the local user experience criteria and the crawler efficiency requirements simultaneously.

Phase 4: Core Web Vitals Engineering and Low-End Mobile Optimization

Search engines do not merely evaluate the topical relevance of content; they evaluate the real-world user experience through a strict, uncompromising set of field metrics known as Core Web Vitals (CWV). These metrics act as direct, algorithmic ranking signals.

In Nepal, optimizing for Core Web Vitals is exceptionally challenging. While fixed broadband speeds average an impressive 118.80 Mbps, mobile network speeds—which account for the vast majority of search queries—lag significantly behind, averaging just 14.16 Mbps for downloads and 9.06 Mbps for uploads. Furthermore, a large segment of the population accesses the internet via low-end or mid-range Android devices with limited CPU and RAM capabilities. Consequently, engineering web applications that satisfy Google’s thresholds on slow connections and constrained hardware is a critical technical SEO priority. Current telemetry indicates that up to 78% of websites in Nepal fail basic Core Web Vitals assessments.

Interaction to Next Paint (INP) and SDK Bloat

In March 2024, Google officially retired First Input Delay (FID) as a Core Web Vital and replaced it with Interaction to Next Paint (INP) as the primary metric for interactivity and responsiveness. While FID only measured the input delay of the first interaction on a page, INP is far more rigorous; it measures the latency of all user interactions (clicks, taps, keyboard inputs) throughout the entire lifecycle of the page visit and reports approximately the longest observed delay. An INP of 200 milliseconds or less at the 75th percentile of page loads is required to achieve a passing “Good” rating.

For Nepalese users on low-end smartphones, INP is frequently destroyed by the execution of heavy, unoptimized JavaScript on the browser’s main thread. The main thread handles both parsing code and painting pixels to the screen. If the main thread is blocked by processing a massive script, it cannot paint visual feedback (such as opening an accordion menu or highlighting a clicked button) in response to a user’s tap. This results in perceived unresponsiveness, causing the user to frustration-tap multiple times, further compounding the backlog of tasks and ensuring a severe INP failure.

A highly specific, localized challenge in Nepal involves the integration of domestic payment gateways. E-commerce sites routinely implement third-party JavaScript SDKs from providers like eSewa, Khalti, and Fonepay to facilitate transactions. These SDKs are often notoriously bloated and antiquated, generating massive “Long Tasks” (any script execution exceeding 50 milliseconds) that monopolize the main thread during the initial page load.

To protect the INP metric, technical SEOs and front-end developers must aggressively manage these scripts. Payment gateway SDKs should never be loaded synchronously in the document <head>. Instead, they must be deferred or injected dynamically only when the user explicitly initiates a checkout sequence. Furthermore, non-critical first-party scripts must be scheduled using the requestIdleCallback API, which instructs the browser to execute heavy tasks only when the main thread is completely idle, ensuring the interface remains instantly responsive to user inputs.
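One way to find offending scripts is to audit page templates for external scripts in the <head> that carry neither async nor defer. The sketch below uses Python's standard html.parser; the class name, function name, and the SDK URL in the sample are hypothetical placeholders, not real payment gateway endpoints.

```python
from html.parser import HTMLParser


class HeadScriptAudit(HTMLParser):
    """Collect external <script> tags inside <head> that load synchronously."""

    def __init__(self):
        super().__init__()
        self.in_head = False
        self.blocking = []

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)  # boolean attributes appear with value None
        if tag == "head":
            self.in_head = True
        elif tag == "script" and self.in_head and "src" in attributes:
            is_module = attributes.get("type") == "module"  # modules defer by default
            if not ("async" in attributes or "defer" in attributes or is_module):
                self.blocking.append(attributes["src"])

    def handle_endtag(self, tag):
        if tag == "head":
            self.in_head = False


def find_blocking_head_scripts(html):
    audit = HeadScriptAudit()
    audit.feed(html)
    return audit.blocking


# Hypothetical template; the script URLs are placeholders.
SAMPLE_PAGE = """
<html><head>
  <script src="https://pay.example/sdk.js"></script>
  <script src="/js/analytics.js" defer></script>
  <script src="/js/app.js" type="module"></script>
</head><body><script src="/js/footer.js"></script></body></html>
"""

print(find_blocking_head_scripts(SAMPLE_PAGE))
```

Any URL reported here is a candidate for the defer attribute or for on-demand injection at checkout.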

The 300ms Viewport Tap Delay: Another highly specific INP failure on mobile devices involves an artificial delay imposed by mobile browsers. If a website lacks the correct viewport meta declaration (<meta name="viewport" content="width=device-width, initial-scale=1">) in the HTML template, mobile browsers assume the site is a legacy desktop application. To compensate, the browser will wait 300 milliseconds after a user’s tap to determine if the user intends to double-tap to zoom in on the unoptimized layout. Because the “Good” threshold for INP is 200ms, this artificial 300ms delay guarantees an immediate mathematical failure. Enforcing responsive design and strict viewport metadata eliminates this delay entirely.

Largest Contentful Paint (LCP) Optimization

LCP measures perceived load speed by calculating the time required for the browser to fully render the largest visible element within the initial viewport, typically a hero image or another primary above-the-fold element.

Given that the average mobile load time in Nepal sits at a sluggish 4.5 seconds, achieving a sub-2.5-second LCP on a 14 Mbps mobile connection requires an aggressive, multi-faceted approach.

- Next-Generation Asset Compression: Transmitting legacy image formats like JPEG or PNG over 3G/4G networks consumes excessive bandwidth. Developers must transition to highly compressed, next-generation formats such as WebP or AVIF. Furthermore, the srcset attribute must be utilized to serve responsive, dynamically scaled images based on the specific resolution of the user’s mobile screen, preventing the transmission of a 2000-pixel desktop image to a 400-pixel smartphone display.

- Resource Prioritization and Preloading: The browser must be explicitly instructed on which elements are critical. The specific asset identified as the LCP element must be preloaded using the <link rel="preload"> directive in the HTML <head>. This lets the browser discover and fetch the primary image or font immediately instead of waiting for the standard CSS/JS execution waterfall, drastically reducing LCP time.

- Progressive Enhancement and Critical CSS: Design architecture must prioritize above-the-fold content. The Critical CSS—the absolute minimum styling required to render the initial viewport—should be extracted and inlined directly into the HTML document, while all remaining stylesheets are loaded asynchronously. This prevents the browser from halting the rendering process while waiting for massive, external CSS files to download.

Cumulative Layout Shift (CLS) and Devanagari Typography

CLS measures the visual stability of a webpage by scoring the unexpected layout shifts that occur during the page’s lifespan. A passing CLS score requires maintaining a metric of 0.1 or less. High CLS scores frustrate users, causing them to accidentally click the wrong links or lose their place while reading as elements unexpectedly jump across the screen.

Beyond standard causes of layout shift—such as injecting advertisements without reserved container dimensions or failing to declare exact width and height attributes on image tags—Nepalese websites face a unique CLS vulnerability related to typography.

When a webpage loads, the browser typically renders a lightweight, default system font while simultaneously downloading a heavier, custom web font. When the custom font finishes downloading, the browser swaps the typography.

In the context of the Devanagari script, custom web fonts often possess vastly different x-heights, baseline metrics, and kerning values compared to the Latin-based system fallback fonts. When the swap occurs, the Devanagari text block will violently expand or contract, pushing all surrounding DOM elements out of alignment and triggering a massive layout shift.

To mitigate this, developers must set the font-display descriptor in the @font-face rule: font-display: optional avoids the late swap altogether on slow connections, while font-display: swap keeps text visible but still permits the swap. More importantly, the size-adjust descriptor must be applied to the fallback font’s @font-face rule to normalize its bounding box dimensions to match the metrics of the incoming Devanagari font. This ensures that when the typography is swapped, the physical space occupied by the text remains nearly static, securing a passing CLS score.
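A size-adjust value can be estimated from font metrics. The sketch below uses one common heuristic, the ratio of average glyph width per em between the web font and the fallback; it is not the only approach. The metric numbers and both font names are hypothetical, and real values would need to be read from the actual font files.

```python
def size_adjust_percent(web_avg_width, web_units_per_em,
                        fallback_avg_width, fallback_units_per_em):
    """Estimate size-adjust as the ratio of average glyph width per em."""
    web_ratio = web_avg_width / web_units_per_em
    fallback_ratio = fallback_avg_width / fallback_units_per_em
    return round(web_ratio / fallback_ratio * 100, 2)


def fallback_font_face(family, local_name, percent):
    """Build an @font-face rule that rescales a locally installed fallback font."""
    return (
        "@font-face {\n"
        f'  font-family: "{family}";\n'
        f'  src: local("{local_name}");\n'
        f"  size-adjust: {percent}%;\n"
        "}"
    )


# Hypothetical metrics: the web font averages 550 units on a 1000-unit em,
# the system fallback averages 500 units on a 1000-unit em.
percent = size_adjust_percent(550, 1000, 500, 1000)
css_rule = fallback_font_face("Devanagari Fallback", "System Devanagari Font", percent)
print(css_rule)
```

The generated family name would then be listed after the web font in the font-family stack, so the rescaled fallback occupies roughly the same space until the web font arrives.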

Table 3: Core Web Vitals thresholds and targeted remediation strategies for the Nepalese digital ecosystem.

| Core Web Vital Metric | Target Threshold | Primary Nepalese Optimization Focus |
|---|---|---|
| LCP (Largest Contentful Paint) | ≤ 2.5 seconds | Serve AVIF/WebP formats via local CDNs; implement preload for hero banners; inline critical CSS. |
| INP (Interaction to Next Paint) | ≤ 200 ms | Defer third-party payment SDKs (eSewa/Khalti); utilize requestIdleCallback; enforce mobile viewport metadata. |
| CLS (Cumulative Layout Shift) | ≤ 0.1 | Define explicit dimensions for all media; implement size-adjust for Devanagari font swaps; reserve ad space. |
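The thresholds in Table 3 can be applied to field samples with a small classifier. This is a sketch: the "good" boundaries come from the table, while the "poor" boundaries (4.0 s, 500 ms, 0.25) are taken from Google's published Core Web Vitals ranges rather than from this report. The sample values are invented, and the nearest-rank percentile is a simplifying assumption.

```python
import math

# (good, poor) boundaries: value <= good is "good", value > poor is "poor".
THRESHOLDS = {
    "LCP": (2.5, 4.0),   # seconds
    "INP": (200, 500),   # milliseconds
    "CLS": (0.1, 0.25),  # unitless score
}


def percentile_75(samples):
    """Nearest-rank 75th percentile of a non-empty list of samples."""
    ordered = sorted(samples)
    rank = math.ceil(0.75 * len(ordered))
    return ordered[rank - 1]


def rate(metric, samples):
    """Return the 75th-percentile value and its Core Web Vitals rating."""
    value = percentile_75(samples)
    good, poor = THRESHOLDS[metric]
    if value <= good:
        return value, "good"
    if value <= poor:
        return value, "needs improvement"
    return value, "poor"


# Invented field samples for illustration only.
print(rate("INP", [120, 150, 180, 320]))
print(rate("LCP", [1.9, 2.2, 3.1, 4.8]))
print(rate("CLS", [0.02, 0.05, 0.3, 0.4]))
```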

Phase 5: Trilingual Linguistic Architecture and Hreflang Configuration

NLP Challenges: Devanagari vs. Romanized Script

With global data demonstrating that 76% of online consumers prefer to purchase products from platforms offering information in their native language, deploying a multilingual architecture is a fundamental business necessity. For Nepalese websites, translating content is merely the first step; search engines must be programmatically instructed on how to serve the correct linguistic variation based on the searcher’s geolocation and browser settings.

The implementation of a multilingual structure in Nepal requires understanding how users interact with search interfaces. Users employ three distinct textual formats: standard English, formal Devanagari script, and Romanized phonetic Nepali.

The Romanized script is particularly challenging for Natural Language Processing (NLP) models. Because of the ubiquity of QWERTY keyboards on mobile devices, users frequently type Nepali words using Latin characters (e.g., “Momo-Pasa”). However, Romanized Nepali is highly unstructured and completely lacks standardized orthography. A single word can be phonetically spelled in half a dozen different ways (e.g., “k cha”, “ke chha”, “k chha”), creating extreme keyword fragmentation. Furthermore, the lack of extensive, high-quality, pre-trained datasets for the Devanagari script means algorithmic understanding of context can be fragile.

To command the SERPs (Search Engine Results Pages), keyword research must be approached trilingually. A foundational English keyword must be accurately translated into Devanagari to match formal search behavior, and subsequently mapped to the most statistically probable Romanized phonetic variants. The content strategy must organically weave these variants into the text payload without resorting to keyword stuffing.
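The mapping step can be sketched as a lookup that folds Romanized spelling variants into one canonical key before search volumes are compared. The variant map below is hand-curated from the examples in the text and is purely illustrative; the query counts are invented, and a real map would be built from Search Console query data.

```python
from collections import defaultdict

# Hypothetical, hand-curated map of Romanized variants to one canonical spelling.
VARIANT_TO_CANONICAL = {
    "k cha": "ke chha",
    "ke chha": "ke chha",
    "k chha": "ke chha",
}


def normalize(query):
    """Lowercase and collapse repeated whitespace."""
    return " ".join(query.lower().split())


def group_search_volume(query_counts):
    """Sum counts for all spelling variants under their canonical key."""
    grouped = defaultdict(int)
    for query, count in query_counts.items():
        key = normalize(query)
        grouped[VARIANT_TO_CANONICAL.get(key, key)] += count
    return dict(grouped)


# Invented query counts for illustration.
volumes = group_search_volume({"K cha": 40, "ke  chha": 25, "k chha": 10, "momo": 5})
print(volumes)
```

Grouping in this way shows the combined demand behind a fragmented phrase, which no single variant reveals on its own.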

URL Taxonomy and Encoding Protocols

The semantic structure of the URL slug itself serves as a vital relevance signal. When constructing localized pages, webmasters must carefully choose their URL encoding strategy:

- English URLs: These remain the safest, most robust, and most universally parsed format. They are immune to encoding errors and are easily understood by global crawlers, sharing platforms, and email clients.

- Devanagari URLs: While search engines technically support UTF-8 encoded URLs, Devanagari characters are immediately converted into percent-encoding when a user copies and pastes the link outside of the browser environment. For example, a clean Devanagari URL transforms into a string resembling %E0%A4%A8%E0%A5%87%E0%A4%AA%E0%A4%BE%E0%A4%B2. This creates exceptionally long, visually aggressive links that severely degrade user trust and reduce click-through rates (CTR) on social media platforms.

- Romanized Nepali URLs: These slugs provide a strong phonetic search signal without triggering percent-encoding issues, as they utilize the Latin alphabet.

The most robust architectural strategy is to utilize clean, concise English slugs or strictly standardized Romanized slugs for the URL taxonomy, while relying on the on-page HTML elements to carry the Devanagari content and its keyword signals.
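The percent-encoding effect described for Devanagari URLs is easy to reproduce with Python's standard library. The slug below is the five-character word that corresponds to the encoded string quoted above, written with Unicode escapes so the code points are unambiguous.

```python
from urllib.parse import quote, unquote

# U+0928 U+0947 U+092A U+093E U+0932, a five-character Devanagari slug.
slug = "\u0928\u0947\u092a\u093e\u0932"

# Each Devanagari character is 3 bytes in UTF-8, and each byte becomes "%XX".
encoded = quote(slug)

print(len(slug), "characters ->", len(encoded), "characters when percent-encoded")
print(encoded)
print(unquote(encoded) == slug)
```

Every Devanagari character expands to nine characters once encoded, which is why even short slugs produce long links when shared.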

Designing the Hreflang Tagging Matrix

The hreflang attribute is a highly complex piece of HTML metadata that acts as a multilingual GPS, instructing search engines on which specific language or regional version of a page to serve to a specific user. Incorrect implementations are ubiquitous and frequently result in catastrophic indexation conflicts, cross-domain canonicalization errors, and the display of incorrect currency or shipping data to international users.

Hreflang tags must adhere strictly to ISO 639-1 standards for language codes and ISO 3166-1 standards for country codes. The following architectural constraints define a mathematically sound implementation for a trilingual Nepalese domain:

- Devanagari Content: Must utilize the standard ISO 639-1 code ne. If the business explicitly targets Nepali speakers residing within the borders of Nepal (and offers specific domestic pricing in NPR), the tag should be formatted with the regional identifier as hreflang="ne-NP". If the content is broadly applicable to the global Nepali diaspora, the general ne is preferred.

- Romanized Nepali Content: Because Romanized Nepali utilizes the Latin script and entirely lacks an official ISO 639-1 code, technical consensus dictates treating these pages as English at the programmatic level. The HTML declaration should use lang="en", while the actual text payload is optimized for phonetic search intent.

- English Content: Standardized as en. If the English content is explicitly targeting expatriates, tourists, or international B2B clients in specific regions, en-US or en-GB may be utilized.

- The Default Fallback: The x-default value must be declared on every page. This tells the search engine which version of the page to serve when a user’s browser language setting does not match any of the specifically declared hreflang variants, acting as a global safety net.

Architectural Integration and Bidirectional Linking

Hreflang tags can be injected into the website architecture via three distinct methods: the HTML <head>, HTTP headers, or the XML Sitemap. For small business websites, injecting the code directly into the <head> is sufficient. However, for massive Nepalese e-commerce platforms or national news portals managing tens of thousands of dynamic URLs, embedding massive hreflang clusters in the <head> becomes computationally heavy and negatively impacts the TTFB. In these enterprise scenarios, centralizing the hreflang logic within the XML sitemap is the superior architectural choice.

A non-negotiable rule of hreflang architecture is the strict requirement for bidirectional (reciprocal) linking. If the English version of a page points to the Nepali translation, the Nepali translation must point back to the exact English URL. Furthermore, every single page must contain a self-referencing hreflang tag pointing to its own URL. A single failure in this bidirectional matrix—an orphaned link or an asymmetric tag—causes search engines to instantly invalidate the relationship, ignoring the directives entirely and rendering the multilingual strategy void.

HTML

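<!-- Placeholder URLs; substitute the real address of each language version. -->
<link rel="alternate" hreflang="ne-NP" href="https://domain.com.np/ne/" />
<link rel="alternate" hreflang="en" href="https://domain.com.np/en/" />
<link rel="alternate" hreflang="x-default" href="https://domain.com.np/" />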
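The reciprocity rules above lend themselves to an automated check. The following sketch assumes the hreflang annotations have already been extracted into a dictionary keyed by page URL; the function name and the URLs are hypothetical placeholders.

```python
def find_hreflang_errors(pages):
    """pages maps each URL to its declared {hreflang: href} annotations."""
    errors = []
    for url, annotations in pages.items():
        if url not in annotations.values():
            errors.append(f"{url}: missing self-reference")
        if "x-default" not in annotations:
            errors.append(f"{url}: missing x-default")
        for lang, target in annotations.items():
            if target == url:
                continue  # self-reference needs no return link
            # The target page must list this URL among its own annotations.
            if url not in pages.get(target, {}).values():
                errors.append(f"{url}: {target} ({lang}) does not link back")
    return errors


EN = "https://domain.com.np/en/"  # placeholder URLs
NE = "https://domain.com.np/ne/"

valid_cluster = {
    EN: {"en": EN, "ne-NP": NE, "x-default": EN},
    NE: {"en": EN, "ne-NP": NE, "x-default": EN},
}
broken_cluster = {
    EN: {"en": EN, "ne-NP": NE, "x-default": EN},
    NE: {"ne-NP": NE},  # no return link to the English page, no x-default
}

print(find_hreflang_errors(valid_cluster))
print(find_hreflang_errors(broken_cluster))
```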

Phase 6: Crawlability Controls, Indexation, and URL Architecture

Search engines operate on finite computational resources, allocating a specific “crawl budget” to every domain on the internet. Technical SEO must systematically remove all architectural friction to ensure crawlers discover, parse, and index the most valuable content without squandering resources on dead ends, infinite loops, or duplicated parameters.

Robots.txt and XML Sitemap Optimization

The robots.txt file acts as the primary gatekeeper for crawler access, dictating which directories bots are permitted to scan and which are strictly forbidden. A shockingly common technical failure among Nepalese web deployments is the accidental retention of developer directives post-migration. Developers frequently use Disallow: / to block search engines while a site is on a staging server. Forgetting to remove this single line of code upon pushing the site live effectively blinds search engines to the entire domain, resulting in catastrophic de-indexation. The robots.txt must explicitly allow access to critical rendering assets, including CSS and JavaScript directories, to allow the crawler to properly render the Document Object Model (DOM) and evaluate mobile usability.
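A leftover staging directive can be caught before launch with Python's built-in urllib.robotparser. This is a sketch with hypothetical file contents and URLs. Note that Python's parser applies rules in file order, whereas Google uses the most specific matching rule, so results can differ on files with overlapping Allow and Disallow lines.

```python
from urllib.robotparser import RobotFileParser


def is_crawlable(robots_txt, url, user_agent="Googlebot"):
    """Check whether the given robots.txt text permits user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# Hypothetical file contents: a forgotten staging block versus a sane live file.
STAGING_LEFTOVER = "User-agent: *\nDisallow: /\n"
LIVE = "User-agent: *\nDisallow: /admin/\nAllow: /\n"

# Hypothetical URLs that should always stay crawlable on the live site.
CRITICAL_URLS = [
    "https://domain.com.np/",
    "https://domain.com.np/assets/css/site.css",
    "https://domain.com.np/assets/js/app.js",
]

for label, content in [("staging leftover", STAGING_LEFTOVER), ("live", LIVE)]:
    blocked = [url for url in CRITICAL_URLS if not is_crawlable(content, url)]
    print(label, "blocks:", blocked)
```

Running a check like this in the deployment pipeline turns a silent de-indexation risk into a failed build.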

The XML Sitemap serves as the architectural blueprint for the search engine. It must be dynamically generated, mathematically precise, and actively submitted via Google Search Console. A validated sitemap must only contain canonical URLs that return a strict HTTP 200 (OK) status code. The inclusion of 404 (Not Found) or 301 (Redirect) URLs inside the sitemap signals to the crawler that the webmaster’s internal mapping is broken, reducing overall trust. Furthermore, technical teams must audit for “orphan pages”: URLs that exist within the XML sitemap but lack any internal incoming links from the website’s navigation or content. Crawlers rely heavily on contextual internal linking to assign topical relevance and PageRank; orphan pages are therefore viewed as low-value anomalies.
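These sitemap rules can be expressed as a simple audit over crawl output. The sketch assumes a crawler has already recorded the status code, declared canonical, and internal inlink count for each sitemap URL; the CrawledUrl record and the sample data are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class CrawledUrl:
    url: str        # URL as listed in the sitemap
    status: int     # HTTP status returned when fetched
    canonical: str  # rel="canonical" target declared on the page
    inlinks: int    # number of internal links pointing at the URL


def audit_sitemap(entries):
    """Return (url, problem) pairs for entries that do not belong in the sitemap."""
    issues = []
    for entry in entries:
        if entry.status != 200:
            issues.append((entry.url, f"returns HTTP {entry.status}"))
        elif entry.canonical != entry.url:
            issues.append((entry.url, "not the canonical URL"))
        elif entry.inlinks == 0:
            issues.append((entry.url, "orphan page (no internal links)"))
    return issues


BASE = "https://domain.com.np"  # placeholder domain
SAMPLE_ENTRIES = [
    CrawledUrl(f"{BASE}/", 200, f"{BASE}/", 12),
    CrawledUrl(f"{BASE}/old-page", 301, f"{BASE}/old-page", 3),
    CrawledUrl(f"{BASE}/shoes?sort=price", 200, f"{BASE}/shoes", 5),
    CrawledUrl(f"{BASE}/landing", 200, f"{BASE}/landing", 0),
]

sitemap_issues = audit_sitemap(SAMPLE_ENTRIES)
for url, problem in sitemap_issues:
    print(url, "->", problem)
```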

Canonicalization and Duplicate Content Remediation

Duplicate content issues routinely plague locally developed Content Management Systems (CMS) and highly parameterized e-commerce platforms in Nepal. Dynamic parameterized URLs (generated by sorting filters, session IDs, tracking tags, or pagination arrays) create millions of theoretical URL combinations. For example, domain.com.np/category/shoes?color=red&sort=price and domain.com.np/shoes-red may display the exact same product array, but search engines view them as unique pages competing against each other. This rapidly exhausts crawl budgets and dilutes ranking signals.

The rel="canonical" tag must be meticulously deployed across the entire domain to establish the master version of every URL. If multiple URLs serve identical or near-identical content, the canonical tag must uniformly point to the preferred, authoritative architectural node. Furthermore, strict server-level redirect protocols must be established to ensure that the HTTP and HTTPS versions and the www and non-www versions of the site all resolve to a single, secure canonical destination. If a website answers on more than one of these variants, search engines can index the same content under multiple URLs, splitting ranking signals and triggering duplicate content suppression.
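The normalization logic behind a canonical URL can be sketched as a pure function: force HTTPS, force one hostname, drop tracking parameters, and sort what remains. The preferred host and the list of tracking parameters are assumptions for illustration; the function assumes a single-host site and each deployment would choose its own values.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed list of parameters that never change page content.
TRACKING_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "fbclid", "gclid", "sessionid"}


def canonical_url(url, preferred_host="www.domain.com.np"):
    """Normalize scheme, host, and query string to one canonical form."""
    parts = urlsplit(url)
    query = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in TRACKING_PARAMS
    ]
    query.sort()  # stable parameter order so equivalent URLs compare equal
    path = parts.path or "/"
    return urlunsplit(("https", preferred_host, path, urlencode(query), ""))


for variant in [
    "http://domain.com.np/shoes?utm_source=fb&color=red",
    "https://www.domain.com.np/shoes?color=red&gclid=abc",
    "https://www.domain.com.np",
]:
    print(variant, "->", canonical_url(variant))
```

The same mapping can drive both the server's 301 rules and the value emitted in each page's canonical tag, which keeps the two from drifting apart.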

Phase 7: Local SEO Ontology and Geo-Spatial Schema Markup

For businesses heavily reliant on regional foot traffic, localized service delivery, or proximity-based queries (e.g., “best momo in Kathmandu,” “laptop repair in Chitwan,” “travel agency near Thamel”), establishing absolute dominance in the Google Local Pack is paramount. While claiming and optimizing a Google Business Profile (GBP) is the absolute baseline, injecting highly structured semantic data via JSON-LD Schema markup is the advanced engineering required to mathematically connect a digital asset to a physical geographic coordinate.

Entity Declaration via LocalBusiness Sub-types

Search engines do not read text in the human sense; they parse data to establish “entities.” Schema markup translates ambiguous, human-readable HTML text into deterministic, machine-readable JSON-LD code. The LocalBusiness schema type is a specific subtype of both Organization and Place, meaning it dynamically inherits properties that define both corporate identity and physical geographic reality.

A highly optimized implementation must transcend basic markup to include hyper-specific schema sub-types. Rather than deploying a generic LocalBusiness tag, a trekking agency in Nepal should utilize the TravelAgency schema, a hospital should use Hospital (or MedicalClinic for a clinic), and a restaurant in Thamel should utilize Restaurant, the more specific sub-type of FoodEstablishment. This exact precision provides the crawler with immediate, unambiguous context.

Structuring Nepalese Postal Addresses and Geolocation

The primary challenge of executing local SEO in Nepal is the lack of a standardized, globally recognized street addressing system. Many local addresses rely on landmark-based navigation, informal Tole names, or non-sequential Ward numbering rather than deterministic street numbers and zip codes. Consequently, standard schema deployments utilizing Western address variables often fail validation or deeply confuse geographic mapping algorithms.

To construct a valid, highly accurate PostalAddress schema for a Nepalese entity, the following regional mapping variables must be adhered to:

Table 4: Semantic mapping of complex Nepalese address parameters to global Schema.org requirements.

| Schema Property | Expected JSON Type | Nepalese Context / Mapping Strategy |
|---|---|---|
| addressCountry | Text | Must be standardized as “NP” or “NPL” (ISO 3166-1 alpha-2/3). |
| addressRegion | Text | The official Province name (e.g., Bagmati, Gandaki, Lumbini). |
| addressLocality | Text | The District, Metropolitan City, or Municipality (e.g., Kathmandu, Lalitpur, Pokhara). |
| streetAddress | Text | The specific street, Tole, Marg, or Ward number (e.g., “Ward No. 4, Pashupati Sadak”, “Sundhara Marg”). |
| postalCode | Text | The specific 5-digit postal code assigned by Nepal Post (e.g., 44600 for Kathmandu Metropolitan). |

Because the streetAddress input is often vague or unmappable by automated systems, relying solely on text-based postal schemas is highly risky. The data must be firmly anchored with exact mathematical GeoCoordinates.

The JSON-LD schema must include the geo property, defining both latitude and longitude to a minimum precision of 5 decimal places to ensure pinpoint accuracy. However, because third-party users can suggest edits to Google Maps pins, potentially shifting a business’s displayed coordinates, the hasMap property should also be explicitly defined. The hasMap property points directly to the Google Maps URL associated with the verified Google Business Profile, giving search engines an explicit, machine-readable link between the website’s markup and the authoritative map listing.
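Putting the address mapping, geo coordinates, and hasMap together, a JSON-LD payload might be generated as follows. Everything in the sample is a placeholder: the business name, the coordinates, and the map URL are invented for illustration, and only the property names follow Schema.org.

```python
import json


def local_business_jsonld(name, schema_type, address, latitude, longitude, map_url):
    """Build a Schema.org LocalBusiness-style dictionary ready for JSON-LD output."""
    return {
        "@context": "https://schema.org",
        "@type": schema_type,
        "name": name,
        "address": {"@type": "PostalAddress", **address},
        "geo": {
            "@type": "GeoCoordinates",
            "latitude": round(latitude, 5),   # five decimal places, as recommended
            "longitude": round(longitude, 5),
        },
        "hasMap": map_url,
    }


# Placeholder business data, not a real listing.
payload = local_business_jsonld(
    name="Example Trekking Agency",
    schema_type="TravelAgency",
    address={
        "streetAddress": "Ward No. 4, Pashupati Sadak",
        "addressLocality": "Kathmandu",
        "addressRegion": "Bagmati",
        "postalCode": "44600",
        "addressCountry": "NP",
    },
    latitude=27.7150001,
    longitude=85.3120004,
    map_url="https://www.google.com/maps?cid=PLACEHOLDER",
)

# ensure_ascii=False keeps any Devanagari text readable in the output.
script_tag = (
    '<script type="application/ld+json">\n'
    + json.dumps(payload, ensure_ascii=False, indent=2)
    + "\n</script>"
)
print(script_tag)
```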

Semantic Validation, Citations, and Local Consistency

The schema payload should also include the sameAs property, linking the website to verified social media profiles (Facebook, Instagram) and prominent local directory listings to establish a cohesive Knowledge Graph entity. In the local SEO ecosystem, the consistency of a business’s Name, Address, and Phone Number (NAP) across third-party directories like Yellow Pages Nepal, specialized local portals, and the website’s own schema is widely regarded as a core trust signal. Divergent NAP data makes it harder for a search engine to reconcile the listings into a single entity, which can suppress local rankings.

Once the JSON-LD script is added to the HTML (via direct header template inclusion or Google Tag Manager, rather than relying on heavyweight plugins), it must be verified using Google’s Rich Results Test and the independent Schema Markup Validator. Markup injected through a tag manager exists only after JavaScript runs, so it should be checked against the rendered page rather than the raw source. Validation confirms that the payload is structurally error-free and eligible for enhanced SERP features, such as star ratings, price ranges, and integrated maps; it does not guarantee that those features will be displayed.
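NAP consistency can be audited mechanically once listings are collected. The sketch below assumes the listings have already been gathered by hand or exported from a citation tool; the business data, the directory labels, and the normalization rules (case folding, punctuation stripping, removing a leading +977 country code and trunk zero) are illustrative assumptions, not a standard.

```python
import re


def normalize_nap(name: str, address: str, phone: str) -> tuple:
    """Reduce a NAP triple to a comparable canonical form."""
    def clean(text: str) -> str:
        # Collapse whitespace and common separators; keeps Devanagari intact.
        return re.sub(r"[\s,.\-]+", " ", text.casefold()).strip()

    digits = re.sub(r"\D", "", phone)
    # Assumption: only an explicit +977 prefix is treated as a country code.
    if phone.strip().startswith("+977"):
        digits = digits[3:]
    digits = digits.lstrip("0")  # drop the domestic trunk prefix
    return (clean(name), clean(address), digits)


def find_nap_mismatches(reference: tuple, listings: dict) -> list:
    """Return the listing sources whose NAP differs from the reference."""
    expected = normalize_nap(*reference)
    return [
        source for source, nap in listings.items()
        if normalize_nap(*nap) != expected
    ]


# Hypothetical data for illustration only.
reference = ("Himal Cafe", "Ward No. 4, Pashupati Sadak, Kathmandu", "+977-1-4412345")
listings = {
    "directory_a": ("Himal Cafe", "Ward No. 4 Pashupati Sadak Kathmandu", "01-4412345"),
    "directory_b": ("Himal Cafe & Bar", "Ward No. 4, Pashupati Sadak, Kathmandu", "01-4412345"),
}
print(find_nap_mismatches(reference, listings))  # ['directory_b']
```

Formatting differences alone (punctuation, international versus domestic phone format) are tolerated, while a changed business name is flagged.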
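Before submitting a page to the external validators, a local pre-check can catch the most basic failures. The following sketch uses only the Python standard library to pull JSON-LD blocks out of an HTML string and confirm that they parse; the `REQUIRED_KEYS` list is this guide's own checklist (name, address, geo), an assumption rather than Google's official list of required properties, and the check does not replace the Rich Results Test.

```python
import json
from html.parser import HTMLParser

REQUIRED_KEYS = ("name", "address", "geo")  # local checklist, an assumption


class JsonLdExtractor(HTMLParser):
    """Collect the raw text of every <script type="application/ld+json">."""

    def __init__(self):
        super().__init__()
        self._inside = False
        self._buffer = []
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._inside = True
            self._buffer = []

    def handle_data(self, data):
        if self._inside:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._inside:
            self.blocks.append("".join(self._buffer))
            self._inside = False


def precheck_jsonld(html: str) -> list:
    """Return a list of human-readable problems found in the page's JSON-LD."""
    parser = JsonLdExtractor()
    parser.feed(html)
    if not parser.blocks:
        return ["no JSON-LD block found"]
    problems = []
    for raw in parser.blocks:
        try:
            data = json.loads(raw)
        except json.JSONDecodeError as exc:
            problems.append(f"invalid JSON: {exc.msg}")
            continue
        if isinstance(data, dict):
            problems += [f"missing {key}" for key in REQUIRED_KEYS if key not in data]
    return problems


page = (
    '<html><head><script type="application/ld+json">'
    '{"@type": "LocalBusiness", "name": "Example Cafe", "address": {}}'
    "</script></head></html>"
)
print(precheck_jsonld(page))  # ['missing geo']
```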

Phase 8: Security Audits and Resolving Technical Decay

The final phase of the technical SEO implementation involves continuous diagnostic auditing to prevent entropy and technical decay. Websites are dynamic environments; as content is updated, plugins are modified, and servers are patched, technical errors inevitably accumulate, creating friction that actively suppresses organic visibility.

Security Protocols and Mixed Content Architecture

Google explicitly prioritizes secure websites, utilizing HTTPS as a lightweight algorithmic ranking factor and a critical component of the overarching Page Experience signal. A widespread error within the Nepalese web ecosystem is the incomplete or flawed implementation of SSL certificates.

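While a TLS (SSL) certificate may be successfully installed on the server, websites frequently load legacy HTTP assets (such as old images, embedded iframes, or third-party tracking scripts) within the secure HTTPS environment. This creates “Mixed Content”: modern browsers such as Chrome block or automatically upgrade the insecure requests and may downgrade the page’s security indicator, which breaks page elements and erodes user trust. The durable fix is to rewrite the asset URLs themselves: global search-and-replace database queries should be executed so that all internal asset URIs resolve exclusively via HTTPS, and third-party embeds should be updated to HTTPS sources. A Content-Security-Policy: upgrade-insecure-requests header can serve as a safety net for references that were missed. Separately, an HTTP Strict Transport Security (HSTS) header should be deployed via the .htaccess or Nginx configuration file so that returning browsers always connect to the domain itself over HTTPS; HSTS applies per host and does not by itself repair insecure references to other domains.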
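Insecure asset references can be located with a simple scan of the page source. The sketch below uses the standard-library HTML parser and flags only subresource attributes (images, scripts, iframes, stylesheets, media), since an ordinary `<a href>` pointing at an HTTP page is a navigation link rather than mixed content. The tag-to-attribute table and the sample markup are assumptions for illustration, and references created by CSS or JavaScript at runtime are not covered.

```python
from html.parser import HTMLParser

# Tags that fetch a subresource, and the attribute holding its URL.
SUBRESOURCE_ATTRS = {
    "img": "src", "script": "src", "iframe": "src", "link": "href",
    "audio": "src", "video": "src", "source": "src",
    "embed": "src", "object": "data",
}


class MixedContentFinder(HTMLParser):
    """Record (tag, url) pairs for subresources requested over plain HTTP."""

    def __init__(self):
        super().__init__()
        self.insecure = []

    def handle_starttag(self, tag, attrs):
        attr_name = SUBRESOURCE_ATTRS.get(tag)
        if attr_name is None:
            return
        attributes = dict(attrs)
        # Only stylesheet links load a resource; canonical links do not.
        if tag == "link" and "stylesheet" not in (attributes.get("rel") or "").lower():
            return
        url = attributes.get(attr_name) or ""
        if url.lower().startswith("http://"):
            self.insecure.append((tag, url))


def find_mixed_content(html: str) -> list:
    finder = MixedContentFinder()
    finder.feed(html)
    return finder.insecure


sample = """
<img src="http://example.com/old-banner.jpg">
<script src="https://example.com/app.js"></script>
<a href="http://example.com/about">About</a>
<link rel="stylesheet" href="http://example.com/legacy.css">
"""
for tag, url in find_mixed_content(sample):
    print(f"insecure <{tag}>: {url}")
```

Running the scan across a crawl export gives a concrete list of URLs to feed into the database search-and-replace step.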

Managing Link Decay, 404s, and Redirect Loops

Internal link decay is practically inevitable as a business evolves. Service pages are consolidated, blog posts are updated, and products go out of stock. Left unchecked, this creates an ecosystem of broken internal links (returning HTTP 404 Not Found errors). When a crawler encounters a 404 error, no link equity (PageRank) flows through that link, creating a dead end for the crawler and weakening the internal linking that supports the domain’s topical authority.

Webmasters should run automated crawling software on a regular schedule to detect these 4xx status codes. When a URL is permanently moved, deleted, or consolidated, a 1-to-1 301 (Permanent) redirect must be established at the server level, pointing the legacy URL to the most topically relevant active page. However, administrators must guard against “Redirect Chains” (e.g., Page A redirects to Page B, which redirects to Page C) and infinite “Redirect Loops”. Crawlers follow only a limited number of consecutive hops before abandoning a sequence (Google’s documentation states that Googlebot follows up to 10), and every additional hop adds latency and wastes crawl budget, so each legacy URL should redirect to its final destination in a single hop. By maintaining a clean 301 architecture, technical SEOs ensure that historical link equity is transferred to new assets with minimal loss.
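Given a crawl export, broken internal links can be listed together with the pages that contain them, which is what an editor needs in order to fix them. The sketch assumes two hypothetical inputs: `link_graph`, mapping each page to the internal URLs it links to, and `status_codes`, mapping each URL to the HTTP status the crawler received. Both structures and the sample paths are invented for illustration.

```python
def find_broken_internal_links(link_graph: dict, status_codes: dict) -> list:
    """Return (source_page, target_url, status) for links hitting a 4xx."""
    broken = []
    for source, targets in link_graph.items():
        for target in targets:
            status = status_codes.get(target)
            # URLs the crawler never fetched have no status and are skipped.
            if status is not None and 400 <= status < 500:
                broken.append((source, target, status))
    return broken


# Hypothetical crawl export.
link_graph = {
    "/": ["/services", "/blog/old-post"],
    "/services": ["/contact", "/blog/old-post"],
}
status_codes = {"/services": 200, "/contact": 200, "/blog/old-post": 404}

for source, target, status in find_broken_internal_links(link_graph, status_codes):
    print(f"{source} links to {target} ({status})")
```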
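Chains and loops can be detected offline from the redirect rules themselves, before any crawler encounters them. The sketch below treats the rule set as a plain mapping from source path to target path; the function names, the sample rules, and the `max_hops` default (a conservative audit threshold chosen for illustration) are assumptions of this example.

```python
def resolve_redirect(url: str, redirects: dict, max_hops: int = 5) -> tuple:
    """Follow `url` through the redirect map.

    Returns (final_url, hop_count). Raises ValueError on a loop or when
    the chain is longer than `max_hops`.
    """
    path = [url]
    while url in redirects:
        url = redirects[url]
        if url in path:
            raise ValueError("redirect loop: " + " -> ".join(path + [url]))
        path.append(url)
        if len(path) - 1 > max_hops:
            raise ValueError("redirect chain exceeds %d hops: %s" % (max_hops, path[0]))
    return url, len(path) - 1


def flatten_redirects(redirects: dict) -> dict:
    """Rewrite every rule to point directly at its final destination."""
    return {source: resolve_redirect(source, redirects)[0] for source in redirects}


# Hypothetical rules: /a -> /b -> /c is a two-hop chain.
rules = {"/a": "/b", "/b": "/c"}
print(resolve_redirect("/a", rules))   # ('/c', 2)
print(flatten_redirects(rules))        # {'/a': '/c', '/b': '/c'}

try:
    resolve_redirect("/x", {"/x": "/y", "/y": "/x"})
except ValueError as error:
    print(error)                       # redirect loop: /x -> /y -> /x
```

The flattened mapping can then be written back to the server configuration so that every legacy URL reaches its destination in one hop.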

Strategic Conclusions

Achieving sustainable search engine dominance within the Nepalese market is fundamentally an exercise in rigorous technical engineering, not merely content creation.

The unique challenges posed by fluctuating mobile network speeds, multi-script NLP processing, manual DNS registry constraints, and regional infrastructural bottlenecks cannot be solved through superficial on-page optimization.

To secure a definitive competitive advantage, digital assets must be constructed upon a latency-optimized physical foundation, utilizing local or near-shore server environments augmented by CDN edge nodes actively operating within the Kathmandu valley. The architectural hierarchy must seamlessly manage the interplay of English, Devanagari, and Romanized Nepali through strict URL canonicalization and mathematically precise, bidirectional hreflang networks.

Furthermore, in an environment heavily skewed toward lower-end mobile consumption, aggressively engineering for Core Web Vitals—specifically mitigating Interaction to Next Paint (INP) degradation caused by local third-party payment SDKs—is mandatory for maintaining algorithmic favor. Finally, the strategic, highly specific deployment of localized JSON-LD schema transforms unstructured Nepalese address formats into precise, machine-readable geographic entities, cementing dominance in local search parameters.

By systematically auditing, engineering, and enforcing these technical standards across the eight phases outlined, organizations can build a resilient, highly visible digital presence that is far less exposed to algorithmic volatility and infrastructural friction.