Choosing Predictive Analytics Software for Mid-Sized Business

The Vanguard of Foresight: An Exhaustive Analysis of Predictive Analytics Software for Mid-Sized Businesses in 2026

The Strategic Imperative of Predictive Analytics in the Mid-Market

The contemporary business landscape is defined by an unprecedented velocity of change, rendering traditional, retrospective data analysis increasingly insufficient for sustained competitive advantage. Historically, mid-sized enterprises have relied heavily on descriptive analytics—systems designed to report on past performance through static dashboards and historical batch processing. However, as market volatility increases and data generation proliferates, the paradigm has decisively shifted toward predictive analytics. This discipline leverages historical data, statistical algorithms, and advanced machine learning architectures to forecast future events, estimate probabilities, and recommend preemptive actions before market conditions materialize.

For mid-sized businesses—defined generally as organizations operating with $50 million to $1 billion in revenue and employing between 50 and 1,000 personnel—the adoption of predictive analytics represents a critical operational inflection point. Unlike large multinational enterprises equipped with dedicated, centralized data science departments and effectively limitless infrastructure budgets, mid-market organizations must balance sophisticated analytical requirements against tangible resource constraints. They require platforms that deliver high-fidelity forecasting without necessitating doctoral-level statistical expertise or exorbitant Total Cost of Ownership (TCO).

The year 2026 has witnessed a profound democratization of these technologies. The maturation of Automated Machine Learning (AutoML), the integration of Generative Artificial Intelligence (GenAI), and the advent of Agentic Intelligence have fundamentally lowered the barrier to entry. Consequently, predictive capabilities are transitioning from specialized data science silos directly into the workflows of business intelligence (BI) analysts, marketing directors, and financial planning and analysis (FP&A) teams.

By spotting patterns in data and forecasting outcomes, cross-functional teams can make time-sensitive decisions before customers churn, before performance metrics dip, or before budgets are misallocated. The global predictive analytics market is projected to expand from $22.2 billion in 2025 to $91.9 billion by 2032, driven primarily by organizations recognizing that data-driven marketing and sales models are markedly more profitable than experience-based methodologies. In an era where 60% of B2B sales organizations are actively shifting toward data-driven selling frameworks, mid-market organizations face an existential mandate to integrate predictive foresight into their core operational scaffolding.

Core Capabilities and Operational Requirements for Implementation

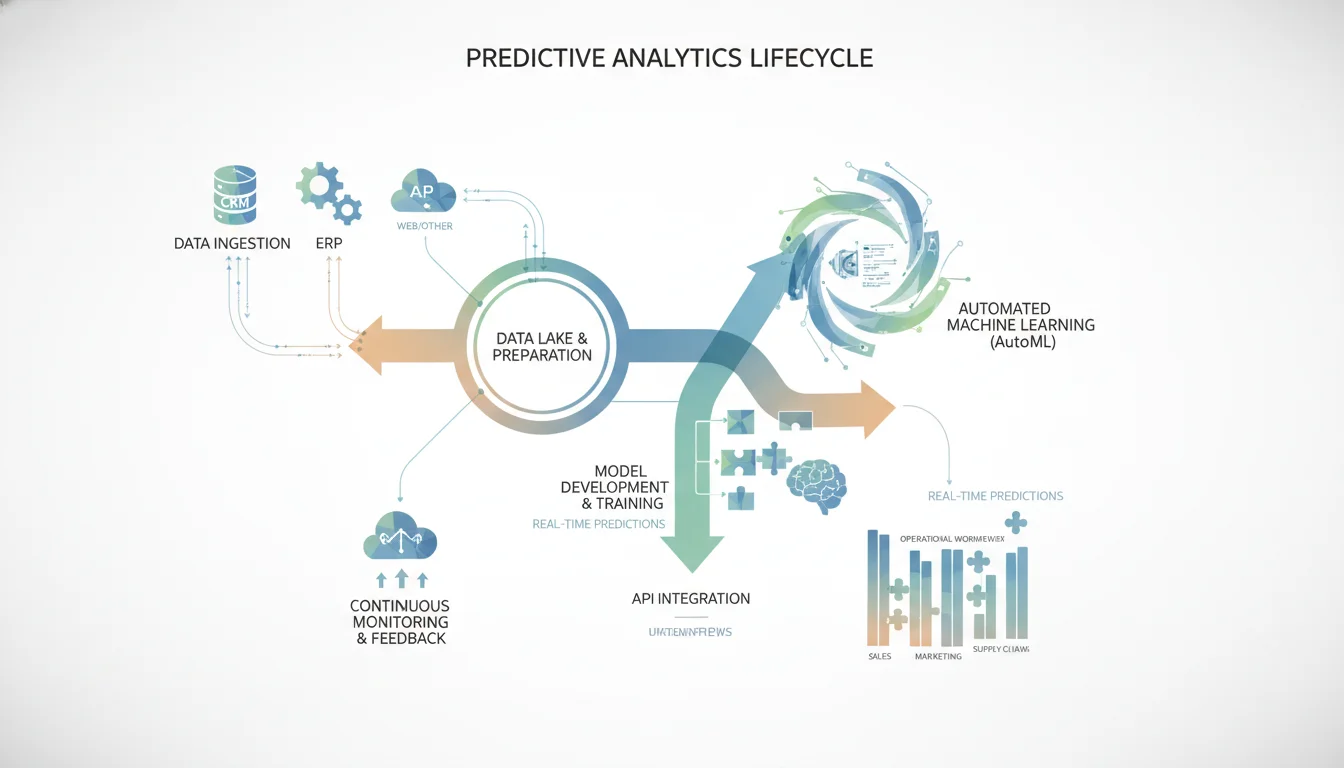

The successful deployment of predictive analytics software transcends the mere selection of an algorithm; it demands a comprehensive operational architecture. Organizations frequently falter by obsessing over algorithmic accuracy while neglecting the operational transformations necessary to capture the financial value of a prediction. For mid-sized businesses, evaluating software necessitates a holistic understanding of the enterprise development lifecycle for predictive systems.

The foundational stage is discovery and requirements definition, wherein prediction targets and success metrics are firmly established. Software platforms must seamlessly interface with data pipeline architectures to establish reliable, scalable data flows from disparate sources, such as Customer Relationship Management (CRM) systems and Enterprise Resource Planning (ERP) databases. Subsequent model development encompasses feature engineering, algorithm selection, and iterative training—processes that modern mid-market tools increasingly automate through AutoML functionalities.

Crucially, the software must facilitate integration development, connecting predictions directly into operational workflows. A prediction regarding customer churn is functionally inert unless it triggers a retention workflow within a marketing automation suite. Platforms are therefore evaluated heavily on their API connectivity and native integrations, allowing seamless data synchronization across the technological stack.
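As a rough illustration of connecting a prediction to an operational workflow, the sketch below filters churn scores against a threshold and returns the customers that a retention campaign would pick up. The `ChurnScore` type, the `select_for_retention` helper, and the 0.7 threshold are hypothetical and are not drawn from any vendor's API.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass
class ChurnScore:
    customer_id: str
    probability: float  # predicted churn probability in [0, 1]


def select_for_retention(scores: Iterable[ChurnScore], threshold: float = 0.7) -> List[str]:
    """Return IDs of customers at or above the threshold, highest risk first."""
    flagged = [s for s in scores if s.probability >= threshold]
    flagged.sort(key=lambda s: s.probability, reverse=True)
    return [s.customer_id for s in flagged]


# Hypothetical scores, as they might arrive from a predictive platform's export.
scores = [
    ChurnScore("c-101", 0.74),
    ChurnScore("c-102", 0.91),
    ChurnScore("c-103", 0.30),
]
retention_queue = select_for_retention(scores)
print(retention_queue)  # ['c-102', 'c-101']
```

In practice the returned list would be handed to whatever marketing automation or CRM integration the organization already runs; the threshold is a business decision rather than a property of the model.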

Deployment and change management are equally vital, as they enable organizational adoption. Predictive analytics software should not be limited to isolated data scientists; it must offer intuitive interfaces, drag-and-drop capabilities, or no-code machine learning features to support cross-functional usage. Furthermore, testing, validation, and continuous monitoring are paramount to ensure that models do not degrade over time due to data drift, thus ensuring long-term reliability and governance.
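One common way to watch for data drift is the Population Stability Index (PSI), which compares the distribution of a feature at training time with its distribution in recent data. The sketch below is a minimal standard-library version, assuming equal-width bins and a small floor for empty bins; the function name and sample data are illustrative, and the platforms discussed here may monitor drift differently.

```python
import math
from typing import List, Sequence


def population_stability_index(
    expected: Sequence[float], actual: Sequence[float], bins: int = 10
) -> float:
    """PSI between a baseline sample and a recent sample of one numeric feature."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def shares(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            # Values outside the baseline range are clamped into the edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


baseline = [i / 100 for i in range(1000)]   # feature values at training time
shifted = [v + 3 for v in baseline]          # the same feature after a shift
psi_same = population_stability_index(baseline, baseline)
psi_shifted = population_stability_index(baseline, shifted)
print(psi_same, psi_shifted)
```

A frequently cited rule of thumb treats a PSI above roughly 0.25 as a shift worth investigating, though the appropriate threshold depends on the feature and the business context.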

Mid-market organizations are increasingly measuring the efficacy of these platforms through specialized frameworks. Key metrics include time-to-insight benchmarks, which compare current analysis cycles against historical norms, and decision velocity metrics, which track the latency between a generated prediction and the execution of a business action. Furthermore, cross-functional adoption rates are utilized to measure how deeply predictive insights have penetrated daily operational behaviors across various departments, while prediction accuracy tracks directly against actual business outcome improvements.
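Two of these metrics reduce to simple arithmetic. The sketch below computes decision velocity as the median lag between a prediction and the resulting action, and adoption as the share of licensed users who are active. The helper names, the use of the median, and the sample timestamps are assumptions made for illustration.

```python
from datetime import datetime
from statistics import median
from typing import List, Optional, Tuple

Event = Tuple[datetime, Optional[datetime]]  # (prediction time, action time or None)


def decision_velocity_hours(events: List[Event]) -> Optional[float]:
    """Median hours between a prediction and the action it triggered."""
    lags = [
        (acted - predicted).total_seconds() / 3600
        for predicted, acted in events
        if acted is not None  # predictions never acted on are excluded
    ]
    return median(lags) if lags else None


def adoption_rate(active_users: int, licensed_users: int) -> float:
    """Share of licensed users who actually used predictive insights."""
    return active_users / licensed_users if licensed_users else 0.0


events = [
    (datetime(2026, 1, 5, 9), datetime(2026, 1, 5, 13)),  # acted on after 4 hours
    (datetime(2026, 1, 6, 9), datetime(2026, 1, 7, 9)),   # acted on after 24 hours
    (datetime(2026, 1, 7, 9), None),                       # never acted on
]
velocity = decision_velocity_hours(events)
adoption = adoption_rate(45, 120)
print(velocity, adoption)  # 14.0 0.375
```

Tracking the count of predictions that were never acted on alongside the median lag gives a fuller picture, since excluding them can make decision velocity look better than it is.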

The Economic Paradigm: Analyzing Total Cost of Ownership

Understanding the Total Cost of Ownership (TCO) is critical, as predictive analytics pricing structures are notoriously complex and highly variable based on deployment architecture. Evaluating vendors requires scrutinizing initial setup expenditures, ongoing subscription fees, hidden infrastructure costs, and the scalability of the pricing model.

For a typical mid-sized enterprise in 2026, the financial blueprint for deploying a robust predictive analytics system generally falls within specific parameters. Initial setup costs range from $10,000 to $100,000. This expenditure encompasses software licensing, initial cloud provisioning, data pipeline architecture consulting, and integration engineering to connect the predictive engine with existing ERP, CRM, and marketing systems. Ongoing monthly operations typically require an investment of $1,000 to $5,000. This covers recurring Software-as-a-Service (SaaS) licensing, API call limits, cloud compute resources, and ongoing model monitoring.

Vendors utilize several distinct pricing paradigms that significantly impact long-term budgeting and organizational deployment strategies:

- Per-User Subscription Models: Platforms such as Tableau ($75 per user/month) and Power BI Premium ($24 per user/month) charge based on individual seat licenses. While highly predictable for budgeting purposes, this model can inadvertently inhibit cross-functional adoption. Companies often restrict licenses to specialized analysts to control costs, which counteracts the objective of democratizing data access across the organization.

- Usage and Capacity-Based Models: Tools like Amazon QuickSight and Microsoft Azure Machine Learning employ pay-as-you-go structures based on query volume or compute hours. This dramatically lowers the barrier to entry, as exemplified by QuickSight’s $3 per month reader tier, but requires stringent organizational governance. Poorly optimized queries running against massive datasets can trigger steep cost overruns if left unchecked.

- Tiered SaaS and Flat-Rate Licensing: Several modern no-code platforms utilize tiered pricing that unlocks specific processing power and feature sets. Akkio offers a highly accessible model starting at $49 per month for its Starter tier, scaling to $1,499 for its Business tier. Pecan AI utilizes a similar structure, with its Starter tier beginning around $760 to $950 per month, escalating based on model usage.

- Enterprise and Server Licensing: Heavy-duty, data science-centric platforms base their economics on combining individual designer licenses with massive centralized server deployments. Alteryx, for instance, charges approximately $4,950 to $5,195 per user annually for its Designer license. However, for a mid-market deployment of 25 users requiring the Alteryx Server for automated workflow scheduling, the total annual contract value frequently ranges from $100,000 to $150,000.

When procuring software, mid-market technology leaders must actively inquire about scalability. A platform that appears economical during a limited pilot phase may become prohibitively expensive when scaled from processing one million rows of data to fifty million rows, or when transitioning from batch processing to real-time, event-driven streaming.
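The figures quoted in this section can be combined into a rough first-year estimate. The sketch below is back-of-the-envelope arithmetic using only the setup, monthly, and per-seat prices cited above; the helper names are illustrative, and real contracts will include discounts, minimums, and infrastructure costs that this ignores.

```python
def first_year_tco(setup_cost: float, monthly_cost: float) -> float:
    """One-time setup plus twelve months of recurring operations."""
    return setup_cost + 12 * monthly_cost


def annual_seat_cost(users: int, price_per_user_month: float) -> float:
    """Annual licensing cost under a per-user subscription model."""
    return users * price_per_user_month * 12


# Range implied by the setup ($10k-$100k) and monthly ($1k-$5k) figures above.
tco_low = first_year_tco(10_000, 1_000)
tco_high = first_year_tco(100_000, 5_000)

# Per-seat licensing for a 25-user team at the quoted list prices.
tableau_25 = annual_seat_cost(25, 75)
power_bi_premium_25 = annual_seat_cost(25, 24)

print(tco_low, tco_high)                 # 22000 160000
print(tableau_25, power_bi_premium_25)   # 22500 7200
```

Running the same functions with projected user counts for year two or three is a quick way to test how a per-seat model behaves as adoption spreads beyond the initial analyst team.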

| Pricing Paradigm | Typical Cost Structure | Market Examples | Ideal Mid-Market Persona |
|---|---|---|---|
| Per-User Licensing | $14 - $75+ per user/month | Tableau, Power BI | Organizations with defined analyst teams requiring predictable budgets. |
| Usage/Capacity | $3 - $1,000+ scaling with compute | Amazon QuickSight, Azure ML | Firms with highly variable query volumes and strong IT governance. |
| Tiered SaaS | $49 - $1,750+ per month | Akkio, Pecan AI, Julius AI | Marketing agencies and operations teams seeking fast time-to-value. |
| Enterprise Server | $100,000+ total contract value | Alteryx, Dataiku | Data science departments requiring heavy data blending and MLOps. |

The Democratization Wave: No-Code and GenAI-First Platforms

The most disruptive trend in the mid-market predictive analytics sector is the proliferation of no-code platforms augmented by Generative AI. These tools are engineered specifically to circumvent the global shortage of data engineering talent by allowing business users to articulate complex predictive queries using natural language.

The paradigm has shifted from requiring Python or R coding skills to requiring deep domain knowledge and the ability to ask the right business questions.

Pecan AI: Conversational Modeling and Predictive GenAI

Pecan AI has established itself as a vanguard in making predictive modeling accessible to data and business teams without requiring deep data engineering expertise. The platform operates on a proprietary fusion of predictive and generative AI, enabling users to transform plain-English business inquiries into validated, production-ready predictive models.

A defining feature of the platform is its Predictive AI Agent, which allows marketing, sales, and operations teams to tackle high-value use cases such as customer churn prediction, lifetime value forecasting, and demand forecasting without initiating protracted data science projects. Users can literally converse with the system through a “Predictive Chat” interface to define the parameters of their predictive needs, making the experience akin to interacting with a dedicated data scientist. The software then autonomously manages the requisite data preparation, feature engineering, model training, and validation.

From an integration standpoint, Pecan AI delivers transparent, explainable predictions directly into operational systems like Salesforce, HubSpot, and Snowflake. For organizations possessing data analysts who require deeper customization, the platform provides a SQL-based Predictive Notebook. While some advanced users occasionally characterize the automated modeling process as a “black box” regarding absolute transparency, the overwhelming consensus points to massive reductions in time-to-value. Pecan AI is specifically engineered for data-savvy marketing teams who require models faster than a full enterprise platform can provide, positioning it as a highly strategic investment for mid-sized organizations seeking rapid ROI.

Akkio: High-Velocity Drag-and-Drop Analytics

Akkio represents another highly effective no-code solution, specifically lauded for its extreme velocity and accessibility. The platform is explicitly designed for forward-thinking agencies and mid-sized businesses requiring immediate predictive insights. Akkio allows users to forecast critical outcomes—such as sales trajectories, lead scoring, and employee attrition—by training custom machine learning models on historical data with a simple drag-and-drop interface.

The platform’s architecture is built on an AI-first foundation, prominently featuring Generative BI capabilities that allow users to interact with text and data naturally. It boasts incredibly rapid model training times, often generating functional models in as little as ten seconds. Use cases frequently highlight Akkio’s ability to reduce error in cost estimation through scrap rate prediction, its utility in deep digital marketing data analysis, and its capacity to build predictive churn models seamlessly.

Akkio integrates efficiently through direct connections to Salesforce, Google Sheets, Snowflake, and via its proprietary API, allowing for real-time deployment of predictions. User satisfaction is exceptionally high within the mid-market segment (maintaining a 5/5 star rating), driven by the platform’s ease of use, intelligent chat features that identify gaps in training data, and highly responsive customer support. However, enterprise-scale users have noted that expanded API capabilities, enhanced documentation, and deeper white-labeling functionality would further enhance the platform’s utility for complex institutional deployments.

Julius AI: AI-Powered Analytical Assistants

Operating within a similar paradigm of accessibility, Julius AI functions as an AI-powered analytical assistant optimized for Q&A-style data analysis. Rather than focusing purely on deploying predictive models into production environments, Julius AI excels at connecting to existing databases and autonomously generating charts, trend analyses, and forecasting queries based on everyday language inputs.

It handles query execution automatically and learns the organization’s data structure to support repeated forecasting queries over time. Starting at an incredibly accessible $37 per month, it serves as a powerful adjunct to traditional spreadsheets, allowing analysts to rapidly generate insights without manually coding SQL or Python scripts. It is predominantly utilized by business teams managing structured data who require immediate analytical insights without navigating complex BI interfaces.

| Feature Dimension | Pecan AI | Akkio | Julius AI |
|---|---|---|---|
| Technical Requirement | Low (No-code / SQL optional) | Very Low (Drag-and-drop) | Very Low (Natural Language) |
| Primary Advantage | Conversational model building | Speed and extreme affordability | Q&A style insight generation |
| Ideal Mid-Market Use Case | CRM Churn & Demand Forecasting | Agency Lead Scoring & Segmentation | Ad-hoc Analyst Reporting |
| Base Pricing | ~$760 - $950 / month | $49 / month | $37 / month |

Converged Intelligence: Integrating Predictive ML into Business Intelligence

While specialized no-code platforms offer rapid model deployment, a massive segment of the mid-market prefers to leverage their existing Business Intelligence (BI) infrastructure. Recognizing this demand, major BI vendors have aggressively integrated predictive capabilities, Automated Machine Learning, and Agentic AI directly into their reporting environments, thereby converging visualization and prediction into a singular workflow. This convergence minimizes the friction associated with adopting entirely new software stacks.

Tableau and Einstein GPT: Visualizing the Future

Tableau, a subsidiary of Salesforce, remains a dominant architectural force in the mid-market, with 42% of its user base originating from this specific segment. Historically revered for its unparalleled data visualization capabilities, Tableau has evolved into a formidable predictive engine through the deep integration of Einstein Discovery.

This integration introduces dynamic, machine learning-powered predictions and explanatory insights directly into Tableau dashboards without requiring users to write code. Analysts can utilize “Einstein in Tableau Calculations” to generate predictions simply by dragging and dropping predictive fields onto visual canvases, initiating an entirely new class of AI-powered analytics dubbed “Tableau Business Science”. Furthermore, Tableau Prep Builder supports bulk scoring, allowing data engineers to inject prediction factors and suggested improvements directly into the dataset during the data preparation phase. This capability is highly utilized in retail and consumer goods sectors for complex use cases such as prioritizing warranty claims.

Tableau’s user satisfaction remains exceptionally high, driven by its aesthetic customization options, robust scientific foundation, and intuitive drag-and-drop interface. Mid-market users consistently praise its ability to quickly convert raw data into meaningful, interactive insights. However, organizations must navigate its pricing structure carefully.

At $75 per user per month for the standard tier, licensing costs can represent a significant barrier to widespread organizational adoption. Additionally, reviewers frequently note that the platform can experience performance latency when processing exceptionally large, unoptimized datasets, and advanced features often require a steep learning curve.

Microsoft Power BI Premium: Scalable Enterprise Analytics

Microsoft Power BI operates as a cornerstone of mid-market data architecture, deeply embedded within the ubiquitous Microsoft ecosystem. While Power BI Pro provides robust data storage (10 GB per user) and foundational reporting capabilities at $14 per user per month, the true predictive capabilities necessary for advanced analytics are unlocked within the Power BI Premium and Premium Per User (PPU) tiers.

At $24 per user per month, Power BI Premium introduces advanced AI features, including built-in AutoML, cognitive services integration, and the ability to execute sophisticated machine learning models directly within the platform. Furthermore, it expands data capacity to a 100 GB model size limit and allows up to 48 data refreshes per day (one every 30 minutes on average), keeping predictive insights closely aligned with current operational data.

Power BI Premium offers robust API access and connectivity through XMLA endpoints, enabling deep integration with other applications and platforms across the enterprise. For mid-market entities heavily invested in Azure and Microsoft 365, Power BI Premium offers a seamless, highly governed environment for accelerating access to predictive insights at an enterprise scale. The primary differentiator between the Pro and Premium tiers ultimately distills down to performance capacity and the inclusion of advanced predictive AI frameworks.

Zoho Analytics: High Value and Conversational Agility

For mid-sized organizations where budget optimization and ease of deployment are paramount, Zoho Analytics presents a highly compelling alternative. Zoho Analytics provides a unified business analytics platform enriched with Agentic AI, Generative BI, and predictive cognitive analytics.

Its standout feature, the Zia AI assistant, facilitates natural language querying, allowing users to extract instant, data-driven answers and predictive insights without interacting with complex dashboard structures. With over 250 native connectors and automated data blending capabilities, Zoho is particularly adept at providing a 360-degree view of business operations by unifying discrete data sources. Its pricing strategy is highly aggressive—starting at $24 per month—making enterprise-grade analytics accessible to smaller mid-market firms. It is frequently recommended as the best-value tool for organizations seeking self-service data preparation and real-time visualization without heavy IT overhead.

Amazon QuickSight: Serverless Cloud Execution

Amazon QuickSight, built natively on Amazon Web Services (AWS), takes a distinctly different architectural approach. It is a serverless, cloud-based business intelligence service that offers ML-powered forecasts, anomaly detection, and natural language query capabilities without any coding requirements. Its architecture allows it to scale automatically, handling massive datasets efficiently without requiring administrators to provision or manage servers.

QuickSight’s pricing is strictly consumption-based, offering reader access for as low as $3.00 per month and author access at $24.00 per month. This model avoids high upfront infrastructure costs entirely, making it highly attractive for mid-market CFOs. Users frequently praise its fast in-memory engine (SPICE) and seamless integration with AWS storage solutions like S3 and Redshift. However, reviewers note that it suffers from a steeper learning curve regarding initial setup, limited out-of-the-box visual customization compared to platforms like Tableau, and occasional latency during the downloading of complex query results.

Agentic Decision Engines and Autonomous Analysis

The evolutionary frontier of predictive analytics in 2026 is defined by the transition from passive prediction to Agentic Intelligence. Agentic platforms do not merely answer questions; they autonomously investigate anomalies, decompose metrics to find root causes, and recommend downstream actions. Gartner predicts that 40% of enterprise applications will integrate task-specific AI agents by the end of 2026, marking a decisive shift from AI as a “copilot” to AI as an autonomous operational agent.

Tellius: The Agentic Intelligence Platform

Tellius stands at the forefront of this architectural shift for the mid-market and enterprise segments. Tellius unifies natural language processing, automated reasoning, explainable machine learning, and workflow automation into a singular, transparent decisioning layer. Unlike traditional dashboards that require human intuition to drill down into data, Tellius utilizes “Kaiya”—an AI engine comprising GenAI and dedicated AI squads—to automate complex analytical workflows.

If a commercial organization experiences a sudden drop in regional sales, Tellius autonomously scans millions of data points to identify the specific product lines, supply chain bottlenecks, and demographic shifts contributing to the anomaly, presenting the findings alongside a generative narrative. This effectively automates the entire cycle from business question to reasoning, deep insight, explanation, and recommended action.

The platform is engineered for high-cardinality, multi-source data models, seamlessly connecting to cloud data warehouses like Snowflake, Redshift, and Databricks. Crucially, it employs a “live/pushdown query” architecture. Rather than extracting massive datasets into the analytics software’s proprietary memory, Tellius pushes the heavy computational algorithms down into the cloud data warehouse. The warehouse processes the machine learning model using its elastic compute resources, returning only the finalized prediction to the dashboard.
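The difference between extracting data and pushing computation down can be shown with a small stand-in. The sketch below uses an in-memory SQLite database in place of a cloud warehouse and a simple `SUM` in place of a machine learning model; both substitutions are simplifications, and nothing here reflects Tellius's actual implementation.

```python
import sqlite3

# In-memory SQLite stands in for a cloud data warehouse (an assumption).
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sales (region TEXT, amount REAL)")
conn.executemany(
    "INSERT INTO sales VALUES (?, ?)",
    [("north" if i % 2 == 0 else "south", float(i)) for i in range(10_000)],
)


def extract_then_aggregate(db: sqlite3.Connection):
    """Pull every row to the client, then aggregate locally."""
    fetched = db.execute("SELECT region, amount FROM sales").fetchall()
    totals = {}
    for region, amount in fetched:
        totals[region] = totals.get(region, 0.0) + amount
    return totals, len(fetched)


def pushdown_aggregate(db: sqlite3.Connection):
    """Let the database aggregate and return only the finished result."""
    fetched = db.execute(
        "SELECT region, SUM(amount) FROM sales GROUP BY region"
    ).fetchall()
    return dict(fetched), len(fetched)


extract_totals, extract_rows = extract_then_aggregate(conn)
pushdown_totals, pushdown_rows = pushdown_aggregate(conn)
print(extract_rows, pushdown_rows)  # 10000 2
```

Both approaches produce the same totals, but the pushdown version moves two rows across the connection instead of ten thousand, which is the property that matters when the table holds tens of millions of rows.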

For mid-sized businesses, Tellius offers a Premium plan designed for teams of up to 10 users. This tier supports up to 50 million rows of data in live-mode and is fully hosted on the Tellius Cloud, providing access to guided insights, Vizpads, and concurrent AutoML jobs. As organizations scale, they can transition to the Enterprise plan, which provides unlimited users, unlimited data scale, SAML-based SSO, and flexible deployment options including customer cloud (VPC) and on-premises environments. While pricing is customized based on specific deployments, industry data indicates business tiers hovering around $30 to $70 per user per month, positioning it competitively against traditional BI platforms while delivering exponentially more autonomous capabilities.

Advanced Data Science and MLOps Platforms

While no-code and agentic platforms address the needs of business users, certain mid-market organizations—particularly those in finance, healthcare, and advanced manufacturing—employ dedicated data science teams requiring granular control, statistical rigor, and complex pipeline orchestration. For these entities, advanced data science and Machine Learning Operations (MLOps) platforms remain indispensable, albeit at a significantly higher total cost of ownership.

Alteryx: Automating the Analytical Supply Chain

Alteryx is globally recognized for automating complex data preparation, geospatial analysis, and predictive workflows. It functions as the ultimate data blending tool, allowing analysts to extract data from highly fragmented sources, cleanse it, and apply robust predictive models via a sophisticated drag-and-drop canvas. It is highly valued in analytics-heavy organizations where the automation of manual Excel manipulation and complex SQL scripting translates to massive operational efficiencies.

Alteryx explicitly targets the mid-market through tailored industry solutions and tiered editions. The Starter Edition, priced at $250 per user per month ($3,000 annually), is aimed at small teams needing basic, code-free data preparation with flat-file connectivity. However, to unlock advanced features such as the AI Copilot, cloud reporting, and connectivity to massive data warehouses, organizations must escalate to the Professional or Enterprise editions.

The financial commitment for an enterprise deployment of Alteryx is substantial. Standard Designer licenses typically cost upwards of $4,950 to $5,195 per user annually. For mid-market organizations deploying to 10 to 50 users and requiring the Alteryx Server for automated workflow scheduling, API triggering, and enterprise governance, the total annual contract value can easily range from $100,000 to $150,000. The platform represents a profound investment, justified only when a business possesses dedicated analytical teams capable of leveraging its deep architectural capabilities.

Enterprise MLOps: DataRobot and Dataiku

For organizations prioritizing Automated Machine Learning at an enterprise scale, DataRobot and Dataiku offer premier MLOps environments.

DataRobot provides a centralized system for designing, deploying, and monitoring predictive models, automating feature engineering and model selection to generate predictions quickly. It is highly regarded for its governance and explainable AI capabilities, though its pricing structure is often viewed as prohibitively expensive for smaller businesses or those with highly limited use cases.

Dataiku operates as a collaborative data science platform designed to be accessible to both coders and non-coders, integrating data preparation, model development, and deployment within a single visual environment. Similar to Alteryx, its pricing involves custom enterprise quotes, frequently ranging from $10,000 to $20,000+ per user, representing a significant capital expenditure.

Statistical Rigor: IBM SPSS Modeler and Altair AI Studio

For organizations requiring deep statistical rigor and legacy system integration, IBM SPSS Modeler remains a formidable choice. It provides an extensive algorithm library supporting visual data science, text analytics, and automated modeling, particularly excelling in multivariate regression and complex data clustering. However, the licensing is expensive, starting at $4,950 for Personal use and scaling to $7,430 annually for Professional tiers, making it costly to deploy widely.

Conversely, Altair AI Studio (formerly RapidMiner) offers a more modern, unified data science lifecycle. Recognized highly within the mid-market, it features an intuitive visual workflow designer that grants “code-like control” to non-programmers. It includes access to hundreds of large language models for generative AI functionality and robust AutoML capabilities encompassing clustering and time series forecasting. While offering a free tier for basic usage, enterprise deployments provide scalable model operations, though users note a steep learning curve for highly complex, advanced functionalities and occasional performance issues with massive datasets.

Cloud-Native Data Science Ecosystems

Mid-market firms deeply entrenched in public cloud infrastructure frequently leverage native data science tools provided by major cloud vendors. Google Cloud Vertex AI and BigQuery ML allow users to run high-volume predictive models using built-in SQL-based machine learning without managing underlying infrastructure. Reviewers value the flexible pay-as-you-go pricing model, though costs can scale aggressively if query volumes are mismanaged.

Similarly, Amazon SageMaker provides AWS’s flagship ML tool for data preprocessing, model training, and deployment. While highly scalable, it is generally considered to possess a steep learning curve, requiring sophisticated data science talent to operate effectively. Microsoft Azure Machine Learning offers comparable enterprise ML pipelines with deep integration into the Microsoft ecosystem, supporting robust MLOps, governance, and model monitoring.

| Platform Category | Leading Solutions | Primary Advantage | Primary Constraint |
|---|---|---|---|
| Data Blending & Prep | Alteryx | Massive automation of manual data pipelines. | High TCO ($100k+ total contract value). |
| Enterprise MLOps | DataRobot, Dataiku | Comprehensive model governance and AutoML. | Requires dedicated data science talent. |
| Statistical Analysis | IBM SPSS, Altair | Deep statistical rigor and legacy integration. | Expensive licensing; steep learning curve. |
| Cloud-Native ML | Vertex AI, SageMaker | Serverless scalability; integrated ecosystem. | Complex infrastructure management. |

Foundation Models and Time-Series Intelligence

A highly specialized yet transformative segment of the predictive market involves foundation models trained specifically on temporal data. Forecasting future events based on historical time-series data is a mathematically complex endeavor, historically requiring organizations to build and tune multiple local models.

Nixtla, the developer of TimeGPT, has engineered a pre-trained generative architecture capable of handling large-scale, long-horizon time-series forecasting without the need for constant model retraining, a capability known as zero-shot deployment. TimeGPT broadens access to advanced predictive insights without requiring deep machine learning expertise from the end user.

The platform excels in use cases characterized by massive data granularity. For example, predicting daily demand for tens of thousands of perishable SKUs across brick-and-mortar retail locations, or forecasting node-level electricity prices and generation for energy grid operations. It seamlessly incorporates exogenous regressors—such as local holidays, paydays, and macroeconomic indicators like oil prices—to generate highly accurate multivariate forecasts. Mid-market engineering teams praise the platform for drastically reducing computational engineering effort and capturing meaningful temporal dynamics that traditional models oversimplify.

However, the platform represents a significant financial investment. Enterprise pricing for TimeGPT starts at an estimated $12,000 per month. Furthermore, users have noted that the learning curve remains steep for advanced implementations, documentation is sometimes lacking for complex use cases, and the platform currently lacks robust features for geospatial data handling. Consequently, Nixtla is best suited for upper-mid-market firms where forecasting accuracy directly and immediately translates to massive revenue capture or cost mitigation, justifying the substantial subscription costs.

The FP&A Ecosystem and ERP Integration

Predictive analytics within the office of the Chief Financial Officer (CFO) requires a distinct architectural approach. Financial Planning and Analysis (FP&A) teams are culturally and operationally anchored to spreadsheet environments. Consequently, the most successful predictive tools in this domain do not attempt to replace Microsoft Excel; rather, they augment it with centralized databases, AI-powered scenario modeling, and multi-dimensional querying capabilities.

When evaluating FP&A tools, integration with foundational ERP systems is paramount. In the mid-market, Oracle NetSuite and Sage Intacct represent the dominant ERP architectures. NetSuite is highly favored by mid-market companies scaling globally due to its robust support for complex, cross-functional workflows, multi-entity consolidation, and deep customization via SuiteScript. Sage Intacct, conversely, is revered for its clean, finance-centric user interface, multi-tenant cloud architecture, and powerful native report writer, making it ideal for service-centric organizations that have outgrown entry-level software like QuickBooks.

For organizations utilizing these ERPs, several specialized predictive FP&A platforms stand out:

- Datarails: A completely Excel-native solution that empowers FP&A teams by consolidating disparate data sources (General Ledger, payroll, CRM) into a secure cloud database while allowing users to build models in their familiar spreadsheet interface. It incorporates an AI chatbot (“Genius”) designed to answer management inquiries regarding complex financial scenarios quickly and accurately. Datarails is highly favored by mid-market teams seeking lighter workflows, automated insights, and straightforward permissions without the heavy governance overhead of enterprise tools.

- Vena Solutions: Positioned slightly upmarket from Datarails, Vena is an enterprise-grade platform that also leverages an Excel interface but enforces strict internal controls, structured templates, and heavy governance. It includes a Copilot feature offering conversational queries and automated reporting. Vena is optimal for mature mid-market companies navigating complex regulatory environments and requiring robust audit trails for scaling operations.

- Cube: Designed explicitly for spreadsheet-native mid-market teams, Cube integrates deeply with both NetSuite and Sage Intacct to facilitate strategic budgeting, headcount planning, and what-if scenario analysis. It focuses heavily on team collaboration and reducing manual data entry.

- Planful: A cloud-based FP&A platform recognized for robust workflows and performance management. It moves away from a strictly spreadsheet-led model toward a dedicated cloud planning architecture.

By connecting these predictive FP&A tools directly to underlying ERP data, finance teams can run consolidation scenarios on demand (for example, simulating a 3% revenue increase across specific international subsidiaries) and track KPIs in near real time while reducing the risk of manual data extraction errors.
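The consolidation scenario described above can be sketched in a few lines. This is an illustration of the calculation only, not the interface of any platform named here. The entity codes and revenue figures are hypothetical, and `Decimal` is used so that currency amounts are not subject to binary floating-point rounding.

```python
from decimal import Decimal


def consolidate(revenue_by_entity, uplift_pct=Decimal("0"), entities=()):
    """Sum revenue across entities, applying a percentage uplift
    only to the entities selected for the scenario."""
    selected = set(entities)
    unknown = selected - set(revenue_by_entity)
    if unknown:
        raise KeyError(f"unknown entities: {sorted(unknown)}")
    factor = 1 + Decimal(uplift_pct) / 100
    return sum(
        revenue * factor if name in selected else revenue
        for name, revenue in revenue_by_entity.items()
    )


# Hypothetical annual revenue per legal entity, in a common currency.
revenue = {
    "US": Decimal("5000000"),
    "UK": Decimal("2000000"),
    "DE": Decimal("1000000"),
}

baseline = consolidate(revenue)
scenario = consolidate(revenue, Decimal("3"), ["UK", "DE"])
print(f"Baseline: {baseline:,}  Scenario: {scenario:,}  Delta: {scenario - baseline:,}")
```

A real consolidation would also handle currency translation and intercompany eliminations, which this sketch deliberately omits.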

Specialized Marketing and Customer Analytics

Beyond broad BI and FP&A applications, predictive analytics plays a highly specialized role in marketing operations.

Marketing analytics software is engineered to measure performance, allocate budgets, and analyze how cross-channel customer journeys contribute directly to revenue.

Adobe Analytics stands as a premium choice within this sector, utilizing deep customer journey analysis and granular audience segmentation to forecast behavioral trends. Optimized for complex digital ecosystems, it anticipates how customer cohorts will perform across varied channels. While highly customizable, reviewers note that initial setup is complex, and performance may lag when processing extremely high data volumes without optimized query management.

For organizations managing highly fragmented marketing stacks, platforms like SegmentStream and Improvado merit evaluation. SegmentStream provides full-funnel insights, cross-channel attribution, and predictive performance optimization for multi-channel environments. Improvado focuses on delivering an end-to-end marketing data pipeline, using AI agents and built-in data governance to unify data from hundreds of sources, thereby streamlining data collection for large mid-market and enterprise marketing departments.

Strategic Synthesis and Governance Recommendations

The selection of predictive analytics software for a mid-sized business is not merely an IT procurement exercise; it is an epistemological shift in how the organization interacts with its own future. Based on an exhaustive analysis of the 2026 software ecosystem, several strategic imperatives emerge for mid-market decision-makers.

The Priority of Explainable AI (XAI)

As predictive models transition from theoretical exercises to autonomous decision engines, regulatory compliance and internal trust become paramount. Mid-market organizations cannot safely deploy “black box” models. Software must support Explainable AI (XAI) frameworks. When a platform generates a prediction, it should simultaneously provide a ranked list of feature importances showing which variables contributed most to the outcome. Such attribution describes what drove the model's output, not what causes the real-world outcome, but it is the transparency that internal auditors and external regulatory bodies require to confirm that predictions remain aligned with corporate policy.
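As an illustration of what a ranked attribution looks like, the sketch below explains one prediction of a linear scoring model. It assumes a linear model, for which each feature's contribution is exactly its coefficient multiplied by the feature's deviation from a baseline value. Non-linear models need a dedicated attribution method, and the coefficients, feature names, and values here are hypothetical.

```python
def rank_contributions(coefficients, baseline, observation):
    """Rank features by the size of their contribution to one prediction.

    For a linear model, contribution = coefficient * (value - baseline value),
    and the contributions sum to score(observation) - score(baseline).
    """
    contributions = {
        name: coefficients[name] * (observation[name] - baseline[name])
        for name in coefficients
    }
    return sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)


# Hypothetical churn-risk model for a subscription business.
coefficients = {"support_tickets": 0.5, "months_since_login": 2.0, "seats": -0.125}
baseline = {"support_tickets": 2, "months_since_login": 1, "seats": 20}
customer = {"support_tickets": 6, "months_since_login": 4, "seats": 12}

for name, contribution in rank_contributions(coefficients, baseline, customer):
    print(f"{name:>20}: {contribution:+.2f}")
```

In this example the output tells a reviewer that inactivity, not ticket volume, accounts for most of the elevated score, which is the kind of statement an auditor can check against policy.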

Modernizing the Data Architecture (ELT over ETL)

The efficacy of predictive analytics software is intrinsically linked to the underlying data architecture. In 2026, the mid-market has overwhelmingly coalesced around cloud data warehouses (CDWs). Consequently, modern predictive platforms follow an ELT (Extract, Load, Transform) pattern rather than legacy ETL: raw data is loaded into the warehouse first and transformed there, instead of being reshaped in a separate engine before loading. By pushing feature engineering and machine learning queries down into the warehouse layer, organizations leverage elastic compute resources, reduce latency, and shrink the security exposure that comes with copying data out into proprietary analytics engines.
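The difference between the two patterns is easiest to see in code. In the sketch below, an in-memory SQLite database stands in for a cloud data warehouse, which is an assumption made purely so the example runs anywhere. The table and column names are hypothetical. Rows are loaded untransformed, and the feature table is then built by SQL that executes inside the database.

```python
import sqlite3


def build_customer_features(raw_rows):
    """Load raw order rows as-is, then derive per-customer features in SQL."""
    conn = sqlite3.connect(":memory:")  # stand-in for a cloud data warehouse
    try:
        # Extract + Load: raw rows land in the warehouse untransformed.
        conn.execute(
            "CREATE TABLE raw_orders (customer_id TEXT, order_date TEXT, amount REAL)"
        )
        conn.executemany("INSERT INTO raw_orders VALUES (?, ?, ?)", raw_rows)

        # Transform: aggregation runs where the data lives.
        conn.execute(
            """
            CREATE TABLE customer_features AS
            SELECT customer_id,
                   COUNT(*)        AS order_count,
                   SUM(amount)     AS total_spend,
                   MAX(order_date) AS last_order_date
            FROM raw_orders
            GROUP BY customer_id
            """
        )
        return conn.execute(
            "SELECT customer_id, order_count, total_spend, last_order_date "
            "FROM customer_features ORDER BY customer_id"
        ).fetchall()
    finally:
        conn.close()


rows = [
    ("c1", "2026-01-05", 120.0),
    ("c1", "2026-02-10", 80.0),
    ("c2", "2026-01-20", 50.0),
]
print(build_customer_features(rows))
```

Only the finished feature table is read back by the application. In an ETL design the aggregation would instead run in application code, and every raw row would have to leave the warehouse first.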

Aligning Software with Talent Availability

Organizations must honestly audit their internal technical capabilities before committing to a platform. Procuring technically demanding platforms like Alteryx, or complex foundation models like TimeGPT, without a pre-existing team of data engineers carries a high risk of deployment failure and negative ROI. For the majority of mid-market firms lacking dedicated data science departments, no-code solutions like Pecan AI and Akkio offer the highest probability of successful adoption and the fastest time-to-insight. These tools bridge the talent gap by allowing domain experts, the people who actually understand the business context, to build predictive models using natural language and visual interfaces.

Embracing Converged and Agentic Intelligence

Predictive analytics must not exist in an operational vacuum. The convergence of Machine Learning and Business Intelligence is an established reality. Organizations heavily invested in the Microsoft or Salesforce ecosystems should closely evaluate Power BI Premium and Tableau with Einstein GPT, which embed predictive insights directly into the dashboards that frontline managers already monitor daily. Furthermore, the emergence of Agentic AI, championed by platforms like Tellius, points to the likely future standard of enterprise analytics. Mid-sized organizations seeking to leapfrog competitors should look beyond reactive dashboards toward systems that autonomously monitor data, decompose metric anomalies, and surface explainable recommendations.
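The monitor-and-decompose loop described above can be sketched in its simplest form. This is not how Tellius or any other named platform is implemented. It is a minimal illustration that assumes a z-score test for the anomaly and a per-segment difference for the decomposition, with hypothetical figures and function names.

```python
from statistics import mean, stdev


def is_anomalous(history, latest, threshold=3.0):
    """Flag the latest value if it lies more than `threshold`
    sample standard deviations from the historical mean."""
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        return latest != mu
    return abs(latest - mu) / sigma > threshold


def decompose_change(previous_by_segment, current_by_segment):
    """Rank segments by how much each contributed to the total change."""
    segments = previous_by_segment.keys() | current_by_segment.keys()
    deltas = {
        s: current_by_segment.get(s, 0) - previous_by_segment.get(s, 0)
        for s in segments
    }
    return sorted(deltas.items(), key=lambda kv: abs(kv[1]), reverse=True)


# Hypothetical weekly order counts, in total and by sales region.
weekly_orders = [100, 102, 98, 101, 99]
previous = {"north": 40, "south": 35, "west": 25}
current = {"north": 39, "south": 18, "west": 23}

if is_anomalous(weekly_orders, sum(current.values())):
    for region, delta in decompose_change(previous, current):
        print(f"{region}: {delta:+d}")
```

An agentic system would run checks of this kind continuously across many metrics and dimensions and then attach a recommendation, but the underlying question is the same: which segment explains the deviation.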

In conclusion, the optimal predictive analytics software for a mid-sized business is one that integrates into existing data architectures, matches the technical proficiency of its workforce, and, most importantly, transforms abstract statistical probabilities into immediate, quantifiable business actions. By prioritizing operational integration and user accessibility alongside algorithmic sophistication, mid-market organizations can turn foresight into resilience and growth in an inherently unpredictable global economy.