AI SEO & GEO: Ranking, Mentions, & AI Search Visibility

Generative Engine Optimization and AI Search Visibility: A Definitive Guide

The Paradigm Shift from Lexical Retrieval to Generative Synthesis

The architecture of digital information discovery is undergoing its most profound transformation since the inception of the commercial web. Traditional Search Engine Optimization (SEO) was constructed upon deterministic, lexical retrieval systems. In that paradigm, algorithms evaluated keyword density, backlink profiles, and technical site architecture to match user queries with indexed HTML pages. Today, the digital ecosystem is increasingly mediated by Generative Engines (GEs), including platforms such as OpenAI’s ChatGPT, Perplexity, Anthropic’s Claude, and Google’s AI Overviews. These systems operate on a fundamentally different infrastructure utilizing Retrieval-Augmented Generation (RAG) to synthesize real-time data from disparate sources into unified, conversational, and contextually rich responses.

This systemic evolution has catalyzed the formalization of Generative Engine Optimization (GEO), a highly specialized optimization framework designed specifically to enhance brand visibility, citation frequency, and sentiment within AI-generated outputs. The foundational tenets of GEO were established in a landmark 2024 research paper authored by computer scientists at Princeton University and the Indian Institute of Technology. The researchers identified that conventional SEO tactics were rapidly losing efficacy within generative environments. To investigate this, they developed GEO-BENCH, a comprehensive evaluation benchmark comprising ten thousand diverse queries across multiple domains, and introduced specialized impression metrics tailored exclusively for generative engines. The empirical data demonstrated that while traditional lexical optimization yields diminishing returns, the strategic application of specific GEO techniques can enhance content visibility within AI outputs by up to forty percent.

The pivot toward GEO necessitates a complete cognitive restructuring for digital marketers, technical webmasters, and content engineers. The objective is no longer merely to drive human traffic to a proprietary web page via a high ranking on a Search Engine Results Page (SERP). Instead, the objective is to secure a dominant “Share of Model“—a metric denoting the frequency and prominence with which an artificial intelligence cites, recommends, or mentions a specific brand, entity, or concept across multiple generative platforms. This requires re-engineering digital assets so that Large Language Models (LLMs) can extract, verify, and synthesize underlying factual data with minimal computational friction.

The Architectural Mechanics of Retrieval-Augmented Generation

To effectively influence generative engines, one must first deconstruct the mechanical processes by which they retrieve and generate information. Generative search engines do not rely solely on the static, pre-trained weights of their neural networks; such reliance would inevitably lead to severe temporal degradation and widespread factual hallucinations. Instead, they employ Retrieval-Augmented Generation to ground their outputs in verifiable, real-time data.

When a user submits a query, the system executes a sophisticated sequence of operations. The process typically initiates with a mechanism known as “query fan-out.” Advanced models, particularly Google AI Overviews and Perplexity, do not search for the user’s raw prompt. Instead, the model decomposes a complex query into multiple semantic sub-queries, launching simultaneous retrieval operations across diverse subtopics and external data sources. This enables the engine to construct a comprehensive response that addresses nuanced comparisons and multi-faceted inquiries.

Once the queries are launched, the system utilizes Natural Language Processing (NLP) to convert the search intent into high-dimensional vector embeddings. The retrieval engine then scans vector databases—rather than traditional lexical indexes—to identify content chunks that exhibit high semantic similarity to the user’s embedded intent. During this phase, content chunking strategies become critical. Vector databases, such as Pinecone, require textual data to be partitioned into discrete segments. If a content chunk is too small, it lacks the semantic context necessary for the retrieval algorithm to recognize its relevance; if it is too large, it exceeds the embedding model’s context window, resulting in the truncation and loss of critical information.

Following the retrieval of semantically relevant documents, the system applies rigorous source selection and filtering algorithms. The engine evaluates the retrieved materials based on recency, factual density, and domain authority. Different platforms exhibit unique filtering biases. For example, Anthropic’s Claude utilizes a “Constitutional AI” framework that aggressively favors sources historically deemed trustworthy and safe, while Perplexity’s architecture is heavily weighted toward real-time retrieval from authoritative domains and highly engaged community platforms. Finally, the filtered documents are injected into the LLM’s context window. The model synthesizes the extracted information, resolves conflicting data points, and generates a fluid, conversational output that explicitly cites the grounding sources.

Empirical Content Optimization: The Princeton GEO Framework

The Princeton GEO research isolated specific content modifications to quantify their direct impact on AI visibility and citation probability. The researchers discovered that LLMs exhibit profound deterministic biases toward content structures characterized by high factual density, semantic completeness, and authoritative signals.

The empirical data from the Princeton GEO-BENCH study delineates the precise efficacy of various optimization levers. The following table illustrates the percentage increase or decrease in generative visibility associated with specific content modifications compared to an unoptimized baseline:

| Optimization Technique | Visibility Impact | Mechanistic Rationale for LLM Preference |

|---|---|---|

| Embedding Expert Quotes | +41% | Triggers “authoritative amplification”; LLMs heavily weight aggregated credentialed perspectives. |

| Adding Clear Statistics | +30% | Maximizes factual specificity, providing discrete data nodes for RAG extraction. |

| Including Inline Citations | +30% | Establishes a verifiable citation graph, increasing algorithmic trust in the source material. |

| Improving Readability/Fluency | +22% | Reduces parsing friction and syntactic complexity during the vector embedding process. |

| Domain-Specific Jargon | +21% | Enhances semantic proximity in high-dimensional vector spaces for technical queries. |

| Simplifying Language | +15% | Facilitates highly efficient contextual chunking and summarization. |

| Authoritative Voice | +11% | Aligns with E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness) classifiers. |

| Rare Synonyms | 0% (Neutral) | Fails to alter the core semantic vectors; algorithms map synonyms to identical nodes. |

| Keyword Stuffing | -9% (Negative) | Dilutes entity density, triggering spam-filtering and degrading semantic clarity. |

Empirical data derived from the Princeton University GEO research findings.

The data reveals that generative models prioritize verifiable facts over rhetorical persuasion. The most impactful intervention, which yielded a forty-one percent increase in visibility, is the embedding of expert quotes. The integration of direct quotations from recognized authorities creates a phenomenon termed “authoritative amplification”. When a document includes statements explicitly attributed to credentialed experts, the natural language processing algorithms interpret the page not merely as a single opinion, but as an aggregator of authoritative industry perspectives. To execute this effectively, the text must explicitly state the expert’s full credentials, allowing the LLM to map the statement to recognized entity identifiers within its broader knowledge graph.

Furthermore, the inclusion of clear statistics and inline citations each drove a thirty percent visibility enhancement. LLMs are fundamentally deterministic in their search for factual grounding; they seek precise answers to resolve user uncertainty. Content that provides specific data points, statistics, and verifiable claims receives preferential citation over content that relies on generalized marketing language. Paradoxically, citing and linking out to other authoritative domains increases the likelihood that a brand’s own content will be cited by an AI. This occurs because outbound citations signal thoroughness and place the content within a dense, verifiable citation graph, allowing the LLM to rapidly cross-reference facts and establish confidence in the source material.

The Princeton researchers ultimately concluded that “semantic completeness” serves as the strongest overarching predictor of AI citation, possessing a correlation coefficient of r=0.87. Semantic completeness is defined as the capacity of a specific content passage to answer a query fully and autonomously, without requiring the LLM to retrieve secondary or tertiary sources to synthesize a complete thought. Self-contained, highly dense factual passages represent the optimal input for generative extraction.

Architectural Re-engineering for Machine Ingestion

To capitalize on these empirical findings, organizations must fundamentally re-engineer their content architecture.

Writing for vector embeddings requires a departure from traditional keyword targeting, shifting focus toward Natural Language Processing optimization and entity relationships.

The optimal architectural framework for LLM ingestion operates on a tri-layered content structure, designed to maximize extractability and authoritativeness. The foundational layer must manifest within the initial fifty words of a page or section. This introductory segment is strictly reserved for providing a direct, unambiguous answer to the targeted query, stripping away all prefatory marketing language. This structural discipline perfectly aligns with the chunking requirements of vector databases, ensuring the most critical semantic information is captured within a single, highly relevant data chunk. The secondary layer, comprising the subsequent one hundred to one hundred and fifty words, functions to provide the contextual rationale, explicitly explaining why the initial answer matters and detailing its broader implications. Finally, the tertiary layer encompasses the remainder of the document—often extending beyond two thousand words—and serves to establish absolute topical authority through the deployment of deep analysis, statistical density, expert quotations, and comprehensive structured data.

This transition in content architecture also dictates a shift in content typology. Because users increasingly leverage AI platforms for complex reasoning, product evaluation, and comparative analysis, brands must pivot their production toward “decision-support” content. Generative models exhibit a profound algorithmic preference for comparative articles, alternative evaluation pages, and comprehensive migration guides. Conversely, purely promotional materials and top-of-funnel glossaries are rarely cited. Generative engines inherently surface definitions directly within their conversational outputs, resulting in a near-zero click-through rate for traditional glossary web pages. To adapt, technical SEOs must execute a “Glossary Pivot,” extracting definitions from standalone pages and embedding them directly into high-traffic, bottom-of-funnel decision content using formal FAQ schema markup.

The Financial Implications of Architectural GEO

The financial implications of architectural GEO are substantial, as evidenced by recent market case studies. The software company Tally.so fundamentally transformed its acquisition model by re-engineering its content for generative search. By deploying highly structured, decision-support comparison articles—such as objective evaluations of competitive form builders—the company successfully positioned itself within the semantic pathways of ChatGPT and Perplexity, driving an Annual Recurring Revenue (ARR) increase from two million to three million dollars within a four-month period primarily through AI-driven acquisition. Similarly, the B2B technology services firm Broworks executed a comprehensive GEO audit, injecting statistics, expert quotes, and optimized semantic structures into their existing assets. Within ninety days, generative engines accounted for ten percent of their total organic traffic, and this highly qualified, intent-driven traffic converted at a remarkable twenty-seven percent.

Semantic HTML and the LLMs.txt Protocol

Technical SEO has evolved from merely ensuring page indexation to functioning as a sophisticated “Semantic Translator” for AI agents. Large Language Models and their associated crawling bots rely heavily on the Document Object Model (DOM) to infer the hierarchical relationship and relative importance of information on a webpage. Excessive reliance on nested container divisions—colloquially known as “div soup”—or an over-dependence on client-side JavaScript rendering severely disrupts the parsing algorithms utilized by AI crawlers.

Optimal technical hygiene requires the rigorous application of HTML5 semantic tags. Elements such as <article>, <section>, <header>, <aside>, and <nav> provide explicit, machine-readable signals that allow an AI to instantly distinguish primary, high-value content from boilerplate navigational or promotional elements. Furthermore, a strict, logical progression of heading tags—from <h1> through <h6>—is computationally mandatory. Skipping heading levels creates structural ambiguity within the DOM tree, paralyzing the LLM’s internal summarization protocols and hindering its ability to construct an accurate outline of the document’s semantic flow. Predictable and descriptive heading anchors are equally vital, as they facilitate in-answer deep linking, allowing the AI to direct users precisely to the most relevant passage within a lengthy document.

The Emergence of the Machine-Readable Web: LLMs.txt

While semantic HTML improves parsing, the sheer complexity of the modern web remains a significant computational hurdle for AI ingestion. In response, the developer and SEO communities have rapidly standardized a new protocol: the llms.txt file. Proposed in late 2024 by researchers at Answer.AI, this standard provides a simplified, Markdown-formatted directory specifically engineered for AI consumption. The adoption rate has been unprecedented; as of late 2025, over 844,000 domains had implemented the protocol, including major infrastructure providers like Stripe, Cloudflare, and Anthropic.

Located strictly at the root directory of a domain, the llms.txt file acts as a curated informational map. It explicitly instructs Large Language Models on which pages contain the most authoritative, fact-dense information, effectively bypassing the noise of marketing copy, cookie banners, and dynamic scripts. The distinction between this new protocol and legacy files is crucial for technical strategy.

Comparison of foundational web discovery and parsing protocols:

- robots.txt

- Target Audience: Traditional Web Crawlers & AI Scrapers

- Primary Function: Controls access via explicit exclusion directives (allow/disallow).

- Data Format: Plain Text

- sitemap.xml

- Target Audience: Search Engine Indexers

- Primary Function: Lists all universally accessible URLs for comprehensive indexation.

- Data Format: XML

- llms.txt

- Target Audience: Large Language Models & AI Agents

- Primary Function: Curates and prioritizes high-value content via explicit inclusion and summarization.

- Data Format: Markdown (Text)

The implementation of llms.txt requires strict adherence to Markdown formatting standards. The file must be served with a text/plain MIME type and utilize UTF-8 character encoding. The document initiates with an <h1> tag defining the primary entity or brand, immediately followed by a Markdown blockquote (>) summarizing the organization’s core value proposition. Subsequent <h2> sections categorize the most critical digital assets, such as definitive product documentation, technical APIs, and organizational history. Each included URL is paired with a concise, informative description to provide the AI with preliminary context before it initiates a fetch request.

For platforms hosting highly complex technical documentation, advanced implementations of the protocol involve providing clean, Markdown-rendered versions of web pages at identical URLs, simply appending a .md extension. This ensures that when an AI agent follows a link from the llms.txt directory, it receives raw, instantly processable text, entirely bypassing the computational overhead of HTML DOM parsing. Organizations like Vercel utilize this architecture to group documentation by high-intent paths—such as “Quickstarts” and “Core Concepts”—ensuring that AI assistants can instantly locate and synthesize the exact parameters required by developers.

Advanced Crawler Management and Robots.txt Architecture

The proliferation of artificial intelligence has necessitated a highly nuanced approach to crawler management. Historically, webmasters utilized robots.txt as a binary tool: allowing benign search engines while blocking malicious scrapers. Today, the taxonomy of AI bots requires a bifurcated strategy, distinguishing between “Training Data Scrapers” and “Agentic AI Bots”.

Training Data Scrapers are automated programs designed for bulk data acquisition. Bots such as GPTBot (OpenAI), ClaudeBot (Anthropic), Applebot-Extended (Apple), Bytespider (ByteDance), and CCBot (Common Crawl) systematically traverse the web to ingest massive datasets, which are subsequently used to pre-train future iterations of Large Language Models. These scrapers consume significant server bandwidth and raise valid concerns regarding intellectual property ingestion.

Conversely, Agentic AI Bots are real-time search and retrieval mechanisms.

When a user asks a question in an AI interface, bots such as Claude-SearchBot, ChatGPT-User, and PerplexityBot are dispatched autonomously to fetch live web pages, extract specific facts, and return the data directly to the user’s active context window.

A pervasive and highly detrimental error in early GEO strategies involved the blanket blocking of all AI-associated user agents. While this effectively protected proprietary data from bulk training ingestion, it simultaneously eradicated the brand’s visibility in real-time generative search interfaces, cutting off a rapidly growing channel for highly qualified traffic. According to HTTP Archive data from 2025, over 94% of the 12 million tracked websites utilize a robots.txt file, yet a significant portion apply overly broad exclusion directives that inadvertently block Agentic AI Bots.

To execute a sophisticated GEO technical strategy, organizations must deploy precise robots.txt configurations that block bulk training scrapers while explicitly permitting search and retrieval agents.

An optimal, modern robots.txt configuration for generative visibility is structured as follows:

Disallow bulk training data scrapers to protect IP

User-agent: GPTBot

Disallow: /

User-agent: ClaudeBot

Disallow: /

User-agent: CCBot

Disallow: /

User-agent: Google-Extended

Disallow: /

User-agent: Applebot-Extended

Disallow: /

User-agent: Bytespider

Disallow: /

Allow real-time Agentic AI bots for generative visibility

Note: PerplexityBot, Claude-SearchBot, ChatGPT-User, and OAI-SearchBot are permitted by omission, or can be explicitly allowed.

User-agent: PerplexityBot Allow: / User-agent: Claude-SearchBot Allow: / Strategic configuration for managing generative crawler access.

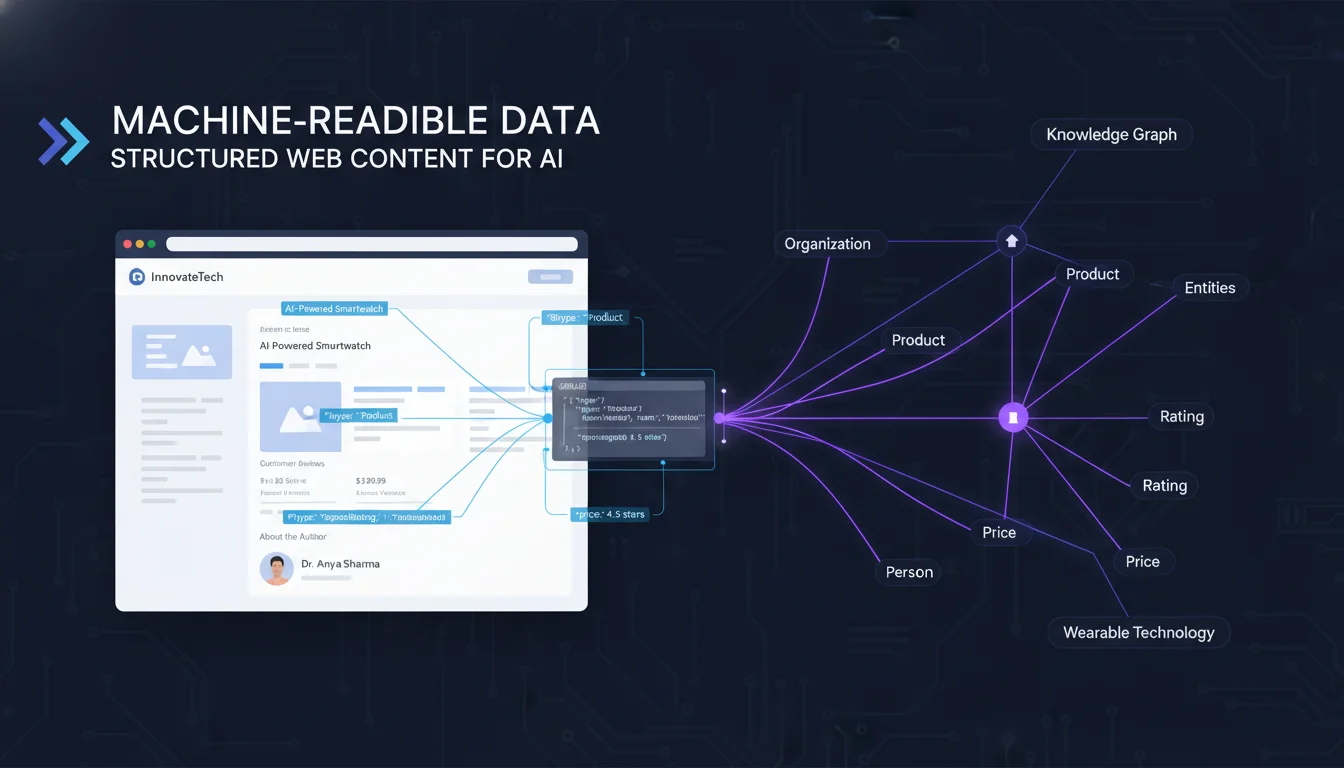

Entity-Based SEO, Schema.org, and Knowledge Graph Dominance

The transition to generative search marks the definitive end of keyword density and the absolute prioritization of entity-based SEO. Generative AI models do not rank strings of text; they map, rank, and synthesize semantic entities. An entity is defined as a distinct, recognizable, and persistent concept—a specific person, organization, product, or location—that is mapped within a global Knowledge Graph. To dominate AI search, a brand must transition from attempting to rank individual web pages to establishing unassailable “Concept Authority” over a network of related entities.

The technical mechanism for establishing this authority is the rigorous implementation of Schema.org structured data, specifically utilizing the JSON-LD format. Schema markup serves as the explicit, machine-readable translation layer, removing all computational ambiguity regarding the identity of a brand and the semantic relationships between the concepts discussed on a webpage. The empirical data from the Princeton study confirms that pages utilizing comprehensive structured data markup exhibit a 73% higher selection rate for AI citations compared to content lacking semantic declarations.

Mastering entity-based GEO requires the strategic deployment of highly specific Schema properties.

| Schema.org Property | Expected Data Type | Mechanism of Action in Generative SEO |

|---|---|---|

| Organization / Person | Text / URL | Establishes the core entity identity and validates overarching E-E-A-T credentials. |

| sameAs | URL | The ultimate entity consolidator; links a proprietary webpage to a universally recognized identity record (e.g., Wikidata, Wikipedia, Crunchbase). |

| about | Thing | Explicitly dictates the primary subject matter of a document, guiding accurate vector embedding. |

| mentions | Thing | Identifies secondary entities discussed within the text, mapping relational semantic clusters. |

| DefinedTerm | Text | Associates specific industry jargon with formal definitions, establishing deep topical authority. |

| inDefinedTermSet | URL / DefinedTermSet | Links a single defined term to a broader, comprehensive glossary or ontology. |

| termCode | Text | Provides a specific identification code for a term, heavily utilized in technical or medical documentation. |

Critical Schema.org properties for LLM entity resolution and mapping.

The sameAs property is the fulcrum of entity SEO. It provides the URL of a definitive reference webpage that unambiguously confirms the entity’s global identity. By linking a brand’s website to its corresponding Wikipedia page, Wikidata entry, or official LinkedIn profile, the webmaster mathematically proves to the AI that the local entity is identical to the entity recognized in broader, cross-referenced global databases.

The Foundational Role of Wikidata

Because Large Language Models are trained on massive datasets, they lean heavily on structured, open-source databases for their foundational, factual knowledge. Establishing an immaculate, data-rich presence on Wikidata is therefore a paramount strategy for anchoring a brand’s entity in the era of GEO. Unlike Wikipedia, which enforces highly subjective and stringent notability requirements, Wikidata functions strictly as a neutral, structured data repository accessible to any verifiable entity.

The process of establishing Wikidata authority requires precise, methodical execution:

- Existence Auditing: The process begins by querying Wikidata.org to determine if an item (identified by a unique Q-number) already exists for the organization, its founders, or its flagship products.

- Entity Creation: If no entry exists, the practitioner must manually create a new item. This requires populating highly specific relational properties, including the instance of property (e.g., defining the entity as a software company or multinational organization), “official website,” “inception date,” “founder,” “headquarters location,” and an array of external “social media identifiers”.

- Verifiable Citations: Wikidata operates on a foundation of verifiability, not mere assertion. Every statement added to the database—from the founding date to the name of the CEO—must be explicitly backed by a reliable, third-party public source (a citation). LLMs rely on these citations to independently verify the legitimacy of the entity.

- Schema Integration: Once the Wikidata profile is populated and stable, the final step is to extract its unique Q-number URL and inject it directly into the sameAs array of the brand’s proprietary Organization JSON-LD markup. This action closes the semantic loop, seamlessly wiring the brand’s local web assets directly into the global semantic web.

The Multi-Platform Influence: Engineering the Citation Graph

A fundamental distinction between legacy SEO and Generative Engine Optimization lies in the scope of strategic influence. Traditional SEO was an insular discipline, focused almost entirely on optimizing a proprietary domain to rank higher on a single search engine. GEO, however, acknowledges that an LLM synthesizes its responses based on an algorithmic consensus drawn from across the entire web. If a brand solely publishes favorable information on its own proprietary website, the artificial intelligence models will weight that data with high skepticism, categorizing it as subjective marketing. To achieve a dominant Share of Model, a brand must engineer its presence across the specific third-party platforms that LLMs inherently trust and cite most frequently.

Data analysis spanning 2024 to 2025 highlights a massive divergence in the citation architectures of different AI platforms. A comprehensive strategy must account for these platform-specific biases.

| AI Platform | Primary Architectural Characteristic | Top Cited Domain | Percentage Share of Citations |

|---|---|---|---|

| ChatGPT | Heavily influenced by static training data and Bing index grounding. | Wikipedia | 47.9% |

| Perplexity | Prioritizes real-time retrieval; favors experiential consensus. | 46.7% | |

| Google AI Overviews | Highly diversified; correlates strongly with top-10 organic SERPs. | Reddit / Wikipedia | 21.0% |

| Copilot / Bing AI | Deeply grounded in the Microsoft Bing search index structure. | Wikipedia | ~35.0% |

Citation distribution data across major AI platforms (Aug 2024 – June 2025).

Perplexity’s Citation Engine: Perplexity operates by searching the web live and selecting victorious sources within milliseconds. It demonstrates a profound algorithmic bias toward recent content, authoritative domains, and community platforms that offer direct answers. Among Perplexity’s top ten sources, Reddit captures an overwhelming 46.7% share, followed by YouTube (13.9%), Gartner (7.0%), Yelp (5.8%), and LinkedIn (5.3%).

However, nuanced analysis reveals that this distribution shifts dramatically based on user intent. For research-intent queries, community forums dominate. But for buying-intent queries—where users are close to a purchasing decision—Perplexity and ChatGPT significantly reduce their reliance on Reddit (dropping to approximately 11%) and shift their citation focus toward professional B2B review aggregators, including G2, Trustpilot, PCMag, and Forbes. To influence Perplexity’s citation graph for commercial queries, brands must secure placements in authoritative industry publications, ensure their external review profiles are robust, and participate selectively in highly specific Reddit threads where deep technical comparisons occur.

Google AI Overviews (AIO): Google’s generative feature triggers frequently on conversational, long-tail queries. Unlike the more insular citation structures of its competitors, Google AIO remains deeply tethered to its legacy search index.

In fact, 93.67% of Google AIO responses cite at least one traditional top-10 organic search result. Therefore, standard SEO excellence—building high-quality, contextual backlinks and maintaining robust internal linking architectures—remains a highly relevant mechanism for securing AIO inclusion. Furthermore, the Princeton research identified that Google AIO exhibits a 156% higher selection rate for multi-modal content; pages that integrate dense text, custom descriptive images, and optimized YouTube video embeds are exponentially more likely to be selected as grounding sources.

It is also crucial for webmasters to understand the rapidly evolving regulatory landscape surrounding Google’s generative features. Following intense scrutiny and consultation from the UK’s Competition and Markets Authority (CMA) in early 2026, Google formally acknowledged the development of new, granular controls that will permit publishers to specifically opt out of generative AI features in Search. This proposed mechanism is designed to address publisher concerns regarding traffic cannibalization without forcing them to remove their content from traditional organic search results. While this provides publishers with an unprecedented level of control, brands that prioritize visibility and lead generation must carefully calculate the opportunity cost; opting out of AI Overviews may protect legacy traffic metrics, but it simultaneously cedes vital Share of Model to competitors who embrace the generative ecosystem.

Looking toward the immediate future, visual and voice integrations will further complicate the citation graph. By late 2025, voice search is projected to account for a massive volume of web queries, necessitating content structured with clear headings and conversational, long-tail answers. Simultaneously, multimodal models powering tools like Google Lens require descriptive alt-text and visually aligned structured data to comprehend context within images. Furthermore, advertising platforms are adapting; Google Ads has introduced Brand Recommendations alongside Cross-Media Reach tracking, indicating that paid and organic brand visibility are becoming increasingly integrated in the AI era.

Advanced Measurement: The 4-Step NLP SEO Framework

Because generative engines are probabilistic, non-deterministic systems, traditional rank tracking methodologies are functionally obsolete. A generative AI model may cite a brand in position two on a Tuesday, position four on a Wednesday, and omit the brand entirely on a Thursday due to minor fluctuations in server load, prompt phrasing, or index updates. Making strategic business decisions based on a single-point measurement constitutes what industry analysts term the “Rank Tracking Fallacy”.

A robust GEO measurement framework requires evaluating performance systematically across four distinct phases: Research, Content, Technical, and Measurement. The overarching Key Performance Indicator (KPI) across these phases is Share of Model, which quantifies the frequency of a brand’s appearance across generative platforms for targeted category prompts.

The Statistical Sampling Methodology

To counteract the probabilistic volatility of LLMs, measurement protocols must rely on rigorous statistical confidence intervals. Running a single prompt provides statistical noise; running a prompt 60 to 100 times across various platforms establishes a highly reliable data baseline. If a brand is mentioned in 35 out of 100 automated iterations, the true mention rate operates within a mathematically predictable 25-45% confidence interval.

Brands must engineer a controlled “Prompt Library” containing 10 to 20 core prompts categorized by the stages of the buyer’s journey:

- Awareness Stage: Informational queries such as “What are the best [category] tools?” or “Who are the leading [category] vendors?”.

- Consideration Stage: Comparative queries such as “Compare vs for [specific need]” or “What are the pros and cons of?”.

- Decision Stage: Validational queries such as “Is good for [specific use case]?” or “What do customers say about?”.

To account for natural language diversity, each seed query must be transformed into 3 to 5 synthetic variations, ensuring the tracking accounts for the myriad ways human users interact with conversational interfaces.

Tooling for LLM Monitoring

To execute statistical sampling at scale, a sophisticated ecosystem of AI monitoring platforms has emerged.

- Profound: Delivers enterprise-grade analysis of brand visibility and sentiment within LLM responses, providing highly accurate share-of-voice metrics and deep citation source discovery to identify which third-party sites are feeding the AI models.

- Semrush Brand Visibility / AI Toolkit: Simplifies cross-platform tracking by aggregating traditional SEO metrics with generative data to output a unified “AI Visibility Score.” Critically, it tracks follow-up queries, revealing brand endurance as users refine their search intent.

- Peec AI and XFunnel: Peec AI monitors mention frequency multiple times daily and benchmarks against competitors, while XFunnel goes further by mapping AI visibility directly to lead generation, calculating the precise return on investment (ROI) of generative mentions.

- Sprout Social and Brand24: Because LLMs continuously ingest real-time social data via APIs (like X/Twitter and Reddit firehoses), these tools monitor the emotional tone of brand mentions across the social web, allowing brands to manage the “conversational corpus” before it becomes embedded in an AI model’s training data.

- Ahrefs Brand Radar and Anvil: specialized tools that track exact prompts, responses, and citations across ChatGPT, Gemini, and Perplexity, providing dashboards for immediate tactical adjustments.

Prompt Engineering for Marketers and Strategists

While GEO focuses on optimizing content for the machine, marketers must also master the inverse process: optimizing instructions given to the machine. Prompt engineering has emerged as a highly technical, high-value discipline. It involves crafting precise queries to manipulate generative tools into producing highly specific, formatted outputs.

Mastering prompt engineering accelerates the implementation of GEO strategies. By utilizing distinct prompting techniques, marketers can rapidly execute complex workflows:

- Zero-shot Prompting: Providing a task to the LLM without any prior examples, relying solely on its internal training data.

- One-shot and Few-shot Prompting: Supplying the model with one or several examples of the desired format (e.g., providing an example of a perfectly structured semantic HTML table) to force the AI to recognize and replicate the pattern.

- Chain-of-Thought Prompting: Engineering a sequence of connected tasks where the AI carries context from previous entries, forcing the model to explicitly state its reasoning process before arriving at a conclusion. This is vital for generating complex entity relationship maps or buyer personas.

A meticulously engineered prompt consists of unambiguous instructions, extensive background context, and specific input data. By commanding the AI to act within specific constraints—such as defining audience pain points, generating initial keyword clusters, or building technical content outlines—marketers can drastically reduce the operational time required to deploy extensive GEO content architectures. However, human vetting remains absolutely critical. LLMs are notoriously prone to hallucinations, generating fictitious citations or historical inaccuracies that can severely damage a brand’s E-E-A-T signals if published without rigorous verification.

The Definitive Execution Guide: A 90-Day GEO Implementation Strategy

Phase 1: Baseline Establishment and Technical Remediation (Days 1–30)

The initiative must commence with the definition of the target vector. Organizations must compile a controlled Prompt Library comprising 15 to 20 bottom-of-funnel, decision-stage queries. Utilizing tools like Profound or Peec AI, practitioners should execute statistical sampling across ChatGPT, Perplexity, and Google AIO to capture baseline Share of Model, citation frequency, and sentiment scores.

Simultaneously, the technical infrastructure must be re-engineered. Webmasters must audit the DOM, eliminating redundant JavaScript and ensuring the deployment of a strict h1-h6 semantic HTML hierarchy. Comprehensive JSON-LD Schema markup—specifically Organization, Product, and FAQ schema—must be implemented, ensuring that sameAs properties link precisely to established Wikidata and Crunchbase profiles. The robots.txt file must be optimized to block bulk training scrapers while explicitly permitting search and retrieval bots like PerplexityBot. Finally, an llms.txt file must be deployed to the root directory, curating the most critical technical and organizational assets in raw Markdown for frictionless AI ingestion.

Phase 2: Content Optimization and Entity Reinforcement (Days 31–60)

With the technical foundation secured, efforts transition to content engineering.

Existing top-performing organic pages must undergo an “Extractability Audit.” Content must be restructured to conform to the Three-Layer Content Structure, ensuring direct, factual answers are positioned within the initial fifty words.

To trigger the visibility enhancements identified in the Princeton study, practitioners must systematically inject verifiable statistics, precise data points, and direct quotations from credentialed experts into the existing text. Concurrently, the content team should deploy four to eight new “money pages,” specifically focusing on comparative analysis, alternatives, and deep-dive use cases that satisfy the generative models’ preference for decision-support content. Where applicable, the Glossary Pivot strategy should be executed, embedding complex definitions directly into bottom-of-funnel pages utilizing structured FAQ schema.

Phase 3: Citation Engineering and Off-Site Syndication (Days 61–90)

The final phase recognizes that generative algorithms rely on external consensus. Utilizing LLM tracking tools, the organization must identify the top 50 third-party domains that the AI platforms are currently citing for the brand’s targeted queries.

A targeted editorial outreach campaign must be launched to secure placements, expert mentions, and verifiable citations on those specific, highly weighted publications. Furthermore, subject matter experts should authentically engage in relevant community forums—such as highly specific technical subreddits—to provide the experiential consensus that real-time models like Perplexity rely upon. To capture the multimodal preference exhibited by Google AI Overviews and Gemini, organizations should also produce and embed YouTube video content optimized to answer specific, conversational queries.

By abandoning the obsolete practices of keyword manipulation and embracing factual density, semantic architecture, rigorous entity definition, and off-site citation engineering, organizations can dominate the generative search landscape. Generative Engine Optimization requires a commitment to becoming an undeniable, verifiable node of truth within the global knowledge graph, ensuring that when an artificial intelligence synthesizes reality, the optimized brand is seamlessly and prominently woven into the narrative.