AI Search Overviews: Impact on B2B Traffic & SEO Strategy

Introduction to the Paradigm Shift in B2B Search Architecture

The digital marketing landscape, particularly within the Business-to-Business (B2B) sector, is undergoing an unprecedented structural transformation. For more than two decades, digital discovery and inbound marketing rested on a predictable, transactional equilibrium. Enterprises invested heavily in high-utility, educational content, from authoritative white papers and technical documentation to expansive, top-of-funnel blog networks. In exchange for this steady supply of information, traditional search engines, dominated by Google, rewarded these organizations with predictable, scalable streams of organic discovery traffic. That traffic was the lifeblood of the B2B marketing funnel, capturing prospects in the earliest phases of their research and nurturing them toward commercial conversion. Today, this paradigm is irreversibly fracturing.

The catalyst for this disruption is the rapid integration and widespread adoption of generative Artificial Intelligence (AI). Specifically, the deployment of Google’s AI Overviews (AIO) within the core Search Engine Results Page (SERP), compounded by the explosive growth of standalone Large Language Models (LLMs) such as ChatGPT, Claude, and Gemini, has fundamentally altered how information is retrieved. Search engines no longer function purely as digital librarians that refer users to an indexed catalog of external hyperlinks. Instead, they are becoming “answer engines” that parse user intent, synthesize data from across the web, and present definitive answers directly on the search platform itself. This shift from a “referral model” to an “extraction model” has profound consequences for websites that have historically relied on informational queries to drive brand visibility and lead generation.

The objective of this comprehensive report is to analyze the empirical collapse of traditional search metrics, utilizing diverse cohort data, including targeted assessments of 100-website performance baselines and expansive tracking studies across dozens of B2B organizations. By synthesizing data encompassing millions of queried impressions, shifting click-through rates, and emerging behavioral psychology, this analysis seeks to delineate the true economic and operational impact of AI Overviews. Furthermore, it outlines the strategic migration necessary for organizations to survive this transition, detailing the shift from traditional Search Engine Optimization (SEO) toward Generative Engine Optimization (GEO) and Answer Engine Optimization (AEO).

Baseline Web Infrastructure: Insights from 100-Website Cohort Studies

Before examining the specific behavioral disruptions caused by generative AI, it is imperative to understand the baseline technical and structural health of the digital ecosystem. AI engines rely on robust, highly optimized web infrastructure to crawl, parse, and synthesize information accurately. If a website fails fundamental technical thresholds, its probability of being cited within a real-time Retrieval-Augmented Generation (RAG) process or an AI Overview drops precipitously. Diverse studies analyzing cohorts of 100 websites across varying sectors reveal a stark reality regarding global digital readiness.

A foundational analysis of the top 100 websites globally revealed significant weaknesses in basic technical performance. Although site speed is widely acknowledged as a ranking factor, 66% of these top 100 websites still fail Google’s Core Web Vitals assessment. These metrics grade a page on loading performance (Largest Contentful Paint), interactivity (Interaction to Next Paint), and visual stability (Cumulative Layout Shift). Compounding this deficit, performance tracking of the same top 100 websites shows an average load time of 8.28 seconds. Because AI systems retrieve web pages in real time to formulate conversational answers, latency is a critical impediment. If an LLM crawler encounters a timeout or excessive delay caused by heavy code or uncompressed media, the site is effectively bypassed, forfeiting any opportunity for citation or brand visibility.
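To make the pass/fail logic concrete, the sketch below checks a page's field data against the "good" thresholds Google publishes for Core Web Vitals (LCP ≤ 2.5 s, INP ≤ 200 ms, CLS ≤ 0.1, assessed at the 75th percentile of page loads) and computes a cohort failure rate of the kind behind the 66% figure above. The `FieldMetrics` structure and the sample values are hypothetical; real field data would come from a source such as the Chrome UX Report.

```python
from dataclasses import dataclass

# Google's published "good" thresholds, assessed at the 75th percentile of page loads.
LCP_GOOD_S = 2.5    # Largest Contentful Paint, seconds (loading performance)
INP_GOOD_MS = 200   # Interaction to Next Paint, milliseconds (interactivity)
CLS_GOOD = 0.1      # Cumulative Layout Shift, unitless (visual stability)


@dataclass
class FieldMetrics:
    """Hypothetical container for one page's 75th-percentile field measurements."""
    lcp_s: float
    inp_ms: float
    cls: float


def passes_core_web_vitals(m: FieldMetrics) -> bool:
    """A page passes only if all three metrics are within the 'good' range."""
    return m.lcp_s <= LCP_GOOD_S and m.inp_ms <= INP_GOOD_MS and m.cls <= CLS_GOOD


def failure_rate(pages: list[FieldMetrics]) -> float:
    """Share of pages that fail the assessment (e.g. 0.66 for 66 of 100 pages)."""
    if not pages:
        return 0.0
    failing = sum(1 for p in pages if not passes_core_web_vitals(p))
    return failing / len(pages)


# Hypothetical sample pages.
fast_page = FieldMetrics(lcp_s=1.9, inp_ms=120, cls=0.05)
slow_page = FieldMetrics(lcp_s=4.8, inp_ms=350, cls=0.25)
print(passes_core_web_vitals(fast_page), passes_core_web_vitals(slow_page))
print(failure_rate([fast_page, slow_page]))
```

A single failing metric is enough to fail the whole assessment, which is why the check uses `and` across all three thresholds.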

Beyond pure latency, the structural usability and information accessibility of a website heavily influence algorithmic trust. A parallel study by the U.S. Department of Health and Human Services, which evaluated 100 websites strictly from a design and content usability perspective, concluded that only 42% of the surveyed properties met basic usability standards. Poor usability drives up bounce rates, signaling to search algorithms that a domain lacks authoritative or satisfying content. LLMs that draw on search results can inherit these historical engagement signals when weighting source reliability.

Similarly, evaluations within the academic and scientific communities highlight the need for unobstructed information architecture. A study of 100 foreign university library websites, drawn from institutions in the top 100 of the Leiden Ranking for openness, concluded that digital strategies must prioritize seamless, ungated information channels. This “open science” philosophy translates directly to the B2B commercial sector: content that is gated behind forms, or that is inaccessible because it depends on complex client-side rendering, is invisible to AI evaluation.
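One practical way to screen for the client-side rendering problem is to check whether key content appears in a page's raw HTML before any JavaScript runs. The sketch below uses Python's standard `html.parser` and assumes a crawler that reads the HTML response without executing scripts; crawler behavior varies by vendor, so treat this as a rough screen rather than a guarantee. The function name `visible_without_js` and both sample pages are hypothetical.

```python
from html.parser import HTMLParser


class StaticTextExtractor(HTMLParser):
    """Collects the text a crawler would see if it read raw HTML without running JavaScript."""

    SKIPPED_TAGS = {"script", "style"}

    def __init__(self) -> None:
        super().__init__()
        self._skip_depth = 0
        self.chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED_TAGS:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED_TAGS and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Text inside <script>/<style> is code, not content a reader (or crawler) sees.
        if self._skip_depth == 0 and data.strip():
            self.chunks.append(data.strip())


def visible_without_js(raw_html: str, key_phrases: list[str]) -> dict[str, bool]:
    """Report which key phrases appear in the static (pre-JavaScript) text of a page."""
    parser = StaticTextExtractor()
    parser.feed(raw_html)
    parser.close()
    text = " ".join(parser.chunks).lower()
    return {phrase: phrase.lower() in text for phrase in key_phrases}


# Hypothetical pages: one server-rendered, one that injects its content with JavaScript.
server_rendered = "<main><h2>API rate limits</h2><p>Enterprise plans allow 10,000 requests per minute.</p></main>"
client_rendered = '<div id="root"></div><script>renderPage("API rate limits")</script>'

print(visible_without_js(server_rendered, ["API rate limits"]))
print(visible_without_js(client_rendered, ["API rate limits"]))
```

The second page contains the same phrase, but only inside a script, so a non-rendering crawler would find an empty `<div>` and nothing to cite.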

Finally, managing a brand’s footprint extends far beyond its primary domain. Platforms like Vendasta illustrate the need for omnichannel reputation management, using AI to generate and monitor reviews across more than 100 distinct consumer and business review websites. This distributed presence matters because LLMs build their understanding of a company not just from its main site but from the aggregate sentiment scattered across hundreds of secondary platforms. Taken together, these 100-website cohort studies establish that technical speed, ungated accessibility, structural usability, and distributed reputation management form the prerequisite foundation for competing in an AI-dominated search landscape.

The Empirical Collapse of Traditional Click-Through Rates

With the baseline technical realities established, the focus shifts to the behavioral impact of generative AI summaries on organic search traffic. The statistical evidence on how AI Overviews affect B2B web traffic paints a severe picture of declining visibility for traditional blue-link results. The historical correlation between securing a high ranking and realizing a predictable volume of clicks has broken down.

A cornerstone Seer Interactive investigation from September 2025 provides rare insight into this collapse. Drawing on proprietary data from 42 enterprise organizations, the study focused on 3,119 highly vulnerable informational queries: exactly the type of educational content B2B marketers have historically used to capture top-of-funnel awareness. By tracking 25.1 million organic impressions and 1.1 million paid search impressions over a 15-month period from June 2024 to September 2025, the researchers documented dramatic behavioral shifts triggered by the presence of AI Overviews.

The findings show expected user engagement cut by roughly two-thirds. For queries where an AI Overview rendered, the organic click-through rate (CTR) fell by approximately 61% to 65%, from an already modest baseline of 1.76% to just 0.61%. Crucially, the disruption was not confined to organic listings. Paid search, long considered a reliable if expensive way to bypass organic volatility, suffered comparably: when an AI Overview occupied the top of the screen, paid CTR fell by 68%, from 19.7% to 6.34%. This suggests that generative AI does not merely distract users from organic results; it resolves their intent before they feel compelled to click even highly targeted advertisements. The study noted an especially sharp inflection point in July 2025, when paid CTR across the monitored cohort fell from approximately 11% to just 3% in a single month, signaling rapid user acceptance of Google’s generative capabilities.

Broader, industry-wide analyses corroborate these findings. A 12-month BrightEdge analysis concluding in February 2026 found that AI Overviews are no longer an experimental feature: they now trigger on 48% of all tracked commercial and informational queries, a 58% year-over-year increase in prevalence. When these summaries appear, the damage to top-ranking positions is severe. Ahrefs measured a 58% CTR drop for the number-one organic position when an AI Overview sits above it. This redefines the economics of digital marketing: position one, the gold standard of SEO for over two decades, now yields less than half the traffic the same ranking would have generated in early 2024.

Behavioral research from outside the SEO industry reinforces these tracking metrics. The Pew Research Center analyzed the browsing activity of 900 participants in March 2025 to observe how people actually interact with AI-altered search pages.

The study found that click-through rates on searches displaying AI Overviews fell to 8%, compared with 15% for searches without one, a 47% relative reduction in outbound clicks. Large publishers and digital organizations are feeling the economic consequences. Data from the Digital Content Next (DCN) consortium, covering 19 premium member companies, showed a 10% median year-over-year decline in referral traffic from Google Search, with traffic losses outpacing gains by a ratio of two to one. Similarly, the Professional Publishers Association in the United Kingdom documented CTR declines of 10% to 25% year-over-year, even though the affected websites maintained stable rankings.
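The relative declines quoted in this section can be reproduced from their before-and-after values. These are relative changes, not percentage-point changes: organic CTR falling from 1.76% to 0.61% is a drop of about 1.15 points but roughly 65% in relative terms. The helper below is a minimal illustration using only the figures cited above; it yields approximately 65%, 68%, and 47% for the Seer organic, Seer paid, and Pew figures respectively.

```python
def relative_decline(before: float, after: float) -> float:
    """Relative drop from `before` to `after`, expressed as a fraction of `before`."""
    if before <= 0:
        raise ValueError("baseline must be positive")
    return (before - after) / before


# (before, after) click-through rates in percent, as cited in this section.
cited_figures = {
    "Seer organic CTR": (1.76, 0.61),
    "Seer paid CTR": (19.7, 6.34),
    "Seer paid CTR, July 2025": (11.0, 3.0),
    "Pew click rate": (15.0, 8.0),
}

for label, (before, after) in cited_figures.items():
    points = before - after
    print(f"{label}: -{points:.2f} points, {relative_decline(before, after):.1%} relative decline")

# A 58% CTR drop at position one leaves 42% of the former clicks, i.e. less than half.
print(f"Position-one clicks retained: {1 - 0.58:.0%}")
```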

The Anatomy of Pixel 0 and Behavioral Screen Real Estate

To understand why click-through rates are collapsing so severely, one must analyze the physical mechanics of the search interface and the cognitive psychology of the modern internet user. The loss of traditional traffic is closely tied to interface design, specifically the concept of “Pixel 0.” AI Overviews occupy this topmost position on the results page, pushing traditional organic rankings, featured snippets, and even premium paid advertisements below the visible fold.

This spatial displacement is substantial and measurable. Current interface analytics show that AI Overviews consume approximately 42% of the screen on desktop monitors and 48% on mobile displays. Roughly two-thirds of all Google searches are conducted on mobile devices, and 81% of queries that trigger an AI Overview occur on mobile, so for most users the generative summary effectively is the search experience. When a user searches for a B2B software definition or a technical how-to on a smartphone, the AI Overview is often the only element visible without scrolling.

Users follow the path of least cognitive resistance. Eye-tracking and interaction data indicate that 70% of searchers read only the first few lines of an AI Overview, ignoring the lower two-thirds of the generated text. If a user’s informational need is satisfied within the first three seconds of viewing the Pixel 0 summary, the likelihood that they scroll down to a traditional organic link becomes vanishingly small. The interface also produces a seemingly paradoxical behavior: although most users do not read the full text, 88% will click the “show more” toggle to expand a truncated AI Overview, extending the AI’s footprint further down the page and pushing organic links deeper out of view.

Trust in this generative paradigm is solidifying rapidly among critical demographic cohorts. Specifically, individuals aged 25 to 34 utilizing mobile devices represent the highest adoption demographic, choosing the AI Overview as the final, definitive answer to their query in 50% of all instances. Broadly, 70% of consumers now state they trust generative AI search results at least somewhat, and 79% of all consumers expect to actively use AI-enhanced search features within the next twelve months. This signals that the behavioral shift is not a temporary anomaly or a forced beta test, but a permanent evolution in how human beings expect to interface with digital information.

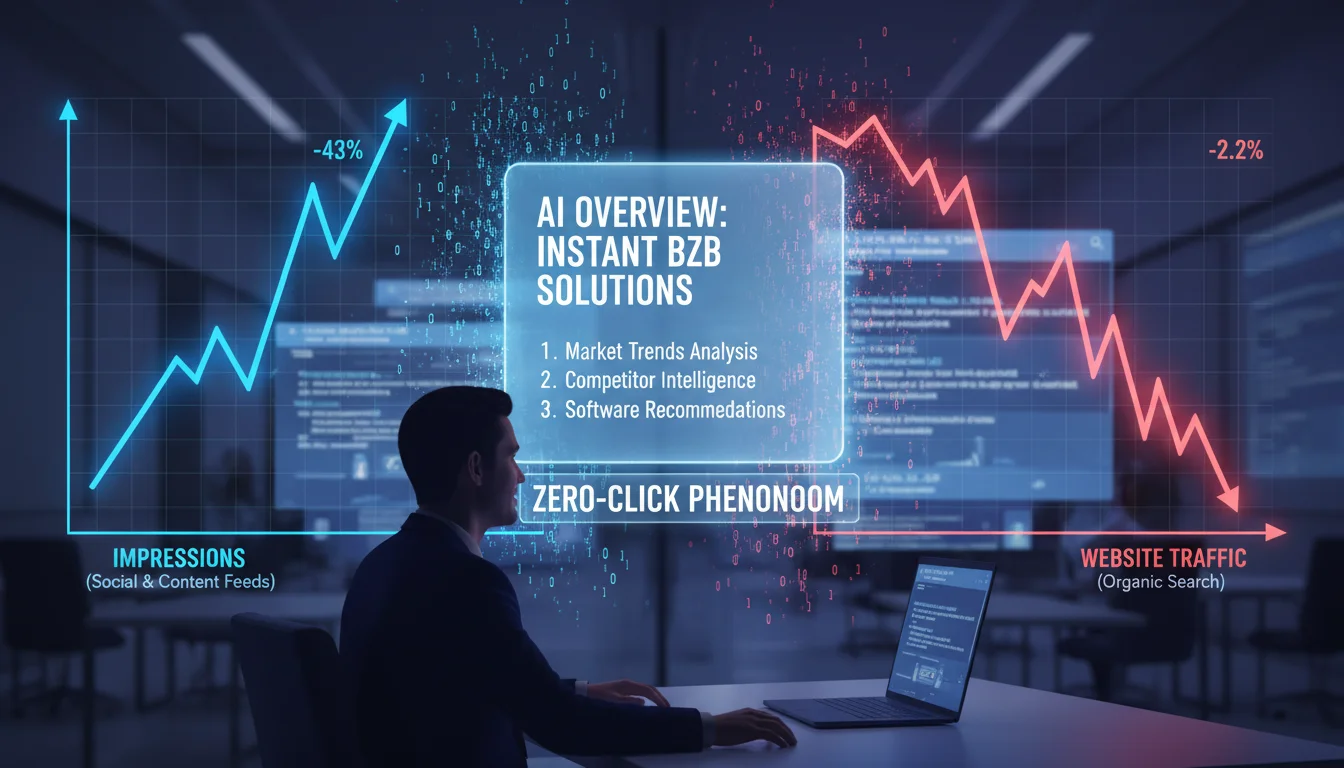

The Great Decoupling: Rising Impressions Against Falling Traffic

The combination of collapsing CTRs and the dominance of Pixel 0 has created a confusing analytical paradox for B2B marketing leaders, which the industry has dubbed “The Great Decoupling.” Historically, marketers could rely on a linear correlation: if a website’s impressions (the number of times it is displayed in search results) increased, its traffic (actual clicks) would increase proportionally. The Great Decoupling describes an environment in which search volume and query frequency continue to rise while the clicks flowing to external B2B websites decline significantly.

At the heart of this decoupling lies the “Citation Fallacy.” When Google and standalone LLMs initially rolled out generative answers, they integrated footnote citations to the external websites they scraped to formulate their responses. The prevailing optimistic assumption within the SEO community was that these citations would serve as a modernized referral traffic mechanism, replacing the standard blue links. The empirical data, however, decisively refutes this hypothesis. Rigorous behavioral tracking from Pew Research and independent agencies indicates that only a fractional 1% of AI Overviews result in a user actually clicking through to a cited source. Because the user is provided with a cleanly synthesized, highly readable answer natively on the search platform, the underlying source material becomes utterly redundant. The user has extracted the value without ever needing to visit the creator of that value. This cements the permanent paradigm shift from a referral-based internet ecosystem to an extraction-based one.

The aggregate effect of this decoupling on corporate web traffic is highly destructive. Traffic analyses show that 73% of B2B websites experienced net traffic losses between 2024 and 2025, with an average year-over-year decline of 34% across the affected properties. This real-world erosion is validating, and in many sectors outpacing, industry predictions such as Gartner’s widely circulated forecast of a 25% drop in traditional search engine volume by 2026. Today, 60% of Google searches on desktop computers are “zero-click” searches, meaning the user interacts with the SERP and leaves without clicking a single external link. On mobile devices, zero-click saturation is even higher, at 77.2%.
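Because zero-click rates differ by device, the share of searches that end without an external click depends on the device mix. The sketch below computes a weighted average, assuming the approximate two-thirds mobile share and the desktop and mobile zero-click rates cited in this report; the 10,000 monthly searches figure is a hypothetical input.

```python
def blended_zero_click_rate(device_share: dict[str, float], zero_click: dict[str, float]) -> float:
    """Average zero-click rate across devices, weighted by each device's share of searches."""
    if abs(sum(device_share.values()) - 1.0) > 1e-9:
        raise ValueError("device shares must sum to 1")
    return sum(share * zero_click[device] for device, share in device_share.items())


device_share = {"mobile": 2 / 3, "desktop": 1 / 3}   # approximate two-thirds mobile split
zero_click = {"mobile": 0.772, "desktop": 0.60}      # zero-click rates cited in this report

rate = blended_zero_click_rate(device_share, zero_click)
monthly_searches = 10_000  # hypothetical search volume for one topic
print(f"Blended zero-click rate: {rate:.1%}")
print(f"Searches ending without an external click: {monthly_searches * rate:,.0f}")
```

With these inputs the blended rate comes out to roughly 71.5%, so only about three in ten searches on the topic produce any external click at all, before any of those clicks are split among competing results.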

Granular Impact Assessment: Query Intent and Industry Sectors

While the macro-level data presents a uniform narrative of decline, a granular assessment reveals that the impact of The Great Decoupling is not distributed evenly. The severity of the traffic erosion correlates exceptionally strongly with two primary variables: the specific intent of the user’s search query, and the broader industry category of the website.

The most severe losses are concentrated in top-of-funnel, educational queries. Search intent is commonly grouped into three broad categories: Informational, Transactional, and Navigational. The table below illustrates how differently AI integration affects each intent type.

Search Query Intent Classification

Table 1: Forensic Breakdown of Click-Through Rate Impacts Based on Query Intent Types

| Intent Type | Pre-AI Average CTR Baseline | Post-AI Average CTR Reality | Estimated Net Traffic Impact | Core Characteristics and AI Vulnerability |
|---|---|---|---|---|
| Informational (How/What/Why) | 19% – 20% | 8% – 9% | -50% to -61% | Exceptionally vulnerable. AI excels at synthesizing definitions, outlining standard processes, and resolving top-of-funnel research without requiring external validation. |
| Transactional (Buy/Pricing) | ~15% | ~12% | -20% | Moderately impacted. While AI can compare options, users ultimately require access to secure vendor websites to finalize purchases, verify real-time pricing, or book official demonstrations. |
| Navigational (Brand Name) | Highly Variable | Highly Variable | -10% to -15% | Highly stable. Users explicitly typing a brand name are seeking a specific company portal, login page, or customer service hub, bypassing the need for AI summarization. |
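Because the impact varies so sharply by intent, a site's overall exposure depends on its query mix. The sketch below applies the midpoint of each range in the "Estimated Net Traffic Impact" column of Table 1 to a baseline click count per intent. Using midpoints is a simplifying assumption, and both portfolios are hypothetical.

```python
# Midpoints of the "Estimated Net Traffic Impact" column in Table 1 (simplifying assumption).
IMPACT_MIDPOINT = {
    "informational": -(0.50 + 0.61) / 2,   # -55.5%
    "transactional": -0.20,
    "navigational": -(0.10 + 0.15) / 2,    # -12.5%
}


def projected_clicks(baseline_clicks: dict[str, float]) -> dict[str, float]:
    """Apply each intent's midpoint impact to its baseline click count."""
    return {
        intent: clicks * (1 + IMPACT_MIDPOINT[intent])
        for intent, clicks in baseline_clicks.items()
    }


def blended_change(baseline_clicks: dict[str, float]) -> float:
    """Overall relative change in clicks across the whole portfolio."""
    before = sum(baseline_clicks.values())
    after = sum(projected_clicks(baseline_clicks).values())
    return (after - before) / before


# Hypothetical content-heavy B2B portfolio: most clicks come from informational queries.
content_heavy = {"informational": 7_000, "transactional": 2_000, "navigational": 1_000}
# Hypothetical brand-led portfolio with the opposite mix.
brand_led = {"informational": 2_000, "transactional": 3_000, "navigational": 5_000}

print(f"Content-heavy portfolio: {blended_change(content_heavy):.1%}")
print(f"Brand-led portfolio:     {blended_change(brand_led):.1%}")
```

With these inputs the content-heavy portfolio loses about 44% of its clicks versus about 23% for the brand-led one, which is consistent with content-led B2B verticals taking the heaviest losses.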

Within the B2B sector specifically, the divergence between industry verticals is equally stark. Industries that have historically relied on expansive content marketing to capture informational queries are suffering the most, and B2B Software as a Service (SaaS) is facing severe losses. SaaS market leaders that spent millions building large libraries of inbound educational content have reported traffic erosion of 70% to 80% for research-phase informational queries. Major enterprise platforms such as HubSpot have been documented experiencing organic traffic drops within this same 70% to 80% range.

Conversely, sectors dealing in highly specialized, legally sensitive, or strictly regulated information have seen a much softer impact.

The table below outlines these industry-specific estimates.

Estimated B2B Traffic Decline Correlated by Industry Vertical

| B2B Industry Vertical | Estimated Organic Traffic Decline | Underlying Algorithmic Rationale |
|---|---|---|
| B2B SaaS (General) | -34% to -80% | High reliance on easily summarized software definitions and workflow comparisons. |
| Marketing Tech (MarTech) | -40% to -60% | Massive saturation of generic best-practice content easily replaced by LLMs. |
| News / Business Info | -14% to -40% | Moderate impact; AI summarizes news, but users still seek original journalistic authority and deep analysis. |
| E-commerce (B2B) | -5% to -10% | Highly transactional intent protects the sector from pure informational displacement. |
| Healthcare / MedTech | Stable / Slight Drop | LLMs exhibit heavily guarded, cautious behavior (AI safety rails) around critical medical or technical advice, deferring to authoritative links. |

This disparity creates the “B2B SEO Paradox”: a scenario where an enterprise SaaS company might successfully improve its keyword rankings and domain authority over a twelve-month period, yet simultaneously experience a 50% collapse in actual pipeline traffic because the search interface itself has internalized the value of those rankings.

The Migration to Walled Gardens and Standalone LLMs

The decline in traditional organic traffic from Google is not solely a consequence of interface alterations; it represents a more fundamental migration in human behavior. B2B buyers are actively moving their research processes off traditional search engines entirely, favoring new digital environments that offer distinct advantages.

The most prominent shift is the ascendancy of standalone Large Language Models. Current behavioral surveys indicate that 80% of B2B technology buyers now use platforms like ChatGPT, Claude, and Gemini as their primary engines for vendor discovery, market research, and capability comparison. The scale and velocity of this adoption are without historical precedent. As of April 2025, OpenAI’s ChatGPT had over 800 million weekly active users, an 8x increase since October 2023. While ChatGPT remains dominant, alternative platforms are seeing explosive referral growth, indicating a highly experimental user base. In mid-2025, Grok recorded referral growth of 1,279%, while Claude sustained month-over-month momentum of 58%. DeepSeek, by contrast, saw sharp declines, suggesting that B2B users are highly sensitive to the quality of generative output.

This migration fundamentally rewrites the mechanics of B2B research. Historically, a procurement manager evaluating supply chain software would run a traditional search, open five to ten vendor websites in separate browser tabs, read through feature pages, and mentally assemble a comparison matrix. Today, this cumbersome “search and browse” phase can be bypassed entirely. The buyer replaces it with a “prompt and synthesize” interaction, instructing an LLM to perform the cognitive labor: “Compare Vendor A, Vendor B, and Vendor C regarding their API rate limits, implementation timelines, and enterprise pricing structures, formatted as a detailed table.” The LLM accomplishes in seconds what previously took hours of browsing, without the buyer ever visiting the vendor websites. This interaction drastically compresses the traditional marketing funnel, delivering decision-enabling intelligence upfront and deprioritizing website engagement.

Concurrently, a secondary behavioral shift is occurring in response to the proliferation of AI. As the broader internet becomes deeply saturated with commoditized, machine-written text, high-intent B2B traffic is seeking refuge in “walled gardens”—closed or heavily moderated platforms that offer verifiable human interaction, peer review, and experiential, operator-led knowledge.

Corporate blogs are losing ground to professional networks and niche forums. Current data indicates that 40% of B2B marketers now rate LinkedIn as their single most effective channel, capitalizing on the verifiable professional identity the platform mandates. Simultaneously, anonymous but highly moderated communities are wielding massive influence. An estimated 75% of B2B decision-makers acknowledge that discussions on Reddit directly influence their enterprise purchasing decisions. Buyers are actively seeking out specific industry subreddits to find unfiltered, highly technical opinions from actual practitioners, purposefully circumventing heavily sanitized, SEO-optimized vendor copy.

The Algorithmic Disconnect: Traditional Rankings vs. AI Citations

As search engines and standalone LLMs increasingly assume the role of “content personal shoppers,” the underlying algorithms determining which brands are highlighted have evolved significantly away from traditional SEO signals. For twenty years, achieving visibility required optimizing for specific keyword densities, building massive, volume-based backlink profiles, and matching the broad semantic intent of a generalized audience. While establishing domain authority remains a foundational necessity, the specific mechanism by which an AI selects its citations is entirely distinct from traditional PageRank.

AI models are precision extractors, not generalist rankers. They do not automatically prioritize the webpage with the highest number of referring domains. Instead, they prioritize entity clarity, contextual relevance, and the presence of specific, highly structured “chunks” of factual data that best answer a highly nuanced user prompt.
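Retrieval pipelines commonly split pages into small, self-contained passages before matching them against a prompt. How Google or OpenAI segment pages internally is not public, so the sketch below is only an assumption-laden illustration of heading-scoped chunking: each passage keeps its nearest heading as context and is capped at a hypothetical `max_words` limit. It shows why a page built from clearly headed, fact-dense sections yields cleaner, more quotable chunks than a sprawling guide.

```python
import re
from dataclasses import dataclass

HEADING = re.compile(r"^#{1,6}\s+(.*)")  # Markdown-style headings, used here as section markers


@dataclass
class Chunk:
    heading: str  # nearest preceding heading, kept as context for the passage
    text: str


def chunk_by_headings(doc: str, max_words: int = 120) -> list[Chunk]:
    """Split a document into heading-scoped passages of at most `max_words` words."""
    if max_words < 1:
        raise ValueError("max_words must be positive")
    chunks: list[Chunk] = []
    heading = ""
    buffer: list[str] = []

    def flush() -> None:
        # Emit the buffered section as one or more passages, then reset the buffer.
        words = " ".join(buffer).split()
        for start in range(0, len(words), max_words):
            chunks.append(Chunk(heading, " ".join(words[start:start + max_words])))
        buffer.clear()

    for line in doc.splitlines():
        match = HEADING.match(line)
        if match:
            flush()
            heading = match.group(1).strip()
        elif line.strip():
            buffer.append(line.strip())
    flush()
    return chunks


# Hypothetical product page content.
sample_page = """# Pricing
Plans start at $49 per seat per month.
# API rate limits
Enterprise plans allow 10,000 requests per minute."""

for chunk in chunk_by_headings(sample_page):
    print(f"[{chunk.heading}] {chunk.text}")
```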

This algorithmic divergence has produced what industry analysts call the “Position 21+ Phenomenon.” An analysis of ChatGPT’s search and retrieval behavior reveals a striking pattern: the LLM cites webpages ranking in traditional organic positions 21 and beyond almost 90% of the time. In other words, ChatGPT frequently bypasses the entire first and second pages of Google results to find the specific data node it requires. Similarly, tracking data for Google’s own AI Overviews indicates that 31% of the citations in its generative responses come from pages ranking beyond position 100 in the traditional index.

This phenomenon carries massive implications for B2B strategy. A highly technical, deeply nested PDF document buried on the fourth page of Google, or a highly specific comment in a niche forum, could unexpectedly govern the narrative an AI model provides to millions of global users regarding a specific product category. Conversely, a brand’s meticulously optimized, highly backlinked pillar page ranking securely in Position 1 might be entirely excluded from the AI Overview sitting directly above it. Rankings and AI citations are now two fundamentally different games. Traditional SEO aimed to satisfy the statistical average of a query, resulting in bloated, generalized “ultimate guides.” AI models deconstruct and bypass this generic content in favor of dense, specific expertise.

Navigating this requires analyzing source reliance: understanding exactly where LLMs gather their training data and real-time context. Community forums are prominent individual sources, with Quora the most frequently cited website in Google AI Overviews and Reddit close behind, yet commercial domains remain critical. In fact, 50% of the outbound links in ChatGPT’s GPT-4o responses point directly to business or service websites, indicating that LLMs rely heavily on corporate sites for authoritative, topical information.

AI models also rely heavily on Digital PR and broader media coverage, which account for approximately 34% of all AI citations. Mentions in prominent industry publications weigh heavily in an LLM’s assessment of brand authority and factual consensus. Social platforms, including LinkedIn articles and specific Reddit threads, account for nearly 10% of AI citations and act as real-time sentiment indicators. Consequently, in the generative era, unlinked brand mentions within trusted digital environments often carry significantly more algorithmic weight than a traditional hyperlink.

The Economic Paradox of the AI Search Visitor

While the aggregate volume of organic traffic is collapsing, and impressions fail to translate into clicks, the remaining traffic ecosystem is demonstrating profoundly different economic characteristics. A critical finding from extensive market tracking in 2025 is that raw traffic volume and actual business impact are now two entirely decoupled metrics.

Currently, despite the massive user bases of platforms like ChatGPT, AI search platforms account for less than 1% of total referral traffic across the web. For most B2B organizations, AI search functions almost exclusively as a top-of-funnel research and exploration channel. Analytics show near-zero direct conversions tracked immediately from platforms like ChatGPT: users enter the funnel via AI to gather intelligence and map the market, but rarely complete a commercial transaction directly from the chat interface. Traditional organic search remains the cornerstone of direct conversion, retaining the highest conversion power and consistently outperforming other digital channels, because users of traditional search show a clear, immediate intent to interact with a vendor’s site.

However, complex forecasting models project a rapid convergence.

Extensive data modeling anticipates that visitors from AI search platforms will surpass traditional search visitors for complex, research-heavy topics, such as digital marketing software or enterprise SEO platforms, by early 2028. If Google fully defaults its core experience to an “AI Mode” that overrides traditional results, this timeline will accelerate drastically.

More importantly, an AI-driven visitor carries substantially higher commercial value than a traditional visitor. Current conversion rate analyses reveal a striking economic paradox: the average AI search visitor is 4.4 times more valuable than a traditional organic search visitor.

The reason for this multiplier lies in lead qualification. Because the LLM has already given the user deep comparative data, answered specific technical implementation questions, and synthesized the competitive landscape, the user is well informed before ever clicking through to a vendor. When that user finally moves from the AI chat interface to the vendor’s website, they have already passed through the traditional top-of-funnel and mid-funnel education stages. They arrive as a highly qualified, bottom-of-funnel prospect ready to engage in a transactional conversation, view pricing, or request a contract. Thus, while the gross volume of clicks has decreased dramatically, the economic density and conversion likelihood of the surviving clicks have multiplied. By the end of 2027, AI channels are projected to drive economic value comparable to traditional search despite delivering a fraction of the raw traffic.
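The arithmetic behind the projected value parity can be sketched with the 4.4x figure. Treating it as a uniform per-visit multiplier (a simplification; real visitor value varies widely), AI visitors at 1% of visits would contribute only about 4.3% of total visitor value, and the two channels would deliver equal value once AI referrals reach 1 / (1 + 4.4), or roughly 18.5% of visits. The function names below are hypothetical.

```python
def ai_value_share(ai_visit_share: float, value_multiplier: float = 4.4) -> float:
    """Fraction of total visitor value contributed by AI-referred visits.

    Assumes a traditional visit is worth 1 unit and an AI visit `value_multiplier` units.
    """
    if not 0.0 <= ai_visit_share <= 1.0:
        raise ValueError("ai_visit_share must be between 0 and 1")
    ai_value = ai_visit_share * value_multiplier
    traditional_value = 1.0 - ai_visit_share
    return ai_value / (ai_value + traditional_value)


def parity_visit_share(value_multiplier: float = 4.4) -> float:
    """AI share of visits at which both channels deliver equal value.

    Solves s * m = 1 - s for s, giving s = 1 / (1 + m).
    """
    return 1.0 / (1.0 + value_multiplier)


print(f"AI share of value at 1% of visits: {ai_value_share(0.01):.1%}")
print(f"AI share of visits needed for value parity: {parity_visit_share():.1%}")
```

The parity point shows why "a fraction of the raw traffic" can still mean comparable value: under this model AI referrals only need to reach about one visit in five, not half of all visits.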

Brands that integrate themselves into the AI’s response narrative also gain a large, quantifiable competitive advantage. The Seer Interactive study identified a clear “survival path” within the disrupted SERP: while overall CTR drops when an AI Overview is present, brands cited within that AI Overview earn 35% more organic clicks and 91% more paid clicks than on queries where they are omitted from the generative summary. The AI effectively acts as a digital kingmaker. By citing a brand, the algorithm confers a powerful layer of perceived third-party trust on that company, making users considerably more likely to engage with that brand over its competitors.

Strategic Imperatives for 2026: Generative Engine Optimization

Prioritizing Exposure over Raw Traffic Volume

Because search algorithms are now the ultimate gatekeepers of information, and the interface actively suppresses outbound links, visibility is the new primary metric of digital success. If up to 77% of mobile searches end in zero clicks, a strategy predicated on driving millions of visitors to a blog is unlikely to succeed. Instead, organizations must embrace “Zero-Click Marketing”: ensuring that a brand’s name, its unique value propositions, and its relevance to the product category appear directly in the generative text of the LLM.

In B2B purchasing, the primary goal of discoverability is rarely an immediate credit card transaction; it is inclusion on a procurement manager’s shortlist. Even if a buyer never clicks through to the corporate website, conditioning the LLM to consistently associate a specific brand with a solution to the buyer’s problem equates to a massive strategic victory. Organizations must abandon legacy rank trackers and implement sophisticated AEO monitoring toolsets. Platforms like Morningscore are emerging to fulfill this need, offering dedicated AI Overview trackers and ChatGPT visibility metrics, allowing brands to monitor their presence in AI responses just as they historically tracked keyword rankings. Success requires tracking share of voice, positive sentiment clustering, and citation frequency within these models. Traffic is no longer the sole goal; being cited by the machine is the new top-of-funnel.
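Citation frequency and share of voice can be computed from a sample of AI answers collected for a fixed set of buyer prompts. The sketch below assumes each sampled answer has already been reduced to the set of brands it mentions or cites; the data format and vendor names are hypothetical, and it does not call any monitoring tool's API. Sentiment scoring would need a separate classification step not shown here.

```python
from collections import Counter


def citation_metrics(responses: list[set[str]], brand: str) -> dict[str, float]:
    """Simple AI-visibility metrics from sampled AI answers.

    `responses` holds, for each sampled prompt, the set of brands the answer
    mentioned or cited (so a brand counts at most once per answer).
    """
    if not responses:
        return {"citation_frequency": 0.0, "share_of_voice": 0.0}
    mentions = Counter(b for answer in responses for b in answer)
    total_mentions = sum(mentions.values())
    return {
        # Fraction of sampled answers that mention the brand at all.
        "citation_frequency": mentions[brand] / len(responses),
        # The brand's share of all brand mentions across the sample.
        "share_of_voice": mentions[brand] / total_mentions if total_mentions else 0.0,
    }


# Hypothetical sample: brands mentioned in four AI answers to buyer prompts.
sampled_answers = [
    {"VendorA", "VendorB"},
    {"VendorA"},
    {"VendorB", "VendorC"},
    {"VendorC"},
]
print(citation_metrics(sampled_answers, "VendorA"))
```

In this sample, VendorA appears in half of the answers (citation frequency 0.5) but accounts for a third of all brand mentions (share of voice about 0.33), which is why the two metrics are worth tracking separately.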

Publishing Irreplicable, Human-Centric Insights

LLMs, and the retrieval systems that feed them, work by collecting, summarizing, and recombining information that already exists. They cannot supply genuine first-hand experience, conduct primary field research, or generate novel, unpublished data. Dedicating marketing budgets to generic "How-to" guides, simple software definitions, or derivative listicles is therefore a poor investment. Such content mainly supplies free source material to the AI engines that then answer those queries without sending the searcher to the brand.

To earn citations as a primary, authoritative source, organizations must pivot their content operations toward original insights that AI cannot recreate independently. This means running proprietary customer surveys, publishing unique benchmark data, documenting rigorous, data-heavy case studies, and offering first-person, practitioner-led insights. This content must also be grounded in real-world operator experience; abstract, high-level "content hubs" produced by generalist copywriters no longer build algorithmic trust. Content must differentiate itself through authentic, highly technical discussion that signals E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness) to both human buyers and machine evaluators.

Intent-Based Keyword Mapping and Consolidated Architecture

The standard SEO practice of targeting keywords purely by highest monthly search volume is counterproductive in an AI-first environment. B2B organizations must instead map keywords and query clusters by nuanced user intent. This means anticipating which topics will trigger a generative summary, a comparison matrix, or a conversational follow-up prompt.
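A first pass at intent mapping can be as simple as keyword rules. Everything in the Python sketch below is hypothetical: the intent labels, the trigger patterns, and the sample queries are illustrative assumptions. A production system would more likely rely on inspecting live SERPs or on a trained classifier. Rules are checked in order, so the first matching intent wins.

```python
import re

# Hypothetical intent buckets and trigger patterns, checked in order.
INTENT_RULES = [
    ("pricing", re.compile(r"\b(price|pricing|cost|quote)\b", re.IGNORECASE)),
    ("alternatives", re.compile(r"\b(vs|versus|alternatives?|compare|comparison)\b", re.IGNORECASE)),
    ("implementation", re.compile(r"\b(how to|set ?up|integrate|integration|api|configure)\b", re.IGNORECASE)),
    ("definition", re.compile(r"\b(what is|meaning|definition|explained)\b", re.IGNORECASE)),
]


def classify_intent(query: str) -> str:
    """Return the first intent whose pattern matches the query, else 'unclassified'."""
    for intent, pattern in INTENT_RULES:
        if pattern.search(query):
            return intent
    return "unclassified"


def cluster_by_intent(queries: list[str]) -> dict[str, list[str]]:
    """Group queries into intent clusters, preserving input order within each cluster."""
    clusters: dict[str, list[str]] = {}
    for query in queries:
        clusters.setdefault(classify_intent(query), []).append(query)
    return clusters


if __name__ == "__main__":
    sample = [
        "soc 2 automation pricing for startups",
        "acme vs globex soc 2",
        "how to integrate soc 2 evidence collection with jira",
        "what is continuous compliance monitoring",
        "soc 2 audit checklist",
    ]
    for intent, group in cluster_by_intent(sample).items():
        print(intent, group)
```

Queries that land in "unclassified" are often the most useful output, because they flag intents the rule set has not yet anticipated.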

This requires a recalibration of value that prioritizes highly specific, low-volume keywords. In technical B2B niches, a long-tail query with only 30 searches a month may carry far more commercial value than a broad industry keyword generating 30,000 searches. Content assets must also be ruthlessly consolidated. Rather than publishing ten thin, overlapping blog posts that target minor variations of a keyword, marketing teams should build a single, highly authoritative "pillar" page. That page should contain distinct, cleanly formatted sections, each explicitly designed to answer a specific intent: basic definitions, pricing structures, complex workflows, competitor alternatives, and technical implementation guides.
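The 30-versus-30,000 comparison becomes concrete once expected value is written out as searches × click-through rate × lead rate × close rate × deal value. Every rate and the deal value in the Python sketch below are invented for illustration and do not come from the studies cited in this document. That includes the assumption that an AI Overview depresses the broad keyword's click-through rate. Substitute your own funnel data before drawing conclusions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class KeywordScenario:
    """Expected monthly pipeline value for one keyword (all inputs hypothetical)."""
    name: str
    monthly_searches: int
    click_through_rate: float  # share of searches that click through to the site
    lead_rate: float           # share of visits that become qualified leads
    close_rate: float          # share of leads that become customers
    deal_value: float          # average contract value

    def expected_monthly_value(self) -> float:
        return (
            self.monthly_searches
            * self.click_through_rate
            * self.lead_rate
            * self.close_rate
            * self.deal_value
        )


# Hypothetical inputs chosen only to illustrate the calculation.
long_tail = KeywordScenario("long-tail technical query", 30, 0.30, 0.10, 0.20, 50_000)
broad = KeywordScenario("broad industry keyword", 30_000, 0.02, 0.001, 0.05, 50_000)

if __name__ == "__main__":
    for scenario in (long_tail, broad):
        print(f"{scenario.name}: ~{scenario.expected_monthly_value():,.0f} per month")
```

With these made-up inputs, the long-tail query yields roughly 9,000 per month and the broad keyword roughly 1,500. The point is the structure of the calculation, not the specific numbers.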

This consolidated architecture must also serve multiple decision-makers at once. Unlike most consumer purchases, B2B purchases are typically made by a buying committee. A single content asset must therefore contain tactical data for the software practitioner, workflow comparisons for the mid-level manager, and high-level ROI and scalability metrics for the executive sponsor. Concentrating this much value in a single page increases the probability that an LLM will use it as a primary reference source.

Technical Refinement for Machine Readability and Tracking

Despite the paradigm shift toward generative exposure, foundational technical SEO remains critically important. AI platforms depend on crawlers and search indexes to find and ground the content they summarize, and those indexes are sometimes their own and sometimes those of established search engines. ChatGPT's search features draw on Bing's index, and Google's AI Overviews and AI Mode are built on the standard Google Search index. Claude's web search reportedly draws on Brave's search index. Optimizing a website's technical foundation therefore supports discoverability across all of these platforms simultaneously.
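This dependency on crawlers suggests one concrete check: confirm that robots.txt does not unintentionally block the agents behind these platforms. The Python sketch below uses the standard library's `urllib.robotparser`. The vendors have published the user-agent tokens listed here, but the tokens change over time and should be verified against each vendor's current documentation. The robots.txt content and URLs are hypothetical. Robots.txt only states a policy that compliant crawlers follow; it says nothing about whether a page is actually indexed.

```python
from urllib.robotparser import RobotFileParser

# Crawler tokens published by vendors at the time of writing; verify before relying on them.
AI_RELEVANT_AGENTS = ("Googlebot", "Bingbot", "OAI-SearchBot", "GPTBot", "ClaudeBot", "PerplexityBot")


def crawler_access(robots_txt: str, url: str, agents: tuple[str, ...] = AI_RELEVANT_AGENTS) -> dict[str, bool]:
    """Report, per user agent, whether robots.txt permits fetching `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())  # parses in-memory text; no network call
    return {agent: parser.can_fetch(agent, url) for agent in agents}


# Hypothetical robots.txt that blocks OpenAI's training crawler site-wide
# and keeps every crawler out of /admin/.
EXAMPLE_ROBOTS = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /admin/
"""

if __name__ == "__main__":
    page = "https://example.com/pillar/soc2-automation"
    for agent, allowed in crawler_access(EXAMPLE_ROBOTS, page).items():
        print(f"{agent:15} {'allowed' if allowed else 'BLOCKED'}")
```

In this example only `GPTBot` is blocked from the pillar page. All listed agents would be refused any path under `/admin/`.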

However, the nature of technical optimization must evolve. Content must be structured to be easily "chunkable" and machine-readable. Retrieval systems typically split pages into discrete passages and extract individual facts rather than reading a page as one continuous narrative. Organizations should maintain a clean, consistent internal linking structure that clearly signals the semantic relationships between core product pages and adjacent industry topics. Consistent definitional terminology, clear entity alignment, and detailed schema markup are essential for helping AI engines interpret and extract facts accurately and for reducing the risk of misrepresentation.
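Schema markup is usually embedded as JSON-LD. The Python sketch below builds a schema.org `Organization` block whose `sameAs` links tie the brand entity to its profiles elsewhere. This is one common way to support the entity alignment described above. The company name, description, and URLs are hypothetical placeholders. This sketch makes no claim about which properties any particular AI engine actually reads.

```python
import json


def organization_jsonld(name: str, url: str, description: str, same_as: list[str]) -> str:
    """Return a <script> tag holding schema.org Organization markup as JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "description": description,
        "sameAs": same_as,  # other profiles that refer to the same entity
    }
    body = json.dumps(data, indent=2, ensure_ascii=False)
    # Escape "</" (valid JSON as "<\/") so a value such as "</script>" cannot close the tag early.
    body = body.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'


if __name__ == "__main__":
    print(organization_jsonld(
        name="Acme Compliance",  # hypothetical company
        url="https://www.example.com",
        description="SOC 2 automation platform for mid-market SaaS companies.",
        same_as=[
            "https://www.linkedin.com/company/example",
            "https://github.com/example",
        ],
    ))
```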

Furthermore, organizations must ensure their digital footprint is fully and rapidly crawlable without relying heavily on client-side JavaScript execution. Many AI crawlers do not execute JavaScript. Content that only appears after scripts run is therefore effectively invisible to the systems generating tomorrow's search results. As reinforced by the 100-website benchmark studies, extreme speed, unhindered accessibility, and clean code are no longer merely best practices; they are the baseline requirements for existence in the generative era.
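A quick way to approximate what a non-rendering crawler sees is to inspect the raw HTML the server returns, before any JavaScript runs. The Python sketch below uses only the standard library's `html.parser`. It extracts the text outside `<script>` and `<style>` elements and reports which key phrases are missing from it. The sample pages and the phrase are hypothetical, and fetching the HTML is left out. Passing this check does not guarantee that any particular crawler will index the content.

```python
from html.parser import HTMLParser

SKIP_TAGS = {"script", "style"}


class _RawTextExtractor(HTMLParser):
    """Collect text a non-JS crawler could read, ignoring script and style bodies."""

    def __init__(self) -> None:
        super().__init__()
        self._skip_depth = 0
        self.chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in SKIP_TAGS:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in SKIP_TAGS and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.chunks.append(data)


def missing_without_js(raw_html: str, key_phrases: list[str]) -> list[str]:
    """Return the key phrases that do not appear in the unrendered HTML text."""
    extractor = _RawTextExtractor()
    extractor.feed(raw_html)
    extractor.close()
    # Normalize whitespace and case so line breaks in the markup don't hide matches.
    text = " ".join(" ".join(extractor.chunks).split()).lower()
    return [p for p in key_phrases if " ".join(p.split()).lower() not in text]


# Hypothetical pages: one injects its heading with JavaScript, one ships it in the HTML.
CLIENT_RENDERED = """<html><body><div id="root"></div>
<script>document.getElementById('root').textContent = 'SOC 2 automation pricing';</script>
</body></html>"""
SERVER_RENDERED = "<html><body><h1>SOC 2 automation pricing</h1></body></html>"

if __name__ == "__main__":
    for label, page in (("client-rendered", CLIENT_RENDERED), ("server-rendered", SERVER_RENDERED)):
        print(label, missing_without_js(page, ["SOC 2 automation pricing"]))
```

The client-rendered page reports the phrase as missing because it exists only inside a script. The server-rendered page reports nothing missing.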

Conclusion

The findings synthesized from comprehensive market tracking, behavioral studies, and performance analyses between 2024 and 2026 clearly indicate an irrevocable shift in the digital economy. The era of relying on traditional organic search as a predictable, high-volume, top-of-funnel engine for B2B traffic has definitively concluded. The aggressive expansion of AI Overviews, which now command the critical Pixel 0 interface, has combined with the mass migration of B2B buyers to standalone LLMs to precipitate a catastrophic decline in traditional discovery clicks. This reality has effectively decoupled brand visibility from raw website traffic. It has given rise to an extraction-based internet where information is synthesized on-platform, leaving traditional referral links largely obsolete for research-based queries.

However, this disruption is not synonymous with the death of digital marketing; it is an evolutionary filter. While the aggregate volume of traffic is shrinking rapidly, the economic density of the surviving, AI-qualified traffic has multiplied significantly. B2B organizations that cling to outdated, volume-centric SEO playbooks face profound stagnation and eventual algorithmic erasure. Survival and dominance in this new ecosystem demand a radical, immediate strategic pivot toward Generative Engine Optimization. Enterprise leaders must ruthlessly prioritize the publication of proprietary, human-centric data. They must architect their digital assets for fast, frictionless machine extraction. They must also aggressively cultivate brand authority, including unlinked brand mentions, across trusted, multi-platform ecosystems. Ultimately, the future of B2B discovery is no longer about driving millions of users to a corporate domain; it is about ensuring that the brand serves as the definitive, unassailable source of truth embedded within the machine-generated answers of the future.