Agency Static Site Workflows: Optimization for Speed & Security

The architectural paradigm of web development has undergone a foundational shift, moving away from monolithic, server-side rendered applications toward decoupled, API-driven static site architectures. Often categorized under the Jamstack umbrella, this methodology separates the frontend presentation layer from backend business logic and databases, relying instead on Static Site Generators (SSGs), headless Content Management Systems (CMS), and globally distributed Content Delivery Networks (CDNs). For digital agencies managing portfolios of dozens or hundreds of client websites, this shift introduces a complex orchestration challenge. Agencies must balance consumer demand for sub-second page loads and dynamic interactivity against the non-negotiable requirements of robust cybersecurity, stringent access controls, and automated infrastructure management.

The operational reality of a modern digital agency dictates that infrastructure can no longer be managed manually. Deploying, securing, and maintaining hundreds of distinct client environments requires a meticulous approach to Infrastructure as Code (IaC), multi-tenant DNS provisioning, and continuous integration/continuous deployment (CI/CD) pipelines. Furthermore, the introduction of serverless functions—which execute backend logic on demand without persistent server maintenance—has simultaneously solved the problem of dynamic content on static sites and introduced entirely new vectors for cyberattacks, resource exhaustion, and billing overages.

This comprehensive report evaluates the critical components of modern static site workflows optimized for digital agencies. It examines the intricate interplay between advanced rendering strategies, enterprise-grade headless CMS integrations, edge computing performance, serverless security protocols, and automated domain management. By synthesizing these highly technical domains, the subsequent analysis provides a strategic and operational framework for agencies seeking to architect scalable, high-performance, and secure digital portfolios in 2026 and beyond.

Architectural Foundations: Generators, Repositories, and Content Management

The foundation of any static site workflow rests on the strategic selection of the Static Site Generator (SSG) and the structural organization of the underlying codebase. The modern SSG ecosystem has matured significantly, evolving beyond simple Markdown-to-HTML compilers into sophisticated, full-stack web frameworks capable of granular rendering control and advanced routing.

Frameworks such as Next.js, Nuxt, SvelteKit, and Astro currently dominate the landscape, each offering a distinct architectural philosophy aimed at specific performance bottlenecks. Next.js is built on React and maintained by Vercel. It provides extensive capabilities for complex web applications and enjoys first-class, highly optimized support on Vercel's platform. Astro, by contrast, has gained substantial traction by popularizing the “island architecture,” which ships zero JavaScript to the browser by default. Instead of hydrating the entire page, Astro hydrates only the interactive components that need it, substantially reducing the main thread's Total Blocking Time.

Other frameworks prioritize different developer experiences or performance metrics. Qwik, a newer entrant, has drawn significant attention for its radical approach to web performance. It aims for near-instant interactivity without hydration cost by using “resumability.” The application state is serialized into the HTML, and a component's JavaScript is loaded only when it is needed, typically when a user interacts with it. For documentation-heavy client sites or technical knowledge bases, specialized generators that follow a “docs-as-code” philosophy offer lightweight, highly optimized alternatives with minimal frontend engineering overhead. Examples include MkDocs, configured through a single YAML file, and Docusaurus, configured in JavaScript.

| Static Site Generator | Primary Framework Ecosystem | Core Architectural Philosophy & Best Use Case |
|---|---|---|
| Next.js | React | Full-stack application framework with granular rendering controls (SSG, SSR, ISR, PPR). Ideal for complex, data-heavy agency clients. |
| Astro | Framework Agnostic | Island architecture shipping zero JavaScript by default. Ideal for content-rich marketing sites and high-performance landing pages. |
| SvelteKit | Svelte | Compiler-first approach reducing runtime overhead. Ideal for highly interactive, lightweight applications. |
| Nuxt | Vue.js | Robust ecosystem for Vue developers, mirroring Next.js capabilities with strong module integration. |
| Qwik | Qwik | Resumability instead of hydration, deferring JavaScript execution until user interaction. Ideal for complex UIs that must keep startup JavaScript to a minimum. |
| MkDocs / Docusaurus | Python / React | Docs-as-code philosophy. Ideal for technical documentation, client manuals, and corporate wikis. |

Repository Architecture: Monorepo vs. Multi-Repo Strategies

For agencies managing multiple clients, the decision between a monorepo and a multi-repo architecture carries profound implications for developer velocity, cybersecurity, and long-term project maintainability.

A monorepo consolidates code from various services, shared UI libraries, and separate client applications into a single, centralized repository. This approach fosters rapid collaboration and simplifies dependency management. It also lets agencies enforce uniform linting, testing, and formatting standards across all client projects at once. A change to a shared, agency-wide UI component library can be propagated to every client site in a single commit, ensuring brand consistency and sharply reducing duplicated development effort. Build tooling such as Turborepo, typically layered on pnpm workspaces, orchestrates these multi-application builds efficiently. It caches the outputs of packages whose inputs have not changed, which accelerates CI/CD pipelines.
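The caching idea can be sketched in a few lines of Python. This is a simplified model, not Turborepo's actual hashing scheme. Each package's cache key hashes its name and source files together with the keys of its internal dependencies. Editing a shared UI package therefore changes the key of every client app that depends on it, while untouched apps are served from cache. The workspace shape, package names, and in-memory `cache` dict are hypothetical stand-ins for a real workspace and remote cache.

```python
import hashlib
from typing import Callable

# Hypothetical workspace model: package -> (files {path: contents}, internal deps).
Workspace = dict[str, tuple[dict[str, str], list[str]]]


def task_hash(pkg: str, ws: Workspace, memo: dict[str, str]) -> str:
    """Hash a package's name, sources, and (recursively) its dependencies' hashes."""
    if pkg not in memo:
        files, deps = ws[pkg]
        h = hashlib.sha256(pkg.encode() + b"\0")
        for path in sorted(files):  # sorted for a deterministic hash
            h.update(path.encode() + b"\0" + files[path].encode() + b"\0")
        for dep in sorted(deps):
            h.update(task_hash(dep, ws, memo).encode())
        memo[pkg] = h.hexdigest()
    return memo[pkg]


def build_all(ws: Workspace, cache: dict[str, str], build: Callable[[str], str]) -> list[str]:
    """Build packages whose hash is not cached yet; return the names actually rebuilt."""
    memo: dict[str, str] = {}
    rebuilt = []
    for pkg in sorted(ws):
        key = task_hash(pkg, ws, memo)
        if key not in cache:
            cache[key] = build(pkg)  # store the output under its input hash
            rebuilt.append(pkg)
    return rebuilt
```

After an edit to a shared `ui` package, a second run rebuilds `ui` and every app that depends on it. Unrelated client apps are restored from the cache.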

However, the monorepo approach introduces significant security and governance risks in a multi-client agency context. An overly permissive monorepo grants every internal developer access to the entire client portfolio, violating the principle of least privilege. Agencies often bring in freelance developers, external contractors, or offshore teams for a single client project. A monorepo makes it exceptionally difficult to restrict their access to that assignment alone, because mainstream Git hosting platforms grant read access per repository rather than per directory. A monorepo therefore buys coherence at the cost of strict access isolation.

Conversely, a multi-repo strategy places each client project in its own repository. Often even the headless CMS configuration and the frontend code of a single project are split into separate repositories. The primary advantage of this architecture is stronger security. Permissions can be granted per repository, so team members access only the codebases relevant to their current, authorized assignments. Multi-repos also make hand-offs straightforward. An agency can transfer a repository to a client’s internal IT team at the end of a project lifecycle without disentangling it from a shared corporate codebase. In practice, a hybrid approach often yields the best operational results. It pairs a multi-repo structure for individual client projects with a private package registry that publishes versioned releases of shared agency components.

Headless Content Management Systems and Non-Technical Workflows

Decoupling the frontend presentation layer from the backend content repository requires a headless CMS. For agency clients, the chosen CMS must balance API flexibility for the development team with an intuitive, visual workflow for non-technical marketing teams. Traditional monolithic CMS platforms like WordPress or Drupal bind the frontend presentation and backend database together. This coupling can lead to performance bottlenecks, a larger attack surface, and constrained omnichannel delivery. However, these systems offer the familiar “What You See Is What You Get” (WYSIWYG) editing experience that clients have come to expect.

Transitioning clients to a headless architecture requires mitigating the loss of this visual context. Platforms such as Storyblok address this friction by integrating real-time visual editors that display components inline, allowing marketers to assemble pages from pre-built “bloks” visually. Storyblok’s component-based architecture empowers non-technical users to execute routine publishing, scheduling, and updates without generating developer tickets or triggering manual code changes.

The financial and operational scaling of these headless platforms must be carefully monitored. Storyblok, for example, operates on a tiered pricing model that dictates user seats, API requests, and advanced feature availability.

| Storyblok Plan | Monthly Cost | Included Seats | Traffic / API Limits | Target Agency Use Case |
|---|---|---|---|---|
| Starter (Free) | $0 | 1 (Max 2) | 100GB / 100k requests | Proof of concepts, personal developer portfolios, very small client sites. |
| Growth | $99 | 5 (Max 10) | 400GB / 1M requests | Standard agency client websites, regional businesses, mid-sized e-commerce. |
| Growth Plus | $349 | 15 (Max 20) | 1TB / 4M requests | High-traffic content hubs, large marketing teams requiring multiple locales. |
| Premium & Elite | Custom | Custom | Fully customized | Global enterprises requiring 99.99% SLAs, Single Sign-On (SSO), and custom roles. |

For global enterprise clients requiring sophisticated governance rather than pure visual assembly, platforms like Kontent.ai provide structured content modeling and multi-stage approval workflows involving legal and regional compliance departments. These enterprise-grade systems utilize artificial intelligence to offer content recommendations and maintain strict audit trails, ensuring that localization and translation management across global markets do not disrupt the continuous deployment pipeline.

The implementation of a headless CMS fundamentally alters agency-client operational dynamics by enabling parallel workflows. Developers can iterate on frontend components, optimize CI/CD pipelines, and refine rendering strategies while content teams draft, preview, and approve campaigns within the CMS interface. Content is delivered exclusively via APIs, typically REST endpoints returning JSON or GraphQL. As a result, the same centralized content repository can populate a static website, a mobile application, and digital signage, realizing the “write once, publish everywhere” omnichannel strategy.
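As a minimal sketch of “write once, publish everywhere,” the Python below renders one content entry for two channels. The entry is shaped like a typical headless CMS JSON response. The field names (`title`, `body`, `cta_url`) and both renderer functions are hypothetical. A real project would follow its own CMS content model and fetch the entry over the CMS's delivery API.

```python
import html
import json
import textwrap

# Hypothetical delivery-API response for a single "promo" entry.
RAW = '{"title": "Spring Sale", "body": "Up to 30% off all items & more", "cta_url": "/sale"}'


def render_web(entry: dict) -> str:
    """Render the entry as an HTML fragment for the static site, escaping CMS text."""
    title = html.escape(entry["title"])
    body = html.escape(entry["body"])
    url = html.escape(entry["cta_url"])
    return f'<section><h2>{title}</h2><p>{body}</p><a href="{url}">Shop now</a></section>'


def render_signage(entry: dict, width: int = 24) -> list[str]:
    """Render the same entry as word-wrapped plain-text lines for a signage display."""
    return [entry["title"].upper(), *textwrap.wrap(entry["body"], width)]


entry = json.loads(RAW)
print(render_web(entry))
print("\n".join(render_signage(entry)))
```

Each channel owns only its presentation rules: HTML escaping for the web and line width for signage. The content itself is authored once in the CMS.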

Performance Engineering: Rendering Paradigms and Edge Computing

The primary value proposition of static site architectures is speed. Serving pre-compiled HTML, CSS, and optimized assets directly from a globally distributed Content Delivery Network (CDN) sharply reduces Time to First Byte (TTFB). It also improves Core Web Vitals, most directly Largest Contentful Paint (LCP). Responsiveness, measured since March 2024 by Interaction to Next Paint (INP) rather than First Input Delay (FID), depends more on how much JavaScript a page ships. However, as web applications grow increasingly dynamic, relying solely on static compilation becomes untenable. E-commerce platforms, authenticated client portals, and real-time data dashboards require data freshness that static builds cannot provide without triggering prohibitively long rebuilds.

The Evolution of Rendering Strategies

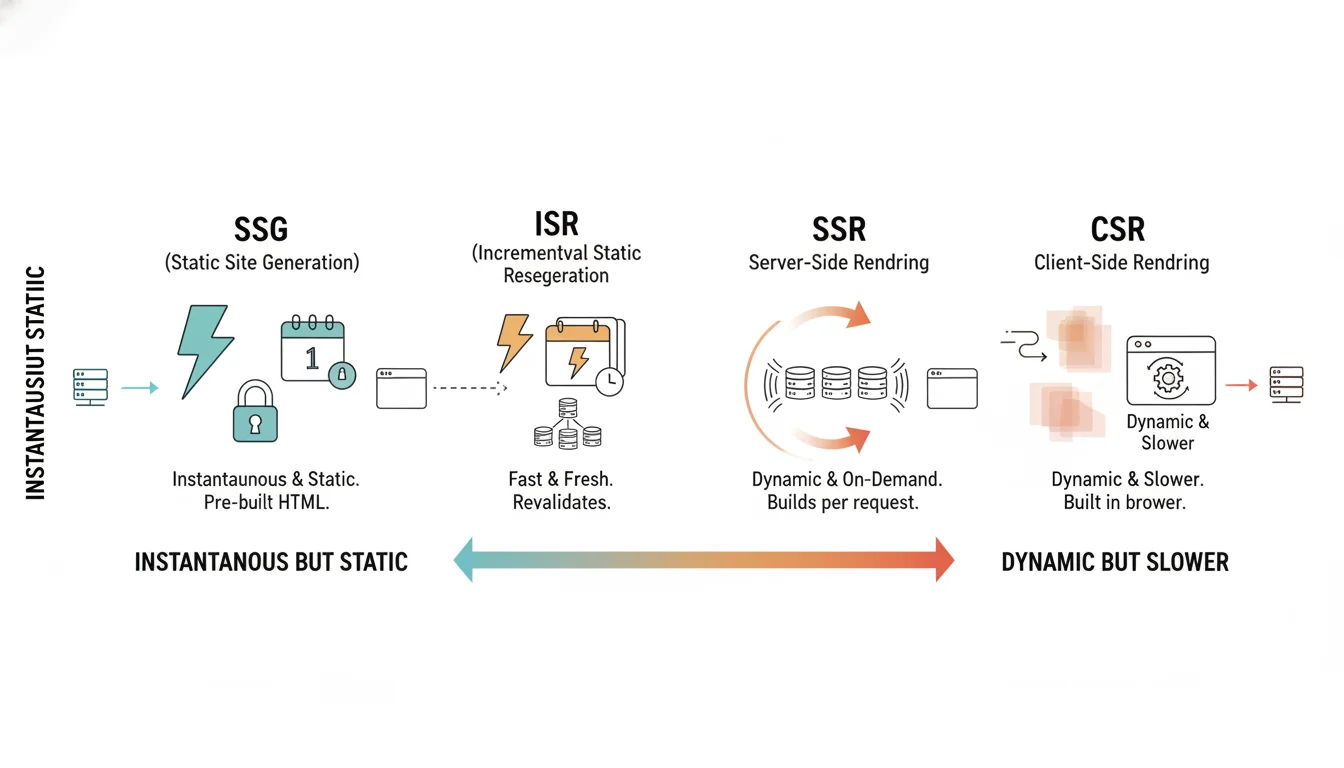

To resolve the inherent tension between delivery speed and data freshness, modern frameworks have evolved a spectrum of rendering strategies, allowing agencies to tailor the performance profile to the specific needs of the client.

Static Site Generation (SSG) compiles pages entirely at build time. This offers the highest possible performance and security profile, because no database queries or server-side code run at request time. However, the content remains stale until the next CI/CD build is triggered, typically by a CMS webhook. Conversely, Server-Side Rendering (SSR) generates pages dynamically on the server for every incoming request. This guarantees data freshness and allows user-specific personalization. The tradeoff is a slower TTFB: in the classic, non-streaming model, the server must resolve all database queries and external API calls before it can send the first byte of HTML to the browser.

Incremental Static Regeneration (ISR) was developed to bridge this gap. ISR regenerates individual static pages in the background, either after a defined time interval or on demand, without a full site rebuild. When a cached page is older than its revalidation window, the next visitor still receives the stale version while a fresh copy is generated. Subsequent visitors then get the updated page at static speed. The data is therefore still stale within the revalidation window. And because every visitor receives the same cached HTML, ISR cannot deliver real-time, user-specific personalization based on session state.
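The stale-while-revalidate behavior described above can be modeled with a small in-memory cache. This Python sketch is an illustration only, not Next.js's implementation. `render` stands in for a hypothetical page-generation function. Regeneration is deferred to an explicit `run_background` call instead of a real background worker. `now` is passed in so the timing is easy to follow.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Entry:
    html: str
    generated_at: float


@dataclass
class IsrCache:
    render: Callable[[str], str]  # hypothetical page generator
    revalidate: float  # seconds before a cached page counts as stale
    pages: dict[str, Entry] = field(default_factory=dict)
    pending: set[str] = field(default_factory=set)

    def get(self, path: str, now: float) -> str:
        entry = self.pages.get(path)
        if entry is None:
            # First request for this path: generate once, then cache.
            entry = Entry(self.render(path), now)
            self.pages[path] = entry
        elif now - entry.generated_at > self.revalidate:
            # Stale: still serve the old HTML, but queue a regeneration.
            self.pending.add(path)
        return entry.html

    def run_background(self, now: float) -> None:
        """Stand-in for the background worker that regenerates stale pages."""
        for path in sorted(self.pending):
            self.pages[path] = Entry(self.render(path), now)
        self.pending.clear()

    def revalidate_now(self, path: str, now: float) -> None:
        """On-demand revalidation, e.g. triggered by a CMS webhook."""
        self.pages[path] = Entry(self.render(path), now)
```

The first visitor after the window expires still sees the old page. Only requests after the regeneration completes see the new content.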

| Rendering Strategy | Delivery Speed | Data Freshness | Personalization Capability | Primary Drawback |
|---|---|---|---|---|
| SSG (Static) | Fastest | Stale until rebuild | Identical for all users | Requires full site rebuilds for content updates. |
| ISR (Incremental) | Very Fast | Stale within revalidation window | Identical for all users | Complex cache invalidation logic required. |
| SSR (Server-Side) | Slower TTFB | Always fresh | Highly personalized | High server compute costs; blocks initial render. |
| CSR (Client-Side) | Fast TTFB, slow FCP | Always fresh | Highly personalized | Weaker SEO; heavily dependent on client device capability. |

The Paradigm Shift: Partial Prerendering (PPR)

The historical binary choice between the instant delivery of SSG and the dynamic freshness of SSR has been challenged by Partial Prerendering (PPR). PPR is a rendering model introduced as an experimental feature of the Next.js App Router.

PPR allows a single page route to be simultaneously static and dynamic. During the build process, the framework generates a static HTML shell containing the structural layout, navigation elements, footers, and static marketing copy that rarely changes. When a user requests the page, this static shell is served instantly from the CDN edge, resulting in an optimal TTFB and immediate visual feedback to the user. Concurrently, the dynamic components—such as live inventory counts, real-time pricing, user-specific recommendations, or shopping cart states—are executed at request time on the server.

Instead of waiting for the dynamic data to resolve before responding, the server uses React Suspense boundaries to stream the dynamic chunks into the already-delivered static shell. These chunks travel over the same HTTP response as each piece of data becomes available. This architecture avoids client-side fetching waterfalls. Provided the Suspense fallbacks reserve appropriate space, it also limits layout shifts, combining static-level performance with SSR-style personalization. For agencies building complex e-commerce platforms or personalized client portals, mastering PPR reduces backend server load compared with full SSR and maximizes perceived performance.
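The streaming order can be illustrated with Python's `asyncio`. This is a conceptual model, not how React or Next.js implement PPR. A prerendered shell is emitted immediately. Each hypothetical dynamic “hole” (`fetch_price`, `fetch_cart`) starts right away and is emitted as its data resolves, in completion order rather than declaration order. In the real framework, each chunk would be a Suspense boundary's HTML streamed over one HTTP response.

```python
import asyncio
from typing import AsyncIterator

STATIC_SHELL = (
    "<header>Nav</header><main>"
    "<div id='price'>Loading...</div><div id='cart'>Loading...</div>"
    "</main>"
)


async def fetch_price() -> tuple[str, str]:
    await asyncio.sleep(0.05)  # simulated slow pricing API
    return "price", "<span>$49</span>"


async def fetch_cart() -> tuple[str, str]:
    await asyncio.sleep(0.01)  # simulated session/cart lookup
    return "cart", "<span>2 items</span>"


async def stream_page() -> AsyncIterator[str]:
    """Yield the static shell first, then each dynamic fragment as it resolves."""
    # Dynamic work starts immediately, concurrently with sending the shell.
    tasks = [asyncio.create_task(fetch_price()), asyncio.create_task(fetch_cart())]
    yield STATIC_SHELL  # prerendered at build time; no data dependencies
    for next_done in asyncio.as_completed(tasks):
        slot, fragment = await next_done
        # Stand-in for the streamed HTML that fills a Suspense boundary.
        yield f"<template data-slot='{slot}'>{fragment}</template>"


async def collect() -> list[str]:
    return [chunk async for chunk in stream_page()]


if __name__ == "__main__":
    for chunk in asyncio.run(collect()):
        print(chunk)
```

The cart fragment arrives before the price fragment because it resolves first, even though it was declared second.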

Edge Computing and Global Distribution

To maximize the efficacy of these rendering strategies, agencies must leverage advanced edge computing and CDN configurations. Modern CDNs operate on geographically distributed Points of Presence (PoPs) equipped with edge servers. These servers not only cache static assets but increasingly execute serverless logic closer to the end user, reducing the latency inherent in transatlantic or transpacific data routing.

Optimizing global delivery requires rigorous adherence to caching best practices. Establishing clear performance baselines across major geographic regions is crucial. A common target is a global TTFB under 200 milliseconds, and Google's “good” threshold for LCP is 2.5 seconds. The cache hit ratio can be maximized by caching static assets aggressively with long Time-to-Live (TTL) values and fingerprinted (content-hashed) URLs. A new URL, and therefore a cache miss, then occurs only when a file's contents actually change. Conversely, user-specific dynamic content must be kept out of shared caches, or cached under keys that include the relevant session attribute, to prevent data leaking between user sessions. Responses can be excluded from shared caches with Cache-Control: private or no-store. Furthermore, HTTP/3, which runs over QUIC, reduces latency and the impact of packet loss on unreliable mobile networks. TLS 1.3 early data (0-RTT) lets returning clients send a request in the first flight of the handshake. Because early data can be replayed, it should be accepted only for idempotent requests.
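A minimal sketch of that caching policy follows. It assumes a build that emits fingerprinted filenames such as `app.3f9a1c2b.js`; the eight-hex-digit pattern and the path rules are hypothetical conventions, not any CDN's API. The function picks a Cache-Control header per response. Fingerprinted assets get immutable, long-TTL caching. HTML gets a short shared TTL. Personalized responses get no shared caching at all.

```python
import re

# Hypothetical fingerprint convention: name.<8 hex chars>.ext
FINGERPRINTED = re.compile(r"\.[0-9a-f]{8}\.(?:js|css|woff2|png|jpg|webp|avif)$")
ONE_YEAR = 31_536_000  # seconds


def cache_control(path: str, personalized: bool) -> str:
    """Choose a Cache-Control header for a response."""
    if personalized:
        # Never let a shared CDN cache store one user's data for another.
        return "private, no-store"
    if FINGERPRINTED.search(path):
        # The URL changes whenever the content changes, so it can be cached for a year.
        return f"public, max-age={ONE_YEAR}, immutable"
    # HTML and unversioned files: browsers treat them as stale immediately,
    # CDNs may serve them for 60 s and briefly serve stale while refetching.
    return "public, max-age=0, s-maxage=60, stale-while-revalidate=300"
```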

Serverless Architecture: Security Posture and Threat Mitigation

While static sites inherently boast a reduced attack surface due to the absence of a traditional, continuously running backend server and direct database connections, the introduction of serverless functions to handle dynamic interactions—such as form submissions, third-party payment processing, and authenticated API requests—reintroduces significant cybersecurity vulnerabilities. Serverless environments shift the security burden; developers no longer manage operating system patches or physical hardware, but they assume full, unmitigated responsibility for application code, Identity and Access Management (IAM), and strict data sanitization.

The Serverless Threat Landscape

The ephemeral, event-driven nature of serverless computing creates a unique and challenging threat model. Functions execute in transient environments that are created and destroyed on demand. As a result, traditional long-running security agents, endpoint monitoring tools, and perimeter firewalls offer little visibility. Attackers exploit this gap by targeting over-privileged IAM roles, vulnerable third-party open-source dependencies, and unvalidated event inputs.

Furthermore, serverless architectures are uniquely vulnerable to Denial of Wallet (DoW) attacks. In a traditional infrastructure, a massive Distributed Denial of Service (DDoS) attack overwhelms the server's resources and takes the application offline. In an auto-scaling serverless environment, the platform instead scales out, up to the account's concurrency limits, to absorb the malicious traffic. The application remains online, but the agency or the client is billed for millions of fraudulent function executions, producing large, unexpected overages.

Defensive Strategies and Least Privilege

Securing serverless functions demands a rigorous defense-in-depth approach. The cornerstone of serverless security is strict application of the Principle of Least Privilege. Each serverless function should be provisioned with a dedicated IAM role that grants only the minimum permissions its specific task requires. For instance, a function designed to read inventory data should simply not be granted permission to write to the database, access adjacent storage buckets, or invoke unrelated cloud services. In IAM, anything not explicitly allowed is denied by default.
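As an AWS-flavored illustration, the Python below builds the policy document for such a read-only inventory function. The JSON structure and the DynamoDB action names (`dynamodb:GetItem`, `dynamodb:Query`) follow AWS's IAM policy grammar. The region, account ID, and table name are hypothetical placeholders. Scoping `Resource` to one table and its indexes means the role cannot touch anything else. Write actions such as `dynamodb:PutItem` are denied simply by being absent.

```python
import json

# Hypothetical identifiers; substitute the client's real region, account, and table.
REGION = "eu-west-1"
ACCOUNT_ID = "123456789012"
TABLE = "inventory"


def read_only_table_policy(region: str, account_id: str, table: str) -> dict:
    """Least-privilege IAM policy: read a single DynamoDB table, nothing else."""
    table_arn = f"arn:aws:dynamodb:{region}:{account_id}:table/{table}"
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "ReadInventoryOnly",
                "Effect": "Allow",
                "Action": ["dynamodb:GetItem", "dynamodb:Query"],
                # The table plus its secondary indexes (Query on an index needs this).
                "Resource": [table_arn, f"{table_arn}/index/*"],
            }
        ],
    }


if __name__ == "__main__":
    print(json.dumps(read_only_table_policy(REGION, ACCOUNT_ID, TABLE), indent=2))
```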

Additional critical mitigations include:

- API Gateways as Security Buffers: Serverless functions should rarely, if ever, be invoked directly from the public internet. Utilizing an API Gateway acts as a highly configurable reverse proxy, enforcing authentication, routing rules, and access control policies uniformly across all underlying microservices.

- Immutable Deployments and Runtime Hardening: Functions must be deployed as immutable, versioned artifacts, preventing any alteration of the executing code at runtime. Furthermore, specifying exact runtime environments (e.g., locking the environment to an exact Node.js release rather than a floating ‘latest’ tag) prevents unexpected execution behaviors. It also prevents broken dependencies caused by automated cloud provider environment updates.

- Secrets Management: Hardcoding API keys, database credentials, or encryption keys within function code or standard, plaintext environment variables is a critical, highly exploitable vulnerability. Secrets must be retrieved dynamically at runtime from encrypted, centralized vaults (such as AWS Secrets Manager or HashiCorp Vault), utilizing granular access policies that dictate precisely which specific function has the clearance to access which specific secret.

- Input Sanitization and Event Validation: Every event payload and HTTP request entering the function must be treated as untrusted. Validating payloads against a strict schema and using parameterized queries prevents code injection, parameter tampering, and SQL or NoSQL injection when the function queries a database.

- Warm Container Context Reuse: Developers must not assume that the execution context (such as the /tmp directory or global variables) is clean between invocations. To avoid cold starts, cloud providers often reuse “warm” containers for subsequent function calls. Sensitive data left in memory or on disk by one invocation can then leak into the next, violating data isolation.
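The validation and context-reuse points can be sketched together in a plain Python handler. The field names, limits, and the `handler(event)` shape are hypothetical, loosely modeled on a JSON-body HTTP event. A production function would more likely use a schema library. The point is the allowlist approach: reject unknown fields and check types and ranges. All per-request data stays in local variables rather than module-level globals that a warm container would carry into the next invocation.

```python
import json
import re

ALLOWED_FIELDS = {"sku", "quantity"}  # hypothetical order payload
SKU_PATTERN = re.compile(r"[A-Z0-9-]{3,32}")


class ValidationError(ValueError):
    pass


def validate_order(body: str) -> dict:
    """Parse and validate an untrusted JSON body against an allowlist."""
    try:
        data = json.loads(body)
    except json.JSONDecodeError as exc:
        raise ValidationError("body is not valid JSON") from exc
    if not isinstance(data, dict):
        raise ValidationError("body must be a JSON object")
    unknown = set(data) - ALLOWED_FIELDS
    if unknown:
        raise ValidationError(f"unexpected fields: {sorted(unknown)}")
    sku, qty = data.get("sku"), data.get("quantity")
    if not isinstance(sku, str) or not SKU_PATTERN.fullmatch(sku):
        raise ValidationError("invalid sku")
    # bool is a subclass of int in Python, so exclude it explicitly.
    if not isinstance(qty, int) or isinstance(qty, bool) or not 1 <= qty <= 100:
        raise ValidationError("quantity must be an integer from 1 to 100")
    return {"sku": sku, "quantity": qty}


def handler(event: dict) -> dict:
    # Request data stays local to this call; nothing is stashed in globals
    # that a reused ("warm") container could expose to the next invocation.
    try:
        order = validate_order(event.get("body") or "")
    except ValidationError as err:
        return {"statusCode": 400, "body": json.dumps({"error": str(err)})}
    return {"statusCode": 200, "body": json.dumps(order)}
```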

API Throttling and Rate Limiting

To protect against DoW attacks and external API rate limit exhaustion, agencies must implement aggressive throttling mechanisms. When static sites query third-party APIs (e.g., a CRM, a weather service, or a payment gateway) via a serverless function, uncontrolled frontend traffic spikes can lead to upstream API rate limit blocks, causing cascading application failures.

Implementing algorithms such as the Token Bucket or Leaky Bucket at the API Gateway or Edge Middleware level controls the flow of requests. In a Token Bucket, a bucket of fixed capacity holds tokens that are replenished at a constant, configured rate. Each incoming request consumes one token. If the bucket is empty, the request is immediately rejected with an HTTP 429 Too Many Requests status, preventing the backend from being overwhelmed. The capacity sets how large a burst is tolerated, while the refill rate sets the sustained throughput. A Leaky Bucket, by contrast, drains queued requests at a fixed rate, smoothing bursts rather than admitting them.
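A minimal token bucket in Python follows. It illustrates the algorithm and is not production-ready: it is single-process and not thread-safe, whereas real edge platforms keep this state in a shared store. The clock is injectable so behavior is deterministic in tests. `allow()` returning `False` corresponds to responding with HTTP 429.

```python
import time
from typing import Callable


class TokenBucket:
    """Classic token bucket: `capacity` bounds bursts, `rate` is tokens per second."""

    def __init__(self, capacity: float, rate: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.capacity = capacity
        self.rate = rate
        self.clock = clock
        self.tokens = capacity  # start full so an initial burst is allowed
        self.updated = clock()

    def allow(self, cost: float = 1.0) -> bool:
        now = self.clock()
        # Refill in proportion to elapsed time, never beyond capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.updated) * self.rate)
        self.updated = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False  # caller should answer 429 Too Many Requests
```

Keeping one bucket per key, such as a client IP or API key, in a dictionary or shared cache turns the same structure into a per-client limiter.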

Some modern edge platforms support more sophisticated per-domain and per-IP aggregation. For example, suppose an edge function is limited to 300 requests per minute to protect database spend. Such a platform may weight incoming IP addresses by recency and volume and block the IPs generating the most anomalous traffic first. This preserves the remaining allocation for legitimate, human users.
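The exact weighting heuristics are vendor-specific and not described here, so the following Python is only a simplified illustration of the idea. It counts requests per IP over a sliding one-minute window. When the total exceeds the budget, it blocks the heaviest senders first until the remaining traffic fits. Recency is approximated by the window alone. The 300-per-minute budget comes from the example above; the function name and data shapes are hypothetical.

```python
from collections import Counter


def ips_to_block(requests: list[tuple[float, str]], now: float,
                 budget: int = 300, window: float = 60.0) -> list[str]:
    """Return the IPs to block, heaviest first, so the remaining traffic fits the budget.

    `requests` holds (timestamp, ip) pairs; only those inside the window count.
    """
    counts = Counter(ip for ts, ip in requests if now - ts < window)
    total = sum(counts.values())
    blocked: list[str] = []
    # most_common() orders IPs by descending request volume.
    for ip, n in counts.most_common():
        if total <= budget:
            break
        blocked.append(ip)
        total -= n
    return blocked
```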

Securing Jamstack Forms and CSRF Mitigation

Forms remain the primary interactive vector on static sites, utilized for capturing leads, customer feedback, and sensitive payment data. Building and securing custom backend database infrastructure simply to process a contact form contradicts the efficiency and ethos of the static workflow. Consequently, agencies frequently rely on third-party Static Form Providers or managed serverless integrations.

The market offers a spectrum of solutions with varying degrees of developer control and integrated security:

- Lightweight API Endpoints (e.g., Basin, Getform): These tools provide a unique endpoint URL. The agency retains complete control over the frontend HTML `<form>` markup, styling, and validation, and simply points the form's `action` attribute at the external endpoint. These services handle backend data storage and email routing. They provide spam protection through built-in honeypots and reCAPTCHA integrations, plus virus scanning for file uploads. This approach is favored by digital agencies seeking minimal plugin overhead and maximum frontend control.

- Integrated Platform Forms (e.g., Netlify Forms): Hosting platforms like Netlify parse HTML files during the build step, identifying `<form>` tags that carry a `data-netlify` attribute. Netlify then provisions backend endpoints to capture submissions and applies proprietary machine-learning spam classifiers in the background. While highly convenient, this couples the application's form handling to a specific hosting provider, complicating future migrations.

- Specialized Middleware (e.g., Formspree): Tools like Formspree offer React-specific packages and extensive plugin ecosystems. These facilitate direct, secure integrations with enterprise CRMs like HubSpot, Zendesk, or Salesforce. They use automatic retries with exponential backoff to deliver data reliably even if the downstream CRM experiences a temporary outage.
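The retry pattern attributed to these services is straightforward to sketch. The Python below is a generic illustration, not Formspree's implementation. `send` is a hypothetical callable that forwards one submission to a CRM and raises on failure. The delays use “full jitter,” a random wait between zero and the exponential cap, so that many queued submissions do not retry in lockstep. `sleep` and `jitter` are injectable to keep tests fast and deterministic.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def retry_with_backoff(
    send: Callable[[], T],
    attempts: int = 5,
    base: float = 0.5,
    cap: float = 30.0,
    sleep: Callable[[float], None] = time.sleep,
    jitter: Callable[[float, float], float] = random.uniform,
) -> T:
    """Call `send`, retrying failures with capped exponential backoff and full jitter."""
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    for attempt in range(attempts):
        try:
            return send()
        except Exception:  # a real client would retry only transient errors (timeouts, 5xx)
            if attempt == attempts - 1:
                raise  # give up: surface the error, e.g. to a dead-letter queue
            ceiling = min(cap, base * 2 ** attempt)  # base, 2*base, 4*base, ... up to cap
            sleep(jitter(0.0, ceiling))
    raise AssertionError("unreachable")
```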

| Form Provider | Frontend Control | Built-in Spam Protection | Native CRM Integrations | Hosting Agnostic |
|---|---|---|---|---|
| Basin | Full HTML Control | High (Honeypot, reCAPTCHA, AV) | Webhooks / Zapier | Yes |
| Getform | Full HTML Control | Medium | Webhooks / Slack | Yes |
| Formspree | React Hooks / HTML | High (Formshield ML) | Deep native integrations | Yes |
| Netlify Forms | HTML Attribute parsing | High (Proprietary ML) | Webhooks / Serverless | No |

Regardless of the form handler chosen, protecting against Cross-Site Request Forgery (CSRF) is paramount, particularly for authenticated sessions or forms processing sensitive data. CSRF attacks trick an authenticated user’s browser into executing unwanted actions (such as submitting a malicious state-changing form) on a trusted site by exploiting the browser’s automatic inclusion of session cookies.

Mitigation strategies tailored for static sites utilizing serverless APIs include:

- Same-Site Cookies: Configuring session cookies with the `SameSite=Strict` or `SameSite=Lax` attribute instructs the browser not to send them along with cross-site requests. `Lax` still sends them on top-level GET navigations. This browser feature closes off the primary CSRF delivery vector for state-changing POST requests. It does not, however, protect against requests from a compromised sibling subdomain, which the browser treats as same-site.

- Double Submit Cookies and CSRF Tokens: Static sites lack a persistent, stateful backend server to generate, track, and validate unique tokens per page load. A stateless Double Submit Cookie pattern is therefore often employed. A random cryptographic value is sent both in a secure cookie and as a request parameter (or custom HTTP header), and the serverless function verifies that the two values match. Under the browser's Same-Origin Policy, an attacker's page cannot read the target site's cookies, so it cannot supply a matching parameter. An attacker who controls a sibling subdomain may still be able to plant a cookie. The signed variant, in which the token is an HMAC bound to the user's session, is therefore the more robust choice.

- Custom Request Headers: Requiring a custom HTTP header (e.g., `X-Requested-With`) on all state-changing POST or PUT requests adds another layer of protection. Browsers do not allow cross-origin requests to carry custom headers without a successful Cross-Origin Resource Sharing (CORS) preflight. As long as the API's CORS policy does not approve arbitrary origins, the browser blocks the forged request before it is sent.
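A minimal sketch of these checks, as they might run inside a serverless function, follows. The secret, the `session_id` input, and the cookie name `csrf` are hypothetical. The token is a random nonce plus an HMAC-SHA256 of the session ID and that nonce, so a value planted without knowledge of the secret fails verification. The cookie string carries the `SameSite` attribute discussed above. It is deliberately not `HttpOnly`, because the page's JavaScript must read it to echo it in a request header.

```python
import hashlib
import hmac
import secrets

SECRET = secrets.token_bytes(32)  # hypothetical; load from a secrets manager in practice


def _mac(session_id: str, nonce: str, secret: bytes) -> str:
    return hmac.new(secret, f"{session_id}!{nonce}".encode(), hashlib.sha256).hexdigest()


def issue_token(session_id: str, secret: bytes = SECRET) -> str:
    """Create a signed token of the form '<nonce>.<hmac(session_id, nonce)>'."""
    nonce = secrets.token_urlsafe(16)  # URL-safe alphabet, never contains '.'
    return f"{nonce}.{_mac(session_id, nonce, secret)}"


def csrf_cookie(token: str) -> str:
    # Readable by the page's JavaScript (no HttpOnly) so it can be copied into a header.
    return f"csrf={token}; Path=/; Secure; SameSite=Lax"


def verify(session_id: str, cookie_token: str, header_token: str,
           headers: dict[str, str], secret: bytes = SECRET) -> bool:
    """Accept a state-changing request only if every CSRF check passes."""
    if "x-requested-with" not in {k.lower() for k in headers}:  # custom-header check
        return False
    if not hmac.compare_digest(cookie_token.encode(), header_token.encode()):  # double submit
        return False
    nonce, _, mac = cookie_token.partition(".")
    # Signed variant: the token must have been issued for this session.
    return hmac.compare_digest(mac.encode(), _mac(session_id, nonce, secret).encode())
```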

Infrastructure as Code: DNS and Environment Orchestration

For an agency deploying hundreds of static sites, manually configuring DNS records, provisioning SSL certificates, and managing staging environments via graphical user interfaces is an unsustainable, highly error-prone methodology. A single misconfigured CNAME or A record caused by human error can result in extended client downtime, broken email routing, and severe reputational damage.

To achieve enterprise-grade scalability and reliability, agencies must aggressively adopt Infrastructure as Code (IaC) tools, primarily Terraform, to define, version, and orchestrate their entire domain and DNS architecture.

Declarative DNS Management via Terraform

Terraform’s declarative language allows DevOps engineers to define the desired end state of their infrastructure, including domains, routing records, and certificates, in version-controlled repositories. Using providers for DNSimple, Cloudflare, or AWS Route 53, agencies can automate the entire lifecycle of a client’s domain portfolio without relying on manually maintained internal documentation.

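For authoritative servers that support standard dynamic updates, HashiCorp's generic DNS provider manages records through the DNS UPDATE protocol (RFC 2136), authenticated with Transaction Signatures (TSIG). Vendor-specific providers such as Cloudflare or Route 53 call their vendors' authenticated APIs instead.

TSIG relies on a shared secret key and a keyed hash (HMAC) to prove that DNS messages originate from an authorized sender and were not altered in transit. This prevents unauthorized zone transfers and malicious record tampering.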
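The core idea behind TSIG can be shown with Python's `hmac` module: a MAC computed with a shared secret and checked by the receiver. This is deliberately simplified. Real TSIG (RFC 8945) computes the MAC over the wire-format DNS message plus specific TSIG fields such as the key name and a signing timestamp, and DNS libraries handle that encoding. The key name, secret, and message bytes below are hypothetical.

```python
import hashlib
import hmac

# Hypothetical TSIG key shared out-of-band between the Terraform runner and the DNS server.
KEY_NAME = "terraform-agency."
SECRET = b"shared-secret-for-demo-only"
FUDGE = 300  # seconds of clock skew tolerated, like TSIG's "fudge" field


def sign(message: bytes, signed_at: int) -> bytes:
    """HMAC-SHA256 over message, key name, and 48-bit timestamp (simplified TSIG)."""
    data = message + KEY_NAME.encode() + signed_at.to_bytes(6, "big")
    return hmac.new(SECRET, data, hashlib.sha256).digest()


def verify(message: bytes, signed_at: int, mac: bytes, now: int) -> bool:
    """Reject updates signed too long ago (replays) or whose MAC does not match."""
    if abs(now - signed_at) > FUDGE:
        return False
    return hmac.compare_digest(mac, sign(message, signed_at))
```

A tampered record, such as a changed IP address, fails verification. So does a timestamp outside the fudge window.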

A standard, best-practice Terraform configuration for a client environment might define:

- dns_a_record_set: Mapping the apex domain directly to the hosting provider’s IPv4 edge servers.

- dns_cname_record: Aliasing subdomains (like www or app) to the primary application load balancer or edge network.

- dns_txt_record_set: Automating the deployment of crucial security protocols like Sender Policy Framework (SPF) and Domain-based Message Authentication, Reporting, and Conformance (DMARC) records to secure client email routing and prevent domain spoofing.

- dns_mx_record_set: Configuring Mail Exchange preferences to route client communications accurately.

By utilizing Terraform modules, for_each iteration loops, and parameterized workspace configurations, an agency can rapidly stamp out identical, perfectly configured DNS setups for hundreds of clients, completely eliminating manual data entry. This methodology also provides a robust disaster recovery mechanism; if a client’s DNS zone is accidentally deleted or compromised, the entire architectural state can be reconstructed in seconds by simply reapplying the Terraform configuration.
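The for_each idea can be sketched in Python as data. One function expands a hypothetical client map into the record sets a per-client module would declare: an A record set at the apex, a www CNAME, SPF and DMARC TXT records, and MX records. The edge IP, hostnames, and mail exchangers are documentation placeholders. The output shape loosely mirrors the record types listed above but is illustrative, not a provider schema.

```python
from dataclasses import dataclass

EDGE_IPS = ["203.0.113.10"]            # hypothetical hosting-provider edge address
EDGE_CNAME = "edge.example-host.net."  # hypothetical platform hostname


@dataclass(frozen=True)
class Client:
    domain: str
    mail_hosts: tuple[str, ...]  # MX targets in preference order
    dmarc_report: str            # address for aggregate DMARC reports


def desired_records(client: Client) -> list[dict]:
    """Expand one client into the record sets a per-client module would declare."""
    zone = client.domain.rstrip(".") + "."
    return [
        {"type": "A", "zone": zone, "name": "", "addresses": EDGE_IPS, "ttl": 300},
        {"type": "CNAME", "zone": zone, "name": "www", "cname": EDGE_CNAME, "ttl": 300},
        {"type": "TXT", "zone": zone, "name": "", "txt": ["v=spf1 mx -all"], "ttl": 3600},
        {"type": "TXT", "zone": zone, "name": "_dmarc",
         "txt": [f"v=DMARC1; p=quarantine; rua=mailto:{client.dmarc_report}"], "ttl": 3600},
        {"type": "MX", "zone": zone, "name": "",
         "mx": [{"preference": 10 * (i + 1), "exchange": host}
                for i, host in enumerate(client.mail_hosts)], "ttl": 3600},
    ]


# The equivalent of for_each over a map of clients.
CLIENTS = {
    "client-one": Client("client-one.com", ("mx1.mail.example.", "mx2.mail.example."),
                         "dmarc@client-one.com"),
    "client-two": Client("client-two.com", ("mx1.mail.example.",), "dmarc@client-two.com"),
}
PLAN = {key: desired_records(c) for key, c in CLIENTS.items()}
```

Onboarding another client is one more entry in `CLIENTS`. Every client receives the same record layout.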

Multi-Tenant SSL and Custom Domain Routing

Many modern digital agencies develop white-labeled SaaS platforms, e-commerce site builders, or multi-tenant applications from a single, unified codebase. These applications must serve hundreds or thousands of distinct client vanity domains (e.g., client-one.com and client-two.com both routing transparently to the same underlying Next.js application). Provisioning SSL certificates and routing logic at this massive scale requires highly specialized infrastructure.

Solutions such as Cloudflare for SaaS and the Vercel Domains API automate this complex lifecycle entirely. In a standard multi-tenant operational flow:

- Configuration: The agency provisions a custom hostname via an API call (e.g., passing client-one.com to the edge provider).

- DNS Delegation: The client is instructed to add a single CNAME record at their registrar, pointing their domain to the agency’s primary platform proxy domain.

- Validation and Provisioning: The platform automatically verifies domain ownership. It uses the ACME protocol (RFC 8555) with certificate authorities such as Let’s Encrypt to automatically issue, bind, and continually renew the TLS certificate. Validation runs through an HTTP-01 challenge for standard hostnames, which requires the domain to be reachable on port 80. A DNS-01 challenge is required for wildcard certificates.

- Edge Routing: Once verification is complete, the global edge network intercepts traffic bound for client-one.com and terminates TLS at the edge node. It then proxies the request to the underlying tenant application, all while retaining the client’s custom domain in the browser address bar.
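On the application side, the edge routing step usually reduces to a lookup from the request's Host header to a tenant. Below is a minimal Python sketch. It assumes a hypothetical in-memory tenant table; a real platform would read it from a database or edge key-value store. For the HTTP-01 case it assumes a hypothetical map of pending challenge tokens to the key authorizations supplied by the ACME client. Per RFC 8555, the challenge response is served at `/.well-known/acme-challenge/<token>`.

```python
from typing import NamedTuple

ACME_PREFIX = "/.well-known/acme-challenge/"

# Hypothetical tenant table; a real platform would use a database or edge KV store.
TENANTS = {
    "client-one.com": "tenant_001",
    "www.client-one.com": "tenant_001",
    "client-two.com": "tenant_002",
}
# Hypothetical pending HTTP-01 challenges: token -> key authorization from the ACME client.
PENDING_CHALLENGES = {"tok123": "tok123.thumbprint-placeholder"}


class Response(NamedTuple):
    status: int
    body: str


def route(host_header: str, path: str) -> Response:
    # Normalize: drop any port and trailing dot, lowercase (assumes a hostname, not IPv6).
    host = host_header.split(":", 1)[0].rstrip(".").lower()
    if path.startswith(ACME_PREFIX):
        key_auth = PENDING_CHALLENGES.get(path[len(ACME_PREFIX):])
        return Response(200, key_auth) if key_auth else Response(404, "no such challenge")
    tenant = TENANTS.get(host)
    if tenant is None:
        # Unknown hosts never fall back to another tenant's content.
        return Response(404, "unknown host")
    return Response(200, f"render {path} for {tenant}")
```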

This automation effectively eliminates the profound DevOps overhead historically associated with bespoke certificate generation, manual installation, and tracking expiration dates across disparate client portfolios.

SEO Optimization and Staging Environment Parity

A critical component of a professional agency workflow is maintaining strict parity between staging and production environments. Staging environments must mirror production exactly—utilizing identical container configurations, dependency versions, API connections, and CI/CD deployment pipelines—to prevent the ubiquitous and costly “works in staging, fails in production” scenario caused by configuration drift.

However, deploying exact replicas of staging environments introduces severe risks regarding data leakage and SEO indexing dilution. Staging environments should be populated strictly with sanitized, anonymized, or synthetic data—never raw production data—to comply with stringent privacy regulations (like GDPR) and minimize the catastrophic impact of an accidental public exposure. Furthermore, staging domains must be absolutely shielded from search engine crawlers. While robots.txt directives can politely request that bots avoid crawling, robust security implementation requires HTTP Basic Authentication, restrictive firewall ACLs, or Identity-Aware Proxies to completely isolate the staging environment from unauthorized public and algorithmic access.
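Below is a minimal sketch of the HTTP Basic Authentication gate for a staging deployment, written as a framework-neutral Python function. The credentials and the (status, headers) return shape are hypothetical. In practice the check would live in edge middleware or the platform's built-in password protection, and the credentials would come from a secrets store. The function also sets an `X-Robots-Tag: noindex` header as a second signal to any crawler that does get through.

```python
import base64
import hmac

# Hypothetical staging credentials; load them from a secrets store in practice.
STAGING_USER = "client-review"
STAGING_PASSWORD = "change-me"


def staging_gate(headers: dict[str, str]) -> tuple[int, dict[str, str]]:
    """Return (status, response headers) for a request to the staging site."""
    out = {"X-Robots-Tag": "noindex, nofollow"}  # extra signal if a crawler gets through
    auth = next((v for k, v in headers.items() if k.lower() == "authorization"), "")
    scheme, _, encoded = auth.partition(" ")
    if scheme.lower() == "basic":
        try:
            decoded = base64.b64decode(encoded, validate=True).decode("utf-8")
        except ValueError:  # invalid base64 or non-UTF-8 bytes
            decoded = ""
        user, _, password = decoded.partition(":")
        # Compare both fields in constant time, without short-circuiting.
        user_ok = hmac.compare_digest(user.encode(), STAGING_USER.encode())
        pass_ok = hmac.compare_digest(password.encode(), STAGING_PASSWORD.encode())
        if user_ok and pass_ok:
            return 200, out
    out["WWW-Authenticate"] = 'Basic realm="staging", charset="UTF-8"'
    return 401, out
```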

When integrating third-party tools or establishing complex sub-site architectures (such as a marketing blog hosted on a headless CMS but operating alongside a primary e-commerce application), agencies face a critical DNS routing decision: utilizing a CNAME subdomain versus a Reverse Proxy.

Setting up a CNAME record (e.g., blog.agencyclient.com) is technically trivial and gives infrastructure teams a clear logical separation. However, search engines frequently treat subdomains as entities separate from the root domain. As a result, the SEO authority and backlink equity earned by the blog content may accrue to the subdomain rather than strengthening the root domain’s primary search ranking.

Conversely, a Reverse Proxy (e.g., agencyclient.com/blog) is an intermediary server that intercepts requests for a specific subdirectory. It fetches the content from the external host and serves it back to the user under the primary domain. To Google and other search engines, the content appears native to the root domain, which consolidates domain authority and strengthens the root domain’s overall search performance. A reverse proxy is more complex to configure and maintain, whether through edge middleware or dedicated API gateways. Even so, its long-term organic search benefits for agency clients generally make it the stronger architectural choice.
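The core of such a reverse proxy is a mapping from request paths to an upstream origin. The following Python sketch assumes a hypothetical upstream host, `blog-origin.example.net`. It covers only the URL rewrite that edge middleware would perform before fetching. The `/blog` prefix is stripped and the query string is preserved. Non-matching paths, including look-alikes such as `/blogroll`, are left to the primary application.

```python
from __future__ import annotations

from urllib.parse import urlsplit, urlunsplit

# Hypothetical upstream where the headless-CMS blog is actually hosted.
PROXY_ROUTES = {"/blog": "https://blog-origin.example.net"}


def upstream_for(request_url: str, routes: dict[str, str] = PROXY_ROUTES) -> str | None:
    """Return the upstream URL for a proxied path, or None to serve it locally."""
    parts = urlsplit(request_url)
    for prefix, origin in routes.items():
        # Match "/blog" exactly or "/blog/...", but not "/blogroll".
        if parts.path == prefix or parts.path.startswith(prefix + "/"):
            rest = parts.path[len(prefix):] or "/"
            up = urlsplit(origin)
            return urlunsplit((up.scheme, up.netloc, rest, parts.query, ""))
    return None
```

A production proxy must also forward the relevant request headers. It should also ensure that the upstream’s canonical URLs and internal links point at agencyclient.com/blog rather than at the origin host. Otherwise, search engines may attribute the content to the origin.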

Platform Economics and Vendor Evaluation in 2026

The execution of these advanced static workflows relies heavily on the strategic choice of hosting and deployment platform. In the current 2026 market, Vercel, Netlify, and Cloudflare Pages represent the triumvirate of Jamstack infrastructure, each optimizing for different developer experiences, framework affinities, and pricing paradigms. For an agency managing over 100 enterprise clients, the economic scaling mechanics of these platforms are as critical as their underlying technical capabilities.

Free Tier Capabilities (Proof of Concepts and Internal Tooling)

Agencies frequently utilize platform free tiers to spin up rapid proof-of-concepts, internal tools, or very small client landing pages. Understanding the constraints of these tiers prevents unexpected project blockage.

| Feature | Vercel (Free) | Netlify (Free) | Cloudflare Pages (Free) |
|---|---|---|---|
| Bandwidth | 100GB / month | 100GB / month | Unlimited |
| Build Allowance | 6,000 minutes / month | 300 minutes / month | 500 builds / month |
| Serverless Invocations | 100,000 / month | 125,000 / month | 100,000 requests / day (Workers Free limit) |
| Team Members Allowed | 1 | 1 | Unlimited |

Pro Tier Pricing and Cost at Scale

The true operational cost of a platform is revealed when scaling an agency’s portfolio. The differences in pricing models heavily dictate long-term profitability.

| Feature / Metric | Vercel (Pro Tier) | Netlify (Pro Tier) | Cloudflare Pages (Pro Tier) |
|---|---|---|---|
| Pricing Model | $20 per user / month | $19 per user / month | $20 per project / month |
| Bandwidth Limits | 1TB / month | 1TB / month | Unlimited |
| Build Allowance | 24,000 minutes / month | 25,000 minutes / month | 5,000 builds / month |
| Team Members | Unlimited (per-seat cost) | Unlimited (per-seat cost) | 5 included |
| Analytics | Included | $9 add-on | Free |
| Estimated Cost at Scale (500GB / 10K builds) | ~$150+ | ~$99 | ~$20 |
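The difference between the billing models is clearest when base fees are separated from usage. The Python sketch below uses only the list prices from the Pro tier table. It ignores bandwidth overages, add-ons, and Cloudflare’s 5-member allowance, and it treats seats and projects as the only inputs. It illustrates how each model scales rather than predicting an actual invoice.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class ProTier:
    name: str
    per_seat_usd: float = 0.0     # billed per team member per month
    per_project_usd: float = 0.0  # billed per project per month


# List prices from the Pro tier table; overages, add-ons, and seat caps are ignored.
PRO_TIERS = {
    "vercel": ProTier("Vercel", per_seat_usd=20),
    "netlify": ProTier("Netlify", per_seat_usd=19),
    "cloudflare": ProTier("Cloudflare Pages", per_project_usd=20),
}


def base_monthly_fee(tier: ProTier, seats: int, projects: int) -> float:
    """Subscription fee before any usage-based charges."""
    return tier.per_seat_usd * seats + tier.per_project_usd * projects


if __name__ == "__main__":
    for seats in (1, 5, 10):
        fees = {key: base_monthly_fee(t, seats, projects=1) for key, t in PRO_TIERS.items()}
        print(seats, fees)
```

With 10 seats and a single project, `base_monthly_fee` yields $200 for Vercel, $190 for Netlify, and $20 for Cloudflare Pages. Per-seat fees grow with headcount, whereas per-project fees grow with the number of projects in the portfolio.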

Vercel: The Next.js Optimization Engine

Vercel is the natural choice for agencies building heavily on the Next.js framework. Because it maintains both the open-source framework and the proprietary hosting platform, Vercel offers zero-configuration deployments that automatically optimize rendering strategies such as Partial Prerendering (PPR) and Incremental Static Regeneration (ISR) out of the box. Its developer experience is highly polished. It features robust preview deployments, integrated analytics, and Fluid compute optimizations, including bytecode caching and predictive instance warming, which substantially reduce cold starts.
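Incremental Static Regeneration follows a stale-while-revalidate pattern. A page is served from cache until its revalidation window has elapsed. The next request after that still receives the cached copy, while a fresh version is generated for subsequent visitors. The toy Python model below illustrates that behavior only and is not Vercel’s or Next.js’s implementation. It regenerates synchronously rather than in the background, and `render` is a hypothetical stand-in for the page build.

```python
from __future__ import annotations

import time
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class IsrCache:
    """Toy stale-while-revalidate cache in the spirit of ISR."""
    render: Callable[[str], str]   # hypothetical page builder: path -> HTML
    revalidate_s: float            # how long a generated page counts as fresh
    clock: Callable[[], float] = time.monotonic
    _pages: dict[str, tuple[str, float]] = field(default_factory=dict, init=False, repr=False)

    def get(self, path: str) -> str:
        now = self.clock()
        cached = self._pages.get(path)
        if cached is None:
            # Never generated: build it now (a real site would usually prebuild at deploy time).
            html = self.render(path)
            self._pages[path] = (html, now)
            return html
        html, generated_at = cached
        if now - generated_at >= self.revalidate_s:
            # Stale: regenerate for later visitors, but still answer with the old copy.
            # (ISR does this in the background; this sketch does it inline.)
            self._pages[path] = (self.render(path), now)
        return html
```

Suppose the revalidation window is 60 seconds. A request at second 61 still receives the old page, and only the request after it sees the regenerated version.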

However, Vercel’s costs can escalate quickly at scale because of its complex pricing matrix. Vercel bills on a per-user basis, so an agency with a large development team incurs significant base costs ($20 per developer per month) before any traffic overage fees. Furthermore, advanced security features and Web Application Firewall (WAF) capabilities are often gated behind Enterprise plans. Together, these factors generally make Vercel the most expensive option for high-volume portfolios.

Netlify: The Collaborative Ecosystem Pioneer

Netlify pioneered the original Jamstack philosophy and remains one of the most flexible, framework-agnostic platforms available. It excels in generalized agency workflows by offering an expansive ecosystem of open-source build plugins and deeply integrated proprietary services. Vercel often requires bespoke serverless code or third-party integrations for ancillary features. Netlify, by contrast, provides built-in form handling, server-side A/B testing, and native identity management. This native integration significantly reduces reliance on external SaaS vendors, which streamlines the development lifecycle and limits vendor sprawl.
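Server-side A/B testing depends on assigning each visitor to the same variant across requests. The sketch below shows one common technique for doing so, deterministic hash bucketing. It does not describe how Netlify implements split testing. The visitor ID (for example, a value read from a first-party cookie) and the experiment name are assumptions.

```python
from __future__ import annotations

import hashlib


def assign_variant(visitor_id: str, experiment: str, weights: dict[str, float]) -> str:
    """Map a visitor to a variant deterministically so repeat visits stay sticky."""
    total = sum(weights.values())
    if total <= 0:
        raise ValueError("weights must sum to a positive number")
    digest = hashlib.sha256(f"{experiment}:{visitor_id}".encode("utf-8")).digest()
    # Interpret the first 8 bytes as an integer and scale it into [0, total).
    point = int.from_bytes(digest[:8], "big") / 2**64 * total
    cumulative = 0.0
    chosen = ""
    for chosen, weight in weights.items():
        cumulative += weight
        if point < cumulative:
            return chosen
    return chosen  # only reached through floating-point rounding at the top edge
```

Assignment is a pure function of the experiment name and visitor ID. As a result, any edge node can compute it without shared state, and renaming the experiment reshuffles visitors for a new test.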

Netlify’s collaboration tools are particularly agency-friendly. Netlify generates shareable deploy preview URLs that let clients review staging content without platform authentication, which radically simplifies the client feedback loop. Like Vercel, Netlify uses a per-user pricing model ($19 per seat) with fixed bandwidth limits (1TB). High-traffic, media-heavy client portfolios therefore need careful monitoring to avoid overage charges. Furthermore, features such as Analytics require additional à la carte payments.

Cloudflare Pages: Unmatched Scale and Edge Economics

Cloudflare Pages disrupts the traditional hosting model by leveraging Cloudflare’s massive global network, which spans over 300 data centers, to deliver static assets with low latency worldwide. Cloudflare’s primary differentiator is its economic model: it does not charge for bandwidth and offers unlimited egress even on its free tier.

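For an agency, this translates to far more predictable hosting costs. The Pro plan is billed at $20 per project rather than per user, with a base team of 5 and unlimited bandwidth included. Consider a portfolio generating 500GB of traffic and 10,000 builds monthly. The estimates above put it at approximately $20 per month on Cloudflare, compared with roughly $99 on Netlify and $150+ on Vercel. Because Vercel and Netlify bill per seat, the gap widens with headcount. A 10-person team pays $200 per month on Vercel’s Pro tier and $190 on Netlify’s in seat fees alone, before any usage charges.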

Furthermore, Cloudflare integrates deeply with its wider, industry-leading security ecosystem. Client sites automatically benefit from enterprise-grade DDoS mitigation, a highly configurable Web Application Firewall (WAF), and Cloudflare Workers for edge compute. Workers utilize V8 isolates rather than traditional containerized Node.js environments. This architectural choice dramatically reduces cold start times to single-digit milliseconds and executes logic directly at the edge node closest to the user.

The trade-off for this performance and cost-efficiency is a steeper learning curve. Migrating a legacy application that depends heavily on native Node.js APIs to Cloudflare’s V8 isolate environment requires architectural adjustments. The platform also lacks the out-of-the-box Next.js synergy that Vercel provides natively. Even so, for high-traffic portfolios where cost control and global performance are paramount, Cloudflare is a compelling infrastructure solution.